For a while now, edge computing on the Raspberry Pi has had its ups and downs, especially with the trend of putting AI in everything. On its own, the Raspberry Pi struggles to run AI applications reliably. Typical object detection on the Raspberry Pi yields about 1 – 2 fps depending on the model, because all of the processing is done on the CPU. However, this poor performance has recently been addressed through AI accelerators. One of these accelerators is the Intel Neural Compute Stick 2 (NCS2), which is capable of around 8 – 15 fps depending on your application. The NCS2 is based on the Myriad X VPU, and so is a powerful AI module for edge computing called DepthAI. Essentially, DepthAI is an embedded platform that combines depth perception and AI.

DepthAI was conceived by Luxonis, initially out of the need to improve bike safety by creating an AI bike light that detects vehicles approaching from behind and helps prevent crashes. It can also be used in health and safety, agriculture, food processing, manufacturing, mining, and more. DepthAI works by combining depth perception, object detection (neural inference), and object tracking, and exposes that power through a simple, easy-to-use Python API. The company says:

“Our ultimate goal is to develop a rear-facing AI vision alert system for cyclists that can help prevent them being hit by cars. On the path to building this, we developed hardware around the Myriad X, which we think could have huge value for makers, micro-factories, builders, and anyone who needs any combination of disparity depth and AI running in real time.”

The core of the device is the Myriad X SoM (system on module), built around the same Myriad X VPU that powers the Intel Neural Compute Stick 2. Note that the NCS2 leaves some of the Myriad X's capabilities unused; for example, as the image below shows, the stereo lanes are unused, along with some other features. Through custom integration, however, DepthAI unlocks the full power of the Myriad X and makes use of its four trillion operations per second of vision processing capability.

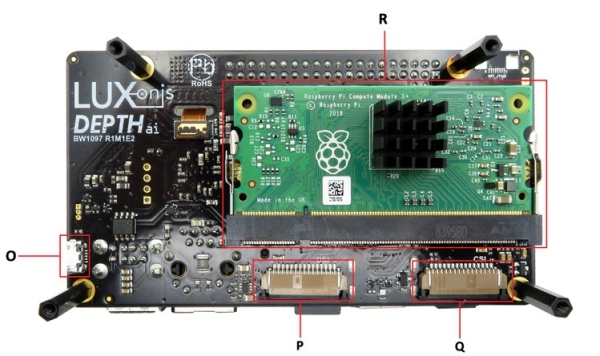

Objects can be localized in the physical world on the module at 25 fps, yielding their x, y, z (Cartesian) coordinates in meters. Two MIPI cameras spaced apart make this possible. The module also comes with an accompanying RGB camera. Apart from the module itself, DepthAI is available in three variants: a fully integrated solution with on-board cameras, powered by the Raspberry Pi Compute Module 3+, that works on boot-up for easy prototyping; a Raspberry Pi HAT with modular/remote cameras; and a USB3 version that is usable with any host.
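To make the stereo localization described above more concrete, here is a minimal sketch of the standard pinhole stereo model, in which depth is recovered from the disparity between the two camera views. This is not DepthAI's own implementation or API: the `StereoRig` class, the `localize` function, and every calibration value below are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class StereoRig:
    """Hypothetical calibration values; real ones come from camera calibration."""
    focal_px: float    # focal length, in pixels
    baseline_m: float  # distance between the two cameras, in meters
    cx: float          # principal point x, in pixels
    cy: float          # principal point y, in pixels


def localize(rig: StereoRig, u: float, v: float,
             disparity_px: float) -> Tuple[float, float, float]:
    """Return (x, y, z) in meters for pixel (u, v) with the given disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    # Depth is inversely proportional to disparity: z = f * B / d
    z = rig.focal_px * rig.baseline_m / disparity_px
    # Back-project the pixel through the pinhole model to get x and y
    x = (u - rig.cx) * z / rig.focal_px
    y = (v - rig.cy) * z / rig.focal_px
    return x, y, z


# Assumed example: a 10 cm baseline and a 40-pixel disparity
rig = StereoRig(focal_px=800.0, baseline_m=0.1, cx=640.0, cy=360.0)
x, y, z = localize(rig, u=840.0, v=360.0, disparity_px=40.0)
print(f"x={x:.2f} m, y={y:.2f} m, z={z:.2f} m")  # x=0.50 m, y=0.00 m, z=2.00 m
```

In a setup like the one described, the pixel position would come from an object detector's bounding box and the disparity from matching the two stereo views, which is why the cameras need to be spaced apart: with no baseline there is no disparity to measure.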

The Raspberry Pi Compute Module Edition is a complete package. It comes with everything you need: pre-calibrated on-board stereo cameras, a 4K, 60 Hz color camera, and a microSD card with Raspbian and the DepthAI Python code, which runs automatically on boot-up. The Pi HAT Edition lets users attach the module to a Raspberry Pi as a HAT, with the cameras connecting to the HAT itself. The USB3 Edition, however, lets you use DepthAI with any platform over a single USB connection. DepthAI provides the following features: object localization, object detection, depth video or image, color video or image, and stereo pair video or image. Just like the NCS2, DepthAI works with OpenVINO for optimizing neural models. This lets you train models with popular frameworks such as TensorFlow and Keras, then use OpenVINO to optimize them to run efficiently and at low latency on DepthAI/Myriad X.
