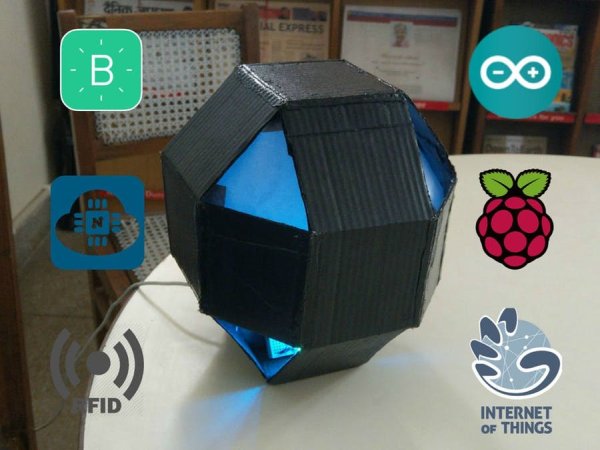

Octopod is a uniquely shaped, full automation system that lets you monitor your home or industry and keep it secure with AI and smart RFID locks.

Things used in this project

Hardware components

Arduino UNO & Genuino UNO

Arduino MKR WiFi 1010

Either this or any other WiFi ESP8266/ESP32 board. This was not available in my country, so I went with the NodeMCU ESP8266.

Maxim Integrated MAX32630FTH

You can choose between the MAX32620FTHR, the Arduino MKR WiFi 1010, or any ESP8266 board. With this board you will need an external WiFi module or an ESP8266 chip for Internet access.

Raspberry Pi Zero Wireless

You can use normal Raspi 2/3 too!

DHT11 Temperature & Humidity Sensor

Seeed Grove – Gas Sensor(MQ2)

SparkFun Soil Moisture Sensor (with Screw Terminals)

PIR Motion Sensor (generic)

Optional

RFID reader (generic)

Relay (generic)

2 channel preferably

RGB Diffused Common Cathode

Raspberry Pi Camera Module

Buzzer

HC-05 Bluetooth Module

Optional

LED (generic)

Wall Adapter/ Power Bank

Memory Card

More than 4 GB, preferably Class 10 (required for the Raspberry Pi OS)

Software apps and online services

Blynk

OpenCV

Hand tools and fabrication machines

Hot glue gun (generic)

3D Printer (generic)

Optional

Hand Tools

Needle-nose pliers, scissors, cutter, etc.

Story

A Short Video Demonstration

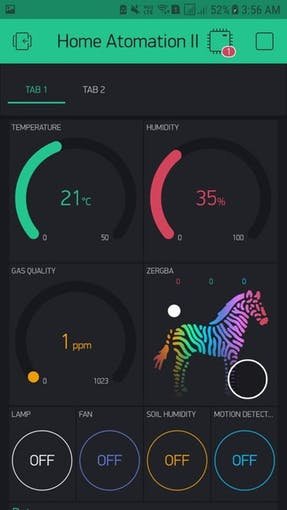

There are many IoT automation projects out there, but trust me, there is nothing like this! Octopod is made using a NodeMCU (or a MAX32620FTHR or Arduino MKR 1010), an Arduino Uno, and a Raspberry Pi 3. Octopod allows you to make your home smart. It sends you a variety of data, like the temperature, humidity, and gas quality inside your home, office, or industry. It sends you a notification whenever it detects any sort of motion inside, and tells you when you need to water your plants. You can also control your appliances through a Blynk application on your smartphone. Octopod even enables true mood lighting!

Octopod is equipped with a tiny little camera, which sends you live feed. This camera also uses artificial intelligence to detect humans in its sight and sends you their pictures. In addition, it features an RFID door locking system! Awesome, right?

Human Detection using Rpi Using System

How Does Everything Work?

The NodeMCU is connected to a bunch of sensors, a relay module, and RGB LEDs. It is connected over WiFi to the Blynk app on a smartphone, which receives all the data and allows you to control your home.

Raspberry Pi is also connected to WiFi, which lets you see the live feed via the Pi Camera. We have also installed OpenCV libraries on the Pi, and configured the Pi to detect any human beings in its sight and email you their images.

The smart door unit uses an RFID module. When the permitted RFID is brought within its range, it automatically opens the door.

STEP 1: Coding Main Octopod

I have added comments on almost every line, so that you don't just copy the code but also understand it. Here, I will tell you in a nutshell what actually happens when the code is executed!

- Including the Libraries:

This code uses 2 main libraries: the Blynk library, which makes the code compatible with the Blynk application, and the DHT11 temperature library, which converts the raw data from the sensor into temperature and humidity. To download these libraries, just go to the links given in the code and download them. Then head to Arduino IDE → Sketch → Include Library → Add .ZIP Library, and select your downloaded libraries.

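    #define BLYNK_PRINT Serial      // Send Blynk debug output to the serial monitor (define before including Blynk)

    #include <ESP8266WiFi.h>        // ESP8266 WiFi library (header name assumed for a NodeMCU ESP8266)
    #include <BlynkSimpleEsp8266.h> // Include Blynk library (header name assumed for a NodeMCU ESP8266)
    #include <DHT.h>                // Include DHT sensor library (header name assumed)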

This is some Blynk code that helps you connect your NodeMCU to the Internet and then authenticate it with your app.

    // You should get the Auth Token in the Blynk App.
    // Go to the Project Settings (nut icon).
    char auth[] = "Your Auth Key";

    // Your WiFi credentials.
    // Set password to "" for open networks.
    char ssid[] = "Your WiFi SSID";
    char pass[] = "Your WiFi Pass";

- Defining Pins and Integers:

In this segment we define the pins of our various sensors. You can change them at your convenience. We also define some integers that we use during the course of our code.

    #define DHTPIN 2   // What digital pin the temperature and humidity sensor is connected to
    #define soilPin 4  // What digital pin the soil moisture sensor is connected to
    #define gasPin A0  // What analog pin the gas sensor is connected to
    #define pirPin 12  // What digital pin the PIR motion sensor is connected to

    int pirValue;      // Place to store the read PIR value
    int soilValue;     // Place to store the read soil moisture value
    int PIRpinValue;   // Place to store the value sent by Blynk App pin V0
    int SOILpinValue;  // Place to store the value sent by Blynk App pin V1

- BLYNK_WRITE() :

With this code we tell the Blynk app that it can use Pin V0 and Pin V1 to tell the code if Motion Detection and Soil Moisture test are turned ON.

    BLYNK_WRITE(V0) // V0 pin from the Blynk app tells if Motion Detection is ON
    {
      PIRpinValue = param.asInt();
    }

    BLYNK_WRITE(V1) // V1 pin from the Blynk app tells if Soil Moisture is ON
    {
      SOILpinValue = param.asInt();
    }

- void sendSensor() :

This code takes the data from the DHT11, makes it usable, and then sends the humidity to pin V5 and the temperature to pin V6.

    void sendSensor()
    {
      float h = dht.readHumidity();    // float, so that a failed read (NaN) can be detected
      float t = dht.readTemperature(); // or dht.readTemperature(true) for Fahrenheit

      if (isnan(h) || isnan(t)) {
        Serial.println("Failed to read from DHT sensor!"); // skip this cycle if the sensor returned no valid reading
        return;
      }

      // You can send any value at any time.
      // Please don't send more than 10 values per second.
      Blynk.virtualWrite(V5, h); // send humidity to pin V5
      Blynk.virtualWrite(V6, t); // send temperature to pin V6
    }

- void getPirValue() & void getSoilValue() :

Each function reads the digital value from its sensor, then runs an if condition to check the state of the sensor. If the sensor is in the required state, it pushes a notification through the Blynk app.

    void getPirValue(void)
    {
      pirValue = digitalRead(pirPin);
      if (pirValue) // digital pin of the PIR sensor goes HIGH on human detection
      {
        Serial.println("Motion detected");
        Blynk.notify("Motion detected");
      }
    }

    void getSoilValue(void)
    {
      soilValue = digitalRead(soilPin);
      if (soilValue == HIGH) // this code treats HIGH as dry soil; check your module's output polarity
      {
        Serial.println("Water Plants");
        Blynk.notify("Water Plants");
      }
    }

- void setup() :

In the setup, we do a couple of things that are only meant to be done once: starting serial communication at a fixed baud rate, authorizing this code with the Blynk application, beginning the DHT sensor readings, tweeting to your Twitter handle that your smart home project is online, and telling the NodeMCU that the PIR pin and the soil sensor pin are meant to take input only.

    void setup()
    {
      // Debug console
      Serial.begin(9600);

      Blynk.begin(auth, ssid, pass);
      // You can also specify server:
      //Blynk.begin(auth, ssid, pass, "blynk-cloud.com", 8442);
      //Blynk.begin(auth, ssid, pass, IPAddress(192,168,1,100), 8442);

      dht.begin(); // Begins DHT reading

      Blynk.tweet("OCTOPOD IS ONLINE! "); // Tweeting on your Twitter handle that your project is online

      pinMode(pirPin, INPUT);  // Defining that the PIR pin is meant to take input only
      pinMode(soilPin, INPUT); // Defining that the soil sensor pin is meant to take input only

      // Setup a function to be called every second
      timer.setInterval(1000L, sendSensor);
    }

- void loop() :

In the loop, we write things that are to be done over and over. Here, we make sure that the Blynk and timer code set up earlier keeps running. Then, we write two if statements that check the states of pins V0 and V1 and take the values from the sensors accordingly.

    void loop()
    {
      Blynk.run();
      timer.run();

      if (PIRpinValue == HIGH) // V0 pin from the Blynk app tells if Motion Detection is ON
      {
        getPirValue();
      }

      if (SOILpinValue == HIGH) // V1 pin from the Blynk app tells if Soil Moisture is ON
      {
        getSoilValue();
      }
    }

STEP 2: Coding the RFID Smart Lock

To be honest, this is simple code and doesn't need much explanation, but I will still tell you in a nutshell what it does. There are two versions of the code. One is for connecting the door unit to Bluetooth, so that it tells you via a serial terminal when your door is opened. The other sends its output over serial, so it can be viewed when you connect your Arduino to your computer. I prefer the simple version without Bluetooth, though. So here we go!

- Go to Sketch → Include Library → Manage Libraries, type MFRC522 in the search bar, and install the library. Then go to File → Examples → Custom Libraries → MFRC522 → DumpInfo sketch. The comments at the start explain how to connect the pins (or refer to the picture). Run the code, open the serial monitor, bring one of your RFID cards in front of the MFRC522 module, and wait for 5 seconds. Then note the card's UID, and in the same way note the UIDs of your other cards and key chains.

- Then download whichever code you like. Open the code and go to this line. In place of the X's, add the UID of the card that you want to use to open the door. Now you are ready; just upload the code.

if (content.substring(1) == "XX XX XX XX") {

}

There are two main things that we do in this code, both in the if-else part. In the if branch, we tell the Arduino that if the UID of the card matches the UID mentioned, it should move the servo (so that the door opens), blink some LEDs, and make some sounds using the buzzer. Otherwise, if the UIDs don't match, it blinks some LEDs and makes some sounds using the buzzer.

STEP 3: Raspberry Pi Human Detection AI Setup

In this guided step, we are going to learn how to make a smart security camera. The camera will send you an email whenever it detects a human, and if you are on the same WiFi network, you can access the live footage from the camera by typing the IP address of your Raspberry Pi. I will show you how to create the smart camera from scratch. Let's go!

Requirements :

1. OpenCV (Open Source Computer Vision Library)

2. Raspberry Pi 3B

3. Raspberry Pi Camera V2

Assumptions:

1. Raspberry Pi 3 with Raspbian Stretch installed. If you don’t already have the Raspbian Stretch OS, you’ll need to upgrade your OS to take advantage of Raspbian Stretch’s new features.

To upgrade your Raspberry Pi 3 to Raspbian Stretch, you may download it here and follow these upgrade instructions (or these for the NOOBS route which is recommended for beginners).

Note: If you are upgrading your Raspberry Pi 3 from Raspbian Jessie to Raspbian Stretch, there is the potential for problems. Proceed at your own risk, and consult the Raspberry Pi forums for help. Important: It is my recommendation that you proceed with a fresh install of Raspbian Stretch! Upgrading from Raspbian Jessie is not recommended.

2. Physical access to your Raspberry Pi 3 so that you can open up a terminal and execute commands, or remote access via SSH or VNC. I’ll be doing the majority of this tutorial via SSH, but as long as you have access to a terminal, you can easily follow along.

I finished this project in almost 5 hours. The installation of OpenCV took almost 3 hours.

- Step 1: ATTACHING CAMERA TO RASPBERRY PI 3

1. Open up your Raspberry Pi Camera module. Be aware that the camera can be damaged by static electricity. Before removing the camera from its grey anti-static bag, make sure you have discharged yourself by touching an earthed object (e.g. a radiator or PC Chassis).

2. Install the Raspberry Pi Camera module by inserting the cable into the Raspberry Pi. The cable slots into the connector situated between the Ethernet and HDMI ports, with the silver connectors facing the HDMI port.

3. Boot up your Raspberry Pi.

4. From the prompt, run “sudo raspi-config”. If the “camera” option is not listed, you will need to run a few commands to update your Raspberry Pi. Run “sudo apt-get update” and “sudo apt-get upgrade”

5. Run “sudo raspi-config” again – you should now see the “camera” option.

COMMAND-

$ sudo raspi-config

6. Navigate to the “camera” option and enable it (look under Interfacing Options). Select “Finish” and reboot your Raspberry Pi, or just type the following:

$ sudo reboot
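Once the Pi has rebooted, you can confirm that the camera was picked up. The sketch below assumes the `vcgencmd get_camera` tool that ships with Raspbian, which prints a status line such as `supported=1 detected=1`. The helper name `camera_ready` is hypothetical and only inspects that line.

```shell
# camera_ready STATUS_LINE
# Succeeds only if the status line reports the camera as both supported
# and detected. STATUS_LINE is expected in the form printed by
# `vcgencmd get_camera`, for example "supported=1 detected=1".
camera_ready() {
    case "$1" in
        *supported=1*detected=1*) return 0 ;;
        *) return 1 ;;
    esac
}

# Usage on the Pi (not executed here):
#   camera_ready "$(vcgencmd get_camera)" && echo "Camera OK"
```

If the check fails, re-seating the ribbon cable and re-running raspi-config is usually the first thing to try.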

- Step 2 : OPEN CV INSTALLATION

If this is your first time installing OpenCV, or you are just getting started with Raspbian Stretch, this is the perfect tutorial for you.

Step #1: Expand filesystem

Are you using a brand new install of Raspbian Stretch? If so, the first thing you should do is expand your filesystem to include all available space on your micro-SD card:

COMMAND-

$ sudo raspi-config

Then select the “Advanced Options” menu item, followed by “Expand filesystem”. Once prompted, select the first option, “A1. Expand File System”, hit Enter on your keyboard, arrow down to the “Finish” button, and then reboot your Pi.

If you are using an 8GB card, you may be using close to 50% of the available space, so one simple thing to do is to delete both LibreOffice and the Wolfram engine to free up some space on your Pi:

COMMAND-

$ sudo apt-get purge wolfram-engine

$ sudo apt-get purge libreoffice*

$ sudo apt-get clean

$ sudo apt-get autoremove

After removing the Wolfram Engine and LibreOffice, you can reclaim almost 1GB!

Step #2: Install dependencies

This isn’t the first time I’ve discussed how to install OpenCV on the Raspberry Pi, so I’ll keep these instructions on the briefer side, allowing you to work through the installation process: I’ve also included the amount of time it takes to execute each command (some depend on your Internet speed) so you can plan your OpenCV + Raspberry Pi 3 install accordingly (OpenCV itself takes approximately 4 hours to compile — more on this later). The first step is to update and upgrade any existing packages:

COMMAND-

$ sudo apt-get update && sudo apt-get upgrade

We then need to install some developer tools, including CMake, which helps us configure the OpenCV build process:

COMMAND-

$ sudo apt-get install build-essential cmake pkg-config

Next, we need to install some image I/O packages that allow us to load various image file formats from disk. Examples of such file formats include JPEG, PNG, TIFF, etc.:

COMMAND-

$ sudo apt-get install libjpeg-dev libtiff5-dev libjasper-dev libpng12-dev

Just as we need image I/O packages, we also need video I/O packages. These libraries allow us to read various video file formats from disk as well as work directly with video streams

COMMAND-

$ sudo apt-get install libavcodec-dev libavformat-dev libswscale-dev libv4l-dev

$ sudo apt-get install libxvidcore-dev libx264-dev

The OpenCV library comes with a sub-module named highgui which is used to display images to our screen and build basic GUIs. In order to compile the highgui module, we need to install the GTK development library:

COMMAND-

$ sudo apt-get install libgtk2.0-dev libgtk-3-dev

Many operations inside of OpenCV (namely matrix operations) can be optimized further by installing a few extra dependencies:

COMMAND-

$ sudo apt-get install libatlas-base-dev gfortran

These optimization libraries are especially important for resource-constrained devices such as the Raspberry Pi. Lastly, let’s install both the Python 2.7 and Python 3 header files so we can compile OpenCV with Python bindings:

COMMAND-

$ sudo apt-get install python2.7-dev python3-dev

If you’re working with a fresh install of the OS, it is possible that these versions of Python are already at the newest version (you’ll see a terminal message stating this). If you skip this step, you may notice an error related to the Python.h header file not being found when running make to compile OpenCV.

Step #3: Download the OpenCV source code

Now that we have our dependencies installed, let’s grab the 3.3.0 archive of OpenCV from the official OpenCV repository. This version includes the dnn module which we discussed in a previous post where we did Deep Learning with OpenCV (Note: As future versions of openCV are released, you can replace 3.3.0 with the latest version number):

COMMAND-

$ cd ~

$ wget -O opencv.zip https://github.com/Itseez/opencv/archive/3.3.0.zip

$ unzip opencv.zip

We’ll want the full install of OpenCV 3 (to have access to features such as SIFT and SURF, for instance), so we also need to grab the opencv_contrib repository:

COMMAND-

$ wget -O opencv_contrib.zip https://github.com/Itseez/opencv_contrib/archive/…>>3.3.0

$ unzip opencv_contrib.zip

The .zip in the 3.3.0.zip may appear to be cut off in some browsers. The full URL of the OpenCV 3.3.0 archive is: https://github.com/Itseez/opencv_contrib/archive/…

Note: Make sure your opencv and opencv_contrib versions are the same (in this case, 3.3.0). If the version numbers do not match up, then you’ll likely run into either compile-time or runtime errors.

Step #4: Python 2.7 or Python 3?

Before we can start compiling OpenCV on our Raspberry Pi 3, we first need to install pip, a Python package manager:

COMMAND-

$ wget https://bootstrap.pypa.io/get-pip.py

$ sudo python get-pip.py

$ sudo python3 get-pip.py

You may get a message that pip is already up to date when issuing these commands, but it is best not to skip this step. If you’re a longtime PyImageSearch reader, then you’ll know that I’m a huge fan of both virtualenv and virtualenvwrapper.

Installing these packages is not a requirement and you can absolutely get OpenCV installed without them, but that said, I highly recommend you install them as other existing PyImageSearch tutorials (as well as future tutorials) also leverage Python virtual environments.

I’ll also be assuming that you have both virtualenv and virtualenvwrapper installed throughout the remainder of this guide. So, given that, what’s the point of using virtualenv and virtualenvwrapper? First, it’s important to understand that a virtual environment is a special tool used to keep the dependencies required by different projects in separate places by creating isolated, independent Python environments for each of them. In short, it solves the “Project X depends on version 1.x, but Project Y needs 4.x” dilemma.

It also keeps your global site-packages neat, tidy, and free from clutter. If you would like a full explanation on why Python virtual environments are good practice, absolutely give this excellent blog post on RealPython a read. It’s standard practice in the Python community to be using virtual environments of some sort, so I highly recommend that you do the same:

COMMAND-

$ sudo pip install virtualenv virtualenvwrapper

$ sudo rm -rf ~/.cache/pip

Now that both virtualenv and virtualenvwrapper have been installed, we need to update our ~/.profile file. Open it in an editor:

COMMAND-

$ nano ~/.profile

Copy and paste the following lines at the bottom of the file:

COMMAND-

# virtualenv and virtualenvwrapper

export WORKON_HOME=$HOME/.virtualenvs

source /usr/local/bin/virtualenvwrapper.sh

OR

You can simply use echo and output redirection to handle updating ~/.profile:

COMMAND-

$ echo -e "\n# virtualenv and virtualenvwrapper" >> ~/.profile

$ echo "export WORKON_HOME=$HOME/.virtualenvs" >> ~/.profile

$ echo "source /usr/local/bin/virtualenvwrapper.sh" >> ~/.profile
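One caveat with appending via `>>` is that running the commands twice leaves duplicate lines in ~/.profile. A minimal sketch of a guard, using a hypothetical helper named `append_once` that is not part of virtualenvwrapper, could look like this:

```shell
# append_once LINE FILE
# Appends LINE to FILE unless FILE already contains an identical line.
# grep -x matches whole lines only, -F treats LINE as a fixed string.
append_once() {
    grep -qxF -- "$1" "$2" 2>/dev/null || printf '%s\n' "$1" >> "$2"
}

# Usage (not executed here):
#   append_once 'export WORKON_HOME=$HOME/.virtualenvs' ~/.profile
#   append_once 'source /usr/local/bin/virtualenvwrapper.sh' ~/.profile
```

The single quotes in the usage lines keep `$HOME` literal, so it is expanded each time the profile is loaded rather than once at write time.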

Now that we have our ~/.profile updated, we need to reload it to make sure the changes take effect. You can force a reload of your ~/.profile file by:

- Logging out and then logging back in

- Closing a terminal instance and opening up a new one

- Or my personal favourite, running the source command:

COMMAND-

$ source ~/.profile

Note: I recommend running the source ~/.profile command each time you open up a new terminal to ensure your system variables have been set up correctly.

Creating your Python virtual environment

Next, let’s create the Python virtual environment that we’ll use for computer vision development:

COMMAND-

$ mkvirtualenv cv -p python2

This command will create a new Python virtual environment named cv using Python 2.7.

If you instead want to use Python 3, you’ll want to use this command instead:

COMMAND-

$ mkvirtualenv cv -p python3

Again, I can’t stress this point enough: the cv Python virtual environment is entirely independent and sequestered from the default Python version included in the download of Raspbian Stretch.

Any Python packages in the global site-packages directory will not be available to the cv virtual environment. Similarly, any Python packages installed in site-packages of cv will not be available to the global install of Python.

Keep this in mind when you’re working in your Python virtual environment and it will help avoid a lot of confusion and headaches.

How to check if you’re in the “cv” virtual environment

If you ever reboot your Raspberry Pi, log out and log back in, or open up a new terminal, you’ll need to use the workon command to re-access the cv virtual environment.

In previous blog posts, I’ve seen readers use the mkvirtualenv command here; this is entirely unneeded! The mkvirtualenv command is meant to be executed only once: to actually create the virtual environment. After that, you can use workon and you’ll be dropped down into your virtual environment:

COMMAND-

$ source ~/.profile

$ workon cv

To validate and ensure you are in the cv virtual environment, examine your command line. If you see the text (cv) preceding your prompt, then you are in the cv virtual environment.

Otherwise, if you do not see the (cv) text, then you are not in the cv virtual environment. To fix this, simply execute the source and workon commands mentioned above.

Assuming you’ve made it this far, you should now be in the cv virtual environment (which you should stay in for the rest of this tutorial).
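The prompt check can also be scripted. This is a minimal sketch that relies on the `VIRTUAL_ENV` variable, which virtualenv's activation script sets to the path of the active environment. The helper name `in_cv_env` is hypothetical.

```shell
# in_cv_env
# Succeeds if the currently active virtual environment is named "cv".
# VIRTUAL_ENV holds the environment's path; its last path component
# is the environment name.
in_cv_env() {
    env_path="${VIRTUAL_ENV:-}"
    [ "${env_path##*/}" = "cv" ]
}

# Usage (not executed here):
#   in_cv_env || echo "Run: source ~/.profile && workon cv"
```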

Step #4 : Installing NumPy on your Raspberry Pi

Our only Python dependency is NumPy, a Python package used for numerical processing:

COMMAND-

$ pip install numpy

The NumPy installation can take a bit of time.

Step #5: Compile and Install OpenCV

COMMAND-

$ workon cv

Once you have ensured you are in the cv virtual environment, we can setup our build using CMake:

COMMAND-

$ cd ~/opencv-3.3.0/

$ mkdir build

$ cd build

$ cmake -D CMAKE_BUILD_TYPE=RELEASE \
    -D CMAKE_INSTALL_PREFIX=/usr/local \
    -D INSTALL_PYTHON_EXAMPLES=ON \
    -D OPENCV_EXTRA_MODULES_PATH=~/opencv_contrib-3.3.0/modules \
    -D BUILD_EXAMPLES=ON ..
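The cmake call reads from two source trees, and their versions must agree. A small guard can be run before (re-)running CMake, sketched here under the assumption that both archives were unpacked into directories named `<name>-<version>`. The helper name `versions_match` is hypothetical.

```shell
# versions_match DIR1 DIR2
# Succeeds if both directory names end in the same "-<version>" suffix.
# Pass the names without a trailing slash.
versions_match() {
    case "$1" in *-*) ;; *) return 1 ;; esac
    case "$2" in *-*) ;; *) return 1 ;; esac
    v1="${1##*-}"   # text after the last "-" in DIR1
    v2="${2##*-}"   # text after the last "-" in DIR2
    [ "$v1" = "$v2" ]
}

# Usage (not executed here):
#   versions_match ~/opencv-3.3.0 ~/opencv_contrib-3.3.0 || echo "Version mismatch"
```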

Now, before we move on to the actual compilation step, make sure you examine the output of CMake! Start by scrolling down to the sections titled Python 2 and Python 3. If you are compiling OpenCV 3 for Python 2.7, then make sure your Python 2 section includes valid paths to the Interpreter, Libraries, numpy and packages path.

In that case, the Interpreter should point to the python2.7 binary located in the cv virtual environment, and the numpy variable should point to the NumPy installation in the cv environment.

If you are compiling for Python 3 instead, check the Python 3 section: again, the Interpreter should point to the python3.5 binary located in the cv virtual environment, while numpy points to the NumPy install.

In either case, if you do not see the cv virtual environment in these variables paths, it’s almost certainly because you are NOT in the cv virtual environment prior to running CMake! If this is the case, access the cv virtual environment using workon cv and re-run the cmake command outlined above.
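Instead of scrolling through the CMake output, the same check can be run against the CMakeCache.txt file that CMake writes into the build directory. This sketch assumes the interpreter is recorded under a `PYTHON2_EXECUTABLE` or `PYTHON3_EXECUTABLE` entry and that the environment lives under `~/.virtualenvs/cv`. Confirm both against your own cache file. The helper name `cache_uses_cv_env` is hypothetical.

```shell
# cache_uses_cv_env CACHE_FILE
# Succeeds if a Python interpreter entry in the CMake cache points
# into a ".virtualenvs/cv" directory.
cache_uses_cv_env() {
    grep -Eq '^PYTHON[23]_EXECUTABLE[^=]*=.*/\.virtualenvs/cv/' "$1"
}

# Usage from the build directory (not executed here):
#   cache_uses_cv_env CMakeCache.txt || echo "Run 'workon cv' and re-run cmake"
```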

Configure your swap space size before compiling

Before you start the compile process, you should increase your swap space size. This enables OpenCV to compile with all four cores of the Raspberry Pi without the compile hanging due to memory problems.

Open your /etc/dphys-swapfile and then edit the CONF_SWAPSIZE variable:

COMMAND-

$ sudo nano /etc/dphys-swapfile

and then edit the following section of the file:

    # set size to absolute value, leaving empty (default) then uses computed value
    # you most likely don't want this, unless you have an special disk situation
    # CONF_SWAPSIZE=100
    CONF_SWAPSIZE=1024

Notice that I’ve commented out the 100MB line and added a 1024MB line. This is the secret to compiling with multiple cores on Raspbian Stretch. If you skip this step, OpenCV might not compile.

To activate the new swap space, restart the swap service:

COMMAND-

$ sudo /etc/init.d/dphys-swapfile stop

$ sudo /etc/init.d/dphys-swapfile start
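To confirm that the larger swap file is active, you can read the `SwapTotal` line that the kernel exposes in /proc/meminfo (reported in kB). A minimal sketch, with a hypothetical helper named `swap_total_mb`:

```shell
# swap_total_mb [FILE]
# Prints the SwapTotal value from a meminfo-style file, converted from
# kB to whole MB. FILE defaults to /proc/meminfo.
swap_total_mb() {
    awk '/^SwapTotal:/ { print int($2 / 1024) }' "${1:-/proc/meminfo}"
}

# Usage on the Pi (not executed here):
#   echo "Swap: $(swap_total_mb) MB"
```

The reported figure may read a little below the configured size, so compare with a small margin rather than expecting an exact match.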

Note: It is possible to burn out the Raspberry Pi microSD card because flash memory has a limited number of writes until the card won’t work. It is highly recommended that you change this setting back to the default when you are done compiling and testing the install (see below). To read more about swap sizes corrupting memory, see this page.

Finally, we are now ready to compile OpenCV:

COMMAND-

$ make -j4

The -j4 switch stands for the number of cores to use when compiling OpenCV. Since we are using a Raspberry Pi 3, we’ll leverage all four cores of the processor for a faster compilation.

However, if your make command errors out, I would suggest starting the compilation over again and only using one core.
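That fallback can be written as a single step. The sketch below assumes that "starting over" means running the `make clean` target followed by a plain single-job `make`. The helper name `build_with_fallback` and its optional argument are hypothetical.

```shell
# build_with_fallback [MAKE_CMD]
# Tries a four-job build first. If it fails, cleans the build tree and
# rebuilds with a single job. MAKE_CMD defaults to "make".
build_with_fallback() {
    make_cmd="${1:-make}"
    "$make_cmd" -j4 || { "$make_cmd" clean && "$make_cmd"; }
}

# Usage from the build directory (not executed here):
#   build_with_fallback
```

A single-core build takes noticeably longer, but it avoids the memory pressure that usually causes the parallel build to fail.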

Read More Info..

Octopod: Smart IoT Home/Industry Automation Project