In this post, you will learn how to build a Raspberry Pi pan-tilt face tracker using OpenCV. The code works for both known and unknown faces. For known faces, we have to train the recognizer first.

To control the servos, I used the pigpio module instead of the more commonly used RPi.GPIO library, because the servos jittered when I drove them with RPi.GPIO.

With pigpio, the servos move smoothly and there is no jittering.
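pigpio drives a servo by pulse width in microseconds rather than by angle. As a rough illustration, the sketch below converts an angle to a pulse width, assuming the 500 to 2500 range that this post later equates with 0 to 180 degrees. The helper name angle_to_pulsewidth and the two constants are hypothetical and not part of the project code; your servos may need a narrower range.

```python
# Hypothetical helper, not part of the original project code.
MIN_PULSE_US = 500    # pulse width treated as 0 degrees (assumption taken from the post)
MAX_PULSE_US = 2500   # pulse width treated as 180 degrees (assumption taken from the post)


def angle_to_pulsewidth(angle_deg):
    """Map an angle in [0, 180] degrees linearly onto [500, 2500] microseconds."""
    if not 0 <= angle_deg <= 180:
        raise ValueError("angle must be between 0 and 180 degrees")
    span = MAX_PULSE_US - MIN_PULSE_US
    return int(round(MIN_PULSE_US + span * angle_deg / 180))


if __name__ == "__main__":
    for angle in (0, 45, 90, 180):
        print(angle, angle_to_pulsewidth(angle))
    # On a Pi with the pigpio daemon running, the value could be sent with:
    #   import pigpio
    #   pi = pigpio.pi()
    #   pi.set_servo_pulsewidth(2, angle_to_pulsewidth(90))
```

Keeping the conversion in a pure function makes it easy to check on any machine before a servo is connected.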

Required Components

The components required for the Raspberry Pi pan-tilt face tracker using OpenCV are as follows:

- Raspberry Pi (I have used Raspberry Pi 3B+)

- Power supply

- PiCamera (PiCamera V2 is recommended)

- Pan tilt bracket with servos

- Touch Screen

- Keyboard and mouse

Pan Tilt Assembly

To assemble the pan-tilt bracket, watch the following video by Amp Toad.

After assembling it, mount the camera on it using mounting tape.

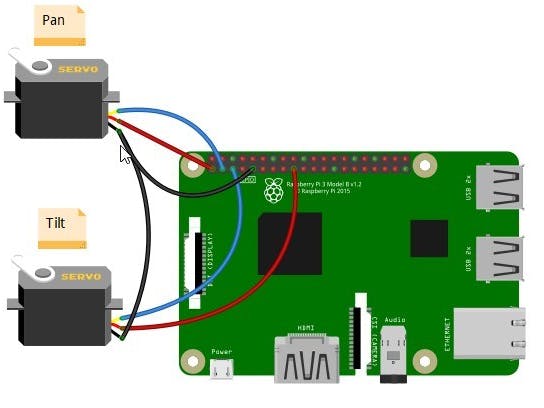

Circuit Diagram

The connections are very simple. Connect the black/brown and red wires of the servos to the GND and 3.3V pins of the Raspberry Pi, respectively. Then connect the yellow wire of the pan servo to GPIO 2 and the yellow wire of the tilt servo to GPIO 3.

Now let’s move on to the code.

Code

The first script tracks known or unknown faces, depending on whether we run it with trained data. To make it work with trained data, we have to train the recognizer, which we will do in the next step.

You can download the project folder from the following link.

RaspberryPiPanTiltFaceTrackingOpenCVDownload

Now let’s write the code.

Pan Tilt Face Tracking code

First of all, we included the packages required for this project.

import cv2
from picamera.array import PiRGBArray
from picamera import PiCamera
import numpy as np
import pickle
import RPi.GPIO as GPIO
import pigpio
from time import sleep
from numpy import interp
import argparse

We parse our command line argument, which is optional. If we pass '-t y' or '--trained y', the script works with trained data (we will write the training code in the next step); otherwise it tracks every face.

args = argparse.ArgumentParser()
args.add_argument('-t', '--trained', default='n')
args = args.parse_args()

if args.trained == 'y':
    recognizer = cv2.face.LBPHFaceRecognizer_create()
    recognizer.read("trainer.yml")
    # The with block closes the file, so no explicit f.close() is needed
    with open('labels', 'rb') as f:
        dicti = pickle.load(f)

In the next lines, we initialize the servo pins and move the servos to their starting position (pulse width 1250). minMov and maxMov set how far the servos pan or tilt in one step, depending on how far the detected or recognized face is from the centre of the frame.

panServo = 2
tiltServo = 3

panPos = 1250
tiltPos = 1250

servo = pigpio.pi()
servo.set_servo_pulsewidth(panServo, panPos)
servo.set_servo_pulsewidth(tiltServo, tiltPos)

minMov = 30
maxMov = 100

Then we initialize the camera object that gives us access to the Pi camera. We set the resolution to (640, 480).

PiRGBArray() gives us a 3-dimensional RGB array organized as (rows, columns, colors) from an unencoded RGB capture. Its advantage is that it reads frames from the Raspberry Pi camera as NumPy arrays, which makes it compatible with OpenCV. It avoids the conversion from JPEG format to OpenCV format, which would slow down our process.

It takes two arguments:

- The camera object

- The resolution

camera = PiCamera()
camera.resolution = (640, 480)
rawCapture = PiRGBArray(camera, size=(640, 480))

Load a cascade file for detecting faces.

faceCascade = cv2.CascadeClassifier("haarcascade_frontalface_default.xml")

After that, we use the capture_continuous function to start reading frames from the Raspberry Pi camera module.

The capture_continuous function takes three arguments:

- rawCapture

- The format in which we want to read each frame. OpenCV expects images in BGR rather than RGB, so we specify the format as BGR.

- The use_video_port boolean. Setting it to true means that we treat the stream as video.

for frame in camera.capture_continuous(rawCapture, format="bgr", use_video_port=True):

Once we have the frame, we can access the raw NumPy array via the .array attribute. We then convert the frame to grayscale.

    frame = frame.array
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)

Then we call the classifier to detect faces in the frame. The first argument is the grayscale image. The second, scaleFactor, specifies how much the image size is reduced at each image scale. The third, minNeighbors, specifies how many neighbors each candidate rectangle should have to be retained. A higher number gives fewer false positives.
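To get a feel for what scaleFactor costs, the sketch below counts roughly how many detection window sizes fit into a 640x480 frame for a given factor. It is only an approximation of what the detector does internally: the 24-pixel base window is an assumption based on the default frontal-face cascade, and count_scales is a hypothetical helper, not an OpenCV function.

```python
def count_scales(scale_factor, frame_size=(640, 480), base_window=24):
    """Count how many window sizes fit in the frame when growing by scale_factor.

    base_window is an assumed starting window size in pixels.
    """
    if scale_factor <= 1:
        raise ValueError("scale_factor must be greater than 1")
    limit = min(frame_size)      # the window must fit the shorter side
    window = float(base_window)
    scales = 0
    while window <= limit:
        scales += 1
        window *= scale_factor   # next, larger window
    return scales


if __name__ == "__main__":
    for factor in (1.1, 1.5):
        print(factor, count_scales(factor))
```

Under these assumptions, a factor of 1.5 visits far fewer scales than 1.1, which is why it runs faster on the Pi but can miss faces whose size falls between two steps.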

    faces = faceCascade.detectMultiScale(gray, scaleFactor=1.5, minNeighbors=5)

If any faces are found, we check whether the code is running with trained data. If it is, we extract the face region and use the recognizer to identify it. The recognizer returns a label ID and a confidence value. We then look up the name assigned to this label ID in the dictionary.

    for (x, y, w, h) in faces:
        if args.trained == "y":
            roiGray = gray[y:y+h, x:x+w]
            id_, conf = recognizer.predict(roiGray)
            conf = int(conf)
            for name, value in dicti.items():
                if value == id_:
                    print(name)
                    break  # stop here so that name stays bound to the matched label

We then check whether we have enough confidence for this face. The value returned by the LBPH recognizer is a distance, so a lower value means a closer match. If it is less than 70, we call the movePanTilt() function.

If the code is not run for trained data, it will just call the movePanTilt() function instead of recognizing the face.
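The lookup and threshold check can also be sketched on their own, without a camera. The example below inverts the name-to-ID dictionary once so that each prediction becomes a single lookup. The names in labels and the helper describe are hypothetical stand-ins; in the project, the dictionary comes from the pickled labels file.

```python
# Hypothetical example data; the real mapping is loaded from the "labels" file.
labels = {"Nerd": 1, "Alice": 2}

# Invert once so that each prediction is a single dictionary lookup.
names_by_id = {label_id: name for name, label_id in labels.items()}

CONF_THRESHOLD = 70  # same cut-off as in the post; lower LBPH values mean a closer match


def describe(label_id, conf):
    """Return the name to display, or None if the match is too weak or the ID is unknown."""
    if conf >= CONF_THRESHOLD:
        return None
    return names_by_id.get(label_id)


if __name__ == "__main__":
    print(describe(1, 42.0))   # strong match for a known ID
    print(describe(2, 85.0))   # too weak, so no name is returned
```

Returning None for weak matches keeps the decision about whether to move the servos in one place.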

            if conf < 70:
                cv2.putText(frame, name + str(conf), (x, y), cv2.FONT_HERSHEY_SIMPLEX, 2, (0, 0, 255), 2, cv2.LINE_AA)
                movePanTilt(x, y, w, h)
        else:
            movePanTilt(x, y, w, h)

In the movePanTilt() function, we draw a rectangle around the face in the original image. Then we check whether the face is at the centre of the frame.

int(x+(w/2)) > 360 means the face is on the right side of the frame, and int(x+(w/2)) < 280 means it is on the left side.

We then calculate how far the pan and tilt servos should move. A face far from the centre makes the servos take a larger step, and a face near the centre a smaller one.

If the new pan and tilt positions are within 0 to 180 degrees (500 = 0 degrees and 2500 = 180 degrees), the servos move to that position; otherwise they stay in their current position.
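The stepping logic described above can be tried out without any hardware. The sketch below is a simplified, pure-Python version for the pan axis only: it replaces numpy's interp with a small hand-written linear interpolation, and the helper names interp_linear, pan_step and apply_step are hypothetical. Unlike the project code, apply_step simply discards a step that would leave the 500 to 2500 range.

```python
def interp_linear(x, x0, x1, y0, y1):
    """Map x linearly from [x0, x1] onto [y0, y1], clamping x to the input range."""
    lo, hi = min(x0, x1), max(x0, x1)
    x = max(lo, min(hi, x))
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def pan_step(center_x, frame_width=640, dead_zone=(280, 360), min_mov=30, max_mov=100):
    """Signed change in pan pulse width for a face centred at center_x."""
    left, right = dead_zone
    if center_x > right:
        # Face on the right: the step grows as the face nears the right edge
        return -int(interp_linear(center_x, right, frame_width, min_mov, max_mov))
    if center_x < left:
        # Face on the left: the step grows as the face nears the left edge
        return int(interp_linear(center_x, left, 0, min_mov, max_mov))
    return 0  # inside the dead zone, so the servo holds still


def apply_step(pos, step, low=500, high=2500):
    """Return the new position, or the old one if the step would leave the valid range."""
    new_pos = pos + step
    return new_pos if low <= new_pos <= high else pos


if __name__ == "__main__":
    pos = 1250
    for cx in (320, 500, 640, 140):
        pos = apply_step(pos, pan_step(cx))
        print(cx, pos)
```

The dead zone between 280 and 360 keeps the servo from hunting back and forth when the face is already close to the centre.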

def movePanTilt(x, y, w, h):
    global panPos
    global tiltPos
    cv2.rectangle(frame, (x, y), (x+w, y+h), (0, 255, 0), 2)
    # numpy.interp needs increasing x-coordinates, so the left and upper
    # ranges are written as (0, 280) and (0, 200) with the outputs reversed
    if int(x+(w/2)) > 360:
        panPos = int(panPos - interp(int(x+(w/2)), (360, 640), (minMov, maxMov)))
    elif int(x+(w/2)) < 280:
        panPos = int(panPos + interp(int(x+(w/2)), (0, 280), (maxMov, minMov)))
    if int(y+(h/2)) > 280:
        tiltPos = int(tiltPos + interp(int(y+(h/2)), (280, 480), (minMov, maxMov)))
    elif int(y+(h/2)) < 200:
        tiltPos = int(tiltPos - interp(int(y+(h/2)), (0, 200), (maxMov, minMov)))
    # Only move a servo when the new pulse width is inside the valid range
    if 500 <= panPos <= 2500:
        servo.set_servo_pulsewidth(panServo, panPos)
    if 500 <= tiltPos <= 2500:
        servo.set_servo_pulsewidth(tiltServo, tiltPos)

The complete code for pan-tilt face tracking is as follows.

# Using pigpio instead of RPi.GPIO for the servos avoids jittering on the Raspberry Pi.
import cv2
from picamera.array import PiRGBArray
from picamera import PiCamera
import numpy as np
import pickle
import RPi.GPIO as GPIO
import pigpio
from time import sleep
from numpy import interp
import argparse

args = argparse.ArgumentParser()
args.add_argument('-t', '--trained', default='n')
args = args.parse_args()

if args.trained == 'y':
    recognizer = cv2.face.LBPHFaceRecognizer_create()
    recognizer.read("trainer.yml")
    with open('labels', 'rb') as f:
        dicti = pickle.load(f)

panServo = 2
tiltServo = 3

panPos = 1250
tiltPos = 1250

servo = pigpio.pi()
servo.set_servo_pulsewidth(panServo, panPos)
servo.set_servo_pulsewidth(tiltServo, tiltPos)

minMov = 30
maxMov = 100

camera = PiCamera()
camera.resolution = (640, 480)
rawCapture = PiRGBArray(camera, size=(640, 480))

faceCascade = cv2.CascadeClassifier("haarcascade_frontalface_default.xml")

def movePanTilt(x, y, w, h):
    global panPos
    global tiltPos
    cv2.rectangle(frame, (x, y), (x+w, y+h), (0, 255, 0), 2)
    # numpy.interp needs increasing x-coordinates, so the left and upper
    # ranges are written as (0, 280) and (0, 200) with the outputs reversed
    if int(x+(w/2)) > 360:
        panPos = int(panPos - interp(int(x+(w/2)), (360, 640), (minMov, maxMov)))
    elif int(x+(w/2)) < 280:
        panPos = int(panPos + interp(int(x+(w/2)), (0, 280), (maxMov, minMov)))
    if int(y+(h/2)) > 280:
        tiltPos = int(tiltPos + interp(int(y+(h/2)), (280, 480), (minMov, maxMov)))
    elif int(y+(h/2)) < 200:
        tiltPos = int(tiltPos - interp(int(y+(h/2)), (0, 200), (maxMov, minMov)))
    # Only move a servo when the new pulse width is inside the valid range
    if 500 <= panPos <= 2500:
        servo.set_servo_pulsewidth(panServo, panPos)
    if 500 <= tiltPos <= 2500:
        servo.set_servo_pulsewidth(tiltServo, tiltPos)

for frame in camera.capture_continuous(rawCapture, format="bgr", use_video_port=True):
    frame = frame.array
    gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
    faces = faceCascade.detectMultiScale(gray, scaleFactor=1.5, minNeighbors=5)
    for (x, y, w, h) in faces:
        if args.trained == "y":
            roiGray = gray[y:y+h, x:x+w]
            id_, conf = recognizer.predict(roiGray)
            conf = int(conf)
            for name, value in dicti.items():
                if value == id_:
                    print(name)
                    break  # stop here so that name stays bound to the matched label
            if conf < 70:
                cv2.putText(frame, name + str(conf), (x, y), cv2.FONT_HERSHEY_SIMPLEX, 2, (0, 0, 255), 2, cv2.LINE_AA)
                movePanTilt(x, y, w, h)
        else:
            movePanTilt(x, y, w, h)

    cv2.imshow('frame', frame)
    key = cv2.waitKey(1)
    # Clear the stream so it is ready for the next frame
    rawCapture.truncate(0)
    if key == 27:  # Esc key
        break

cv2.destroyAllWindows()

Before running the code, you need to start the pigpio daemon.

sudo pigpiod

Type the following command to run it for every face.

python3 facetrack.py

To run it only for known faces, type the following command. You first have to train the recognizer for that face, which we will do in the next step.

python3 facetrack.py -t y

Face Detect Train

The first task is to gather the data on which we will train our recognizer. We will write a Python script that takes 30 images of a face using an OpenCV pre-trained classifier.

OpenCV already contains many pre-trained classifiers for faces, eyes, smiles, etc. The classifier we are going to use detects faces, and its cascade file is available on GitHub.

Save this file in the working directory as “haarcascade_frontalface_default.xml”.

Now let’s write the code. First, we import the required packages.

import os
import cv2
from picamera.array import PiRGBArray
from picamera import PiCamera
import numpy as np
from PIL import Image
import pickle
import argparse

Then we initialize the camera object and set the resolution to (640, 480), as we did in the previous script.

camera = PiCamera()
camera.resolution = (640, 480)
rawCapture = PiRGBArray(camera, size=(640, 480))

faceCascade = cv2.CascadeClassifier("haarcascade_frontalface_default.xml")

We parse our command line argument, which is required. It is the name of the person for whom we are going to train the recognizer. If we pass '-n Nerd' or '--name Nerd', the script creates a directory named Nerd and stores the images in it. If a directory named Nerd already exists and we pass this name again, the script adds more images to that directory.

args = argparse.ArgumentParser()
args.add_argument('-n', '--name', required=True)
args = args.parse_args()

name = args.name
dirName = "./images/" + name
if not os.path.exists(dirName):
    os.makedirs(dirName)
    print("Directory Created")
    count = 1
    maxCount = 30
else:
    print("Name already exists, Adding more images")
    # Continue numbering after the images that are already in the directory
    count = len([entry for entry in os.listdir(dirName) if os.path.isfile(os.path.join(dirName, entry))]) + 1
    maxCount = count + 29

After that, we use the capture_continuous function to start reading frames from the Raspberry Pi camera module. The loop checks whether it has taken 30 face images. If not, it continues taking images.

for frame in camera.capture_continuous(rawCapture, format="bgr", use_video_port=True):
    if count > maxCount:
        break

Then we access the frame and convert it to grayscale. We call the classifier to detect faces in the frame. If a face is detected, we extract the face region and save it in the directory we created at the start of the script.

Now we can train the recognizer on the data we gathered in the previous step.

We will use the LBPH (Local Binary Patterns Histograms) face recognizer included in the OpenCV package. It is created with cv2.face.LBPHFaceRecognizer_create(), the same call used in the tracking code.

We get the path of the directory that contains the script and build the path to the directory where the image directories are stored.

baseDir = os.path.dirname(os.path.abspath(__file__))
imageDir = os.path.join(baseDir, "images")

Then we walk through each image directory and look for images. If an image is found, we convert it into a NumPy array.

After that, we perform face detection again to make sure we have the right images, and then we prepare the training data.

Finally, we store the dictionary that maps the directory names to label IDs.

# labelIds (a dict), currentId (an int), xTrain and yLabels (lists) must be
# initialised before this loop; that part is not shown in this listing
for root, dirs, files in os.walk(imageDir):
    for file in files:
        print('.', end='', flush=True)
        if file.endswith("png") or file.endswith("jpg"):
            path = os.path.join(root, file)
            # The directory name is the person's name
            label = os.path.basename(root)
            if label not in labelIds:
                labelIds[label] = currentId
                currentId += 1
            id_ = labelIds[label]
            # Open as grayscale and convert to a NumPy array
            pilImage = Image.open(path).convert("L")
            imageArray = np.array(pilImage, "uint8")
            faces = faceCascade.detectMultiScale(imageArray, scaleFactor=1.1, minNeighbors=5)
            for (x, y, w, h) in faces:
                roi = imageArray[y:y+h, x:x+w]
                xTrain.append(roi)
                yLabels.append(id_)

with open("labels", "wb") as f:
    pickle.dump(labelIds, f)

Now train the recognizer and save the file.

recognizer.train(xTrain, np.array(yLabels))
recognizer.save("trainer.yml")

This code creates the trainer.yml and labels files that we use in the recognition code.
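The labels file is just a pickled dictionary, so its round trip between the training and tracking scripts can be sketched without a camera or OpenCV. In the example below, the helper names, the example directory names and the starting ID of 1 are assumptions for illustration. The directories are sorted so that the IDs are reproducible, which the os.walk loop in the post does not guarantee.

```python
import os
import pickle
import tempfile


def build_label_ids(image_dir):
    """Assign one integer ID per sub-directory name, in sorted order."""
    label_ids = {}
    next_id = 1  # assumed starting ID
    for entry in sorted(os.listdir(image_dir)):
        if os.path.isdir(os.path.join(image_dir, entry)):
            label_ids[entry] = next_id
            next_id += 1
    return label_ids


def save_labels(label_ids, path):
    """Write the name-to-ID dictionary the way the training script does."""
    with open(path, "wb") as f:
        pickle.dump(label_ids, f)


def load_names_by_id(path):
    """Read the dictionary back and invert it for ID-to-name lookups."""
    with open(path, "rb") as f:
        label_ids = pickle.load(f)
    return {label_id: name for name, label_id in label_ids.items()}


def demo():
    """Build a throwaway images/ tree and run the full round trip."""
    with tempfile.TemporaryDirectory() as tmp:
        image_dir = os.path.join(tmp, "images")
        for person in ("Nerd", "Alice"):  # hypothetical names
            os.makedirs(os.path.join(image_dir, person))
        labels_path = os.path.join(tmp, "labels")
        save_labels(build_label_ids(image_dir), labels_path)
        return load_names_by_id(labels_path)


if __name__ == "__main__":
    print(demo())
```

Because the IDs depend on which directories exist at training time, the recognizer and the labels file should always be regenerated together.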

Type the following command to run the code. The command line argument is the name of the person for whom the recognizer is trained.

python3 faceDetecTrack.py -n Nerd

PCB Design

After making sure everything worked, I designed the PCB in KiCad.