4 GB RAM, 32 GB eMMC Raspberry Pi CM development board with touchscreen and plenty of interfaces.

Story

The last few articles I published were about TinyML with Wio Terminal, a Cortex-M4F based development board with an LCD screen in a sturdy plastic case. Seeed Studio, the company that made Wio Terminal, decided to take this idea a step further and recently announced reTerminal, a Raspberry Pi Compute Module 4 based development board with an LCD screen in a sturdy plastic case.

I got my hands on one of the reTerminals and made a brief unboxing video, which also includes a few demos and an explanation of possible use cases for the device. This article is meant as a supplement to the video, expanding on how to set up the environment and run the machine learning demos.

Specifications

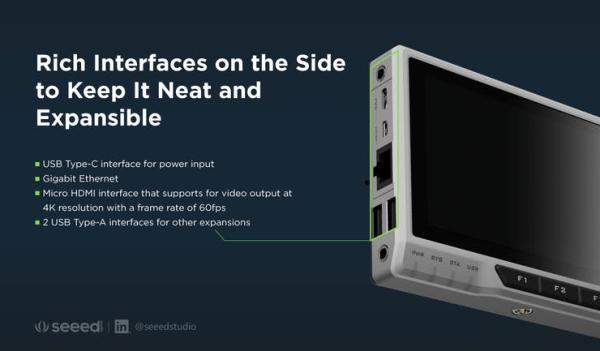

reTerminal is powered by a Raspberry Pi Compute Module 4 (CM4) with a quad-core Cortex-A72 CPU running at 1.5 GHz. The CM4 version used in reTerminal has 4 GB of RAM and 32 GB of eMMC storage, which shortens boot-up times and gives a smoother overall user experience. Peripherals-wise, there is a 5-inch IPS capacitive multi-touch screen with a resolution of 1280 x 720, an accelerometer, an RTC module, a buzzer, 4 buttons, 4 LEDs and a light sensor. For connectivity, the new board has dual-band 2.4 GHz/5 GHz Wi-Fi and Bluetooth 5.0 with BLE, plus a Gigabit Ethernet port on the side.

reTerminal can be powered by a 5V/2A supply of the kind used with Raspberry Pi 4; however, the official description recommends a 4A power supply, especially when you connect more peripherals. For the demos I used a run-of-the-mill, no-name 5V/2A wall plug and didn't get any under-voltage warnings. Having said that, if in doubt, use 5V/4A.
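If you want to check for under-voltage yourself rather than wait for a warning, the Raspberry Pi firmware reports it through `vcgencmd get_throttled`. The following is a minimal sketch, assuming `vcgencmd` is available on the reTerminal image; the function names are hypothetical helpers, not part of any Seeed or Raspberry Pi library.

```python
import subprocess

# Bit positions in the value reported by `vcgencmd get_throttled`.
THROTTLE_FLAGS = {
    0: "under-voltage detected now",
    1: "ARM frequency capped now",
    2: "currently throttled",
    3: "soft temperature limit active",
    16: "under-voltage has occurred since boot",
    17: "ARM frequency capping has occurred since boot",
    18: "throttling has occurred since boot",
    19: "soft temperature limit has occurred since boot",
}


def decode_throttled(output):
    """Turn a line such as 'throttled=0x50005' into readable flag names."""
    value = int(output.strip().split("=", 1)[1], 16)
    return [name for bit, name in sorted(THROTTLE_FLAGS.items())
            if value & (1 << bit)]


def read_throttled():
    """Query the firmware (hypothetical helper; only works on a Raspberry Pi)."""
    result = subprocess.run(["vcgencmd", "get_throttled"],
                            capture_output=True, text=True, check=True)
    return decode_throttled(result.stdout)
```

On the device, calling `read_throttled()` after running a demo should return an empty list if the power supply kept up; any "under-voltage" entry is a hint to switch to a stronger supply.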

By default, reTerminals ship with 32-bit Raspbian OS pre-installed, along with the device drivers. However, since a 64-bit OS can give a significant boost for machine learning applications, Seeed Studio will also provide a 64-bit version of the Raspbian OS image with reTerminal-specific drivers pre-installed.

An on-screen keyboard and a simple Qt 5 demo are included as well. The touchscreen is responsive, but since Raspbian OS is not a mobile operating system and is not optimized for touchscreens, pressing smaller UI elements can sometimes be a bit troublesome. Having a stylus helps a lot.

The on-screen keyboard pops up when you need to type text and disappears afterwards. You can modify that behavior in the settings. So it is possible to use reTerminal as a portable Raspberry Pi, although for that you might want to have a look at another OS, for example Ubuntu Touch, which works with Raspberry Pi 4 but is currently in beta and highly experimental.

The main use case for reTerminal is displaying user interfaces made with Qt, LVGL or Flutter. Let's launch a sample Qt application that shows device specifications and parameters, data from sensors and an example control board for an imaginary factory. When interface elements are large, the touchscreen is much more pleasant to use.
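To see which variant a given image is actually running, you can compare the kernel architecture with the pointer size of the Python interpreter. This is a small sketch using only the standard library; `describe_bitness` is a hypothetical helper name.

```python
import platform
import struct


def describe_bitness():
    """Report the kernel architecture and the userland pointer width."""
    return {
        # e.g. 'armv7l' on a 32-bit kernel, 'aarch64' on a 64-bit kernel
        "kernel_machine": platform.machine(),
        # size of a C pointer in this Python build: 32 or 64
        "userland_bits": struct.calcsize("P") * 8,
    }


print(describe_bitness())
```

The two values can disagree: a 64-bit kernel can run a 32-bit userland, in which case Python packages are still 32-bit builds.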

Edge Impulse Object Detection

We're going to make use of the latest feature of the Edge Impulse development platform: Linux deployment support. We can easily train an object detection model by collecting samples with a camera connected to reTerminal, training in the cloud, and then automatically downloading and running the trained model with edge-impulse-linux-runner.

The installation procedure for the Edge Impulse CLI is described in the documentation. It all comes down to a few simple steps:

curl -sL https://deb.nodesource.com/setup_12.x | sudo bash -

sudo apt install -y gcc g++ make build-essential nodejs sox gstreamer1.0-tools gstreamer1.0-plugins-good gstreamer1.0-plugins-base gstreamer1.0-plugins-base-apps

npm config set user root && sudo npm install edge-impulse-linux -g --unsafe-perm

After the Edge Impulse CLI is installed, make sure you have a camera connected. I used a simple USB web camera; if you use a Raspberry Pi camera, remember to enable it in raspi-config.
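A quick way to confirm that Linux sees the camera is to look for Video4Linux device nodes. The sketch below assumes the usual `/dev/video*` naming; `list_video_devices` is a hypothetical helper, not an Edge Impulse function.

```python
import glob


def list_video_devices(pattern="/dev/video*"):
    """Return the video device nodes currently present, sorted by name."""
    return sorted(glob.glob(pattern))


devices = list_video_devices()
print(devices if devices else "No camera device nodes found")
```

Note that a single USB webcam often shows up as more than one node, so seeing two entries does not necessarily mean two cameras.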

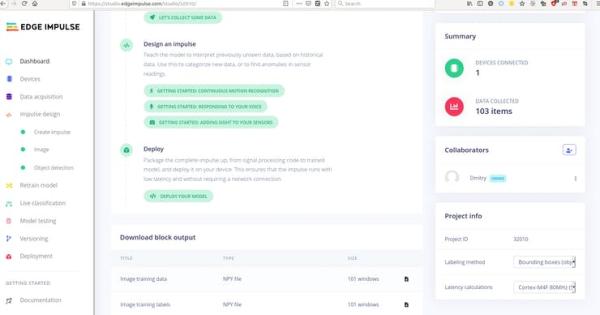

Before you start collecting data for object detection, make sure that in the Dashboard, under 'Project info > Labeling method', 'Bounding boxes (object detection)' is selected.

Take at least 100 images for each class you want to recognize. Currently you can also upload your own images by clicking Show options > Upload data in the Data Acquisition tab. However, it's not yet possible to upload bounding box annotations, so the images you upload will still need to be labeled manually. After you have enough images annotated, go to Create Impulse, choose Image for the processing block and Object Detection (Images) for the learning block.

The number of images one user can collect and annotate is not nearly enough to train a large network from scratch; that's why we fine-tune a pre-trained model to detect new classes of objects. In most cases you can leave the default values for the number of epochs, learning rate and confidence. Object detection uses custom code, so we cannot tweak it in Expert mode, as is possible with simpler models.

Training is done on the CPU, so it takes a bit of time, depending on the number of images in your dataset. Have a cup of your favorite beverage while you wait.
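If you keep your own record of which classes appear in which image, it is easy to check the 100-images-per-class guideline before training. This is a sketch under that assumption; the dictionary layout and the helper name are hypothetical and not an Edge Impulse export format.

```python
from collections import Counter

MIN_IMAGES_PER_CLASS = 100  # guideline from the text above


def classes_needing_more_images(image_labels, minimum=MIN_IMAGES_PER_CLASS):
    """Map each under-represented class to the number of images still missing.

    image_labels: dict of image filename -> list of class labels in that image.
    """
    counts = Counter()
    for labels in image_labels.values():
        counts.update(set(labels))  # count an image once per class
    return {cls: minimum - n for cls, n in counts.items() if n < minimum}


# Example with made-up filenames: 100 images of cups, 40 of pens.
dataset = {f"cup_{i}.jpg": ["cup"] for i in range(100)}
dataset.update({f"pen_{i}.jpg": ["pen", "pen"] for i in range(40)})
print(classes_needing_more_images(dataset))
```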

One of the best things about the newly added Linux support in Edge Impulse is the edge-impulse-linux-runner. When model training is finished and you're satisfied with the accuracy on the validation dataset (which is automatically split from the training data), you can test the model in Live classification and then go on to deploy it on the device. In this case it is as simple as running

edge-impulse-linux-runner

in the terminal. The model will be automatically downloaded and prepared, and the inference results will be displayed in the browser. You will see a line in your terminal similar to:

Want to see a feed of the camera and live classification in your browser? Go to http://192.168.1.19:4912

Click on the link in your terminal to see the camera live view.
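If you run the runner over SSH and capture its output, the address can be pulled out of that line with a regular expression. This is only a sketch: it assumes the message contains a plain http URL as in the sample line above, and `extract_preview_url` is a hypothetical helper.

```python
import re

URL_PATTERN = re.compile(r"https?://\S+")


def extract_preview_url(line):
    """Return the first URL found in a line of runner output, or None."""
    match = URL_PATTERN.search(line)
    return match.group(0) if match else None


sample = ("Want to see a feed of the camera and live classification "
          "in your browser? Go to http://192.168.1.19:4912")
print(extract_preview_url(sample))
```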

The backbone model used for transfer learning is MobileNetV2 SSD, which is quite large, so even with all the optimizations inference takes roughly 400 ms per frame, or about 2 to 2.5 FPS. The video stream looks quite responsive, but that's because inference is not performed on every frame. You can see this clearly when a detected object disappears from the image: its bounding box stays on screen for some time. Since Linux support is a very new feature in Edge Impulse, I'm sure it will receive a lot of improvements in the near future, allowing for faster inference and upload of user-annotated data.
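The lingering bounding box is easy to reproduce with a simplified model of the display loop. The sketch below is not the runner's actual implementation; it assumes a 30 FPS camera (my assumption), a 400 ms inference time, and a display that simply reuses the most recent detection until a new one arrives.

```python
import math


def fps_from_latency_ms(latency_ms):
    """Convert per-frame inference time in milliseconds to frames per second."""
    return 1000.0 / latency_ms


def overlay_boxes(num_frames, camera_fps, inference_ms, detect):
    """Return the detections drawn on each displayed frame."""
    # Number of camera frames that pass while one inference is running.
    frames_per_inference = max(1, math.ceil(inference_ms * camera_fps / 1000))
    last_boxes = []
    shown = []
    for frame_index in range(num_frames):
        if frame_index % frames_per_inference == 0:
            last_boxes = detect(frame_index)  # fresh inference result
        shown.append(last_boxes)  # in between, the stale result is reused
    return shown


# A cup is visible only in frames 0 to 5, then leaves the picture.
shown = overlay_boxes(24, camera_fps=30, inference_ms=400,
                      detect=lambda i: ["cup"] if i < 6 else [])
print(fps_from_latency_ms(400))  # inference rate in FPS
print(shown[11], shown[12])      # box still drawn at frame 11, gone at 12
```

In this model the box stays on screen for frames 6 to 11 even though the cup is already gone, which matches what you see in the browser preview.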

ARM NN Accelerated Inference

While we know that Raspberry Pi 4 is not the best board for machine learning inference, since it doesn't have a hardware accelerator for it, we can still achieve faster than real-time inference speed by

a) using a smaller model

b) making sure we utilize all 4 cores and Single Instruction Multiple Data (SIMD) instructions, where multiple processing elements in the pipeline perform operations on multiple data points simultaneously, available through the Neon architecture extension for Arm processors.
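To make the SIMD idea concrete, here is a toy emulation in plain Python. It does not use Neon and is not faster than a normal loop; it only counts how many "instructions" are needed when each one handles four values at a time, which is how many 32-bit floats fit into one 128-bit Neon register. The function name is hypothetical.

```python
LANES = 4  # a 128-bit Neon register holds four 32-bit floats


def simd_style_add(a, b, lanes=LANES):
    """Add two equal-length lists, counting one step per group of `lanes`."""
    result = []
    steps = 0
    for start in range(0, len(a), lanes):
        # One emulated SIMD instruction processes up to `lanes` element pairs.
        chunk_a = a[start:start + lanes]
        chunk_b = b[start:start + lanes]
        result.extend(x + y for x, y in zip(chunk_a, chunk_b))
        steps += 1
    return result, steps


values, steps = simd_style_add(list(range(16)), [1] * 16)
print(values, steps)
```

For 16 elements the emulation needs 4 steps instead of 16 scalar additions. Spreading such work over the 4 cores multiplies the benefit again, which is what optimized inference libraries do internally.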

Source: reTerminal Machine Learning Demos (Edge Impulse and Arm NN)