FIRST: I used a translator to help me because I'm not fluent in English, so I apologize for any bad English. My intention really is to collaborate.

SECOND: Thanks to you, I won an award in the “MICROCONTROLLER CONTEST SPONSORED BY RADIOSHACK”!!! Thank you very much!!!! I'm here to explain anything I can about this project! Thank you, and let's learn! :D

This project describes the creation of a mobile robot that uses a computer vision system for guidance. The main objective is to demonstrate that a robot can use artificial vision to interact with its external environment, using a feature such as shape, color or texture. This feature serves as the metric that determines the movement of the whole robot.

Robotics is a field of research that is becoming crucial in supporting human activities, through the development of robots that offer reliability, range, speed and safety in the applications where they are used. In most of these applications, the robot interprets the outside environment through perception, that is, by recognizing information with artificial receptors. This gives the system a sensing element that can recognize a characteristic such as color, shape or texture through a computer vision system.

If you want to learn a little about how this robot was built, from the choice of technologies to the motor drive, stay with me and let's work together!

Enjoy!

Step 1: What is my idea?

The idea for this project came from a question: “Can a computer see and perform a task on its own?” With this in mind, I turned to an area I had been researching for some time, robotics, and formulated a more complete question: “Can a mobile robot move around independently using artificial vision?” And another: “How will I know what is happening, and whether the robot is performing its functions as planned?” SIMPLE! I thought of creating an interface to access the Raspberry Pi remotely and view everything that is happening quickly, without much computational cost. That was a good catch! These were the questions I worked out before anything else, and they started all of my research for this project.

I learned that in computer vision, color segmentation has a very low computational cost, so I chose this task. It can also be implemented in many programming languages, and, depending on the platform, these languages support robotic resources. After that, it was only a matter of choosing the key technologies on which the whole system would be built, and then: get to work!

Step 2: Main technologies of the project

Before starting the project, it was necessary to know and learn about the key technologies that were chosen:

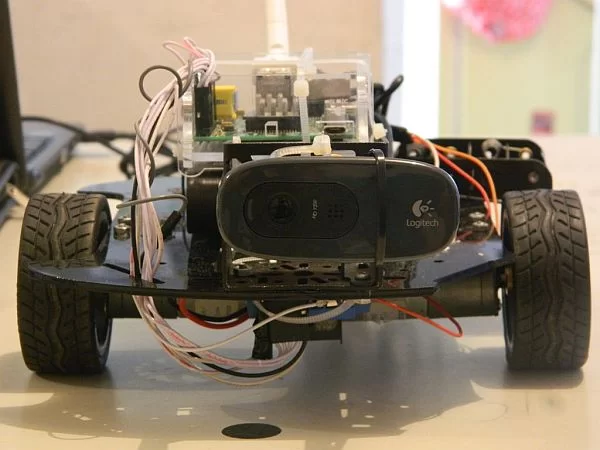

- Raspberry Pi: we studied ways to run computer vision on a small-scale computer that could fit on a robotic chassis, and chose the Raspberry Pi Model B microcomputer. The Raspberry Pi has an acceptable cost, small size and suitable specifications (clock, CPU, RAM, Ethernet and others). It supports peripherals through its USB ports and can integrate resources such as actuators and sensors through a set of external connections called GPIO, which includes digital input/output pins, UART, I2C, SPI, audio, 3.3 V, 5 V and GND.

- OpenCV: OpenCV is the computer vision library chosen. It is responsible for recognizing the object through a characteristic such as color, texture or shape. This is where the operations, functions and features that capture the image and process the information of interest are performed, directly supporting the other parts of the system that make decisions.

- Python: the source code was written in Python. Python 2 was chosen for the system because of its compatibility with the Raspberry Pi and the OpenCV library. Python is a high-level language with great usability, high-level data types (integer, boolean), block structure defined by indentation, and libraries that support the development of the project, such as NumPy, Pygame, Matplotlib and SciPy. On the Raspberry Pi, Python version 3.2.3 is available along with the IDLE 3 development environment, where the scripts use the computer vision library to perceive the environment and extract the information used by the decision-making system. The script contains the settings, functions and libraries used for this processing.

With these technologies chosen, it was possible to start developing a project that could …

Step 3: What is a Computer Vision System?

The goal of computer vision is to give artificial systems, such as computers, the ability to sense the external environment, understand it, take appropriate actions or decisions, and learn from this experience so they can improve their future performance.

An artificial vision system mirrors the natural vision system. In nature, vision and learning make it possible, for example, to track certain targets such as predators, food, and even objects that may be in an individual's path. Likewise, a major purpose of an image is to inform the viewer of its content, allowing it to make decisions. An important subtask in a computer vision system is image segmentation: the process of partitioning an image into a set of meaningful regions, or segments, by grouping pixels that share a common characteristic such as color, edges or shape.
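Grouping pixels that share a characteristic into regions can be sketched as connected-component labeling on a binary image. This minimal, library-free version assumes 4-connectivity (up, down, left, right) and a mask given as a list of rows; the function name `label_regions` is hypothetical. In OpenCV, `cv2.findContours` serves a similar purpose of separating regions.

```python
from collections import deque


def label_regions(mask):
    """Label 4-connected regions of nonzero pixels in a binary mask.

    Returns (labels, count): labels has the same shape as mask, with 0 for
    background and 1..count identifying each region.
    """
    height = len(mask)
    width = len(mask[0]) if height else 0
    labels = [[0] * width for _ in range(height)]
    count = 0
    for y in range(height):
        for x in range(width):
            if mask[y][x] and not labels[y][x]:
                # Found an unlabeled foreground pixel: start a new region.
                count += 1
                labels[y][x] = count
                queue = deque([(y, x)])
                # Breadth-first flood fill over the 4 direct neighbours.
                while queue:
                    cy, cx = queue.popleft()
                    for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                        if (0 <= ny < height and 0 <= nx < width
                                and mask[ny][nx] and not labels[ny][nx]):
                            labels[ny][nx] = count
                            queue.append((ny, nx))
    return labels, count
```

For example, the mask `[[1, 1, 0], [0, 0, 0], [0, 1, 1]]` contains two separate regions, so `count` is 2.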

Color segmentation uses color to classify areas of the image, separating objects that do not share the same color. A computer vision system commonly aims to reconstruct the external environment, and specifically its objects, where the first goal is to locate the object with certainty and reliability. The color of the object is a feature that can separate different areas and then enable the use of a tracking module. That is why this feature was chosen to be studied and implemented.
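The color segmentation step can be illustrated without any libraries. The sketch below works on a tiny image whose pixels are already (H, S, V) tuples in OpenCV's ranges (H from 0 to 179, S and V from 0 to 255). It builds a binary mask of the pixels inside a color range and then computes the area and centroid of the mask from its image moments. The blue range, the test image and the function names are hypothetical. With OpenCV itself, the equivalent steps would likely be `cv2.cvtColor(frame, cv2.COLOR_BGR2HSV)`, `cv2.inRange(hsv, lower, upper)` and `cv2.moments(mask)`.

```python
def in_range(hsv_image, lower, upper):
    """Binary mask: 255 where every channel lies in [lower, upper] (inclusive), else 0."""
    return [
        [255 if all(lo <= c <= hi for c, lo, hi in zip(px, lower, upper)) else 0
         for px in row]
        for row in hsv_image
    ]


def centroid(mask):
    """Return (cx, cy, area) of the nonzero mask pixels, or None if the mask is empty.

    area is the zeroth moment m00; cx = m10 / m00 and cy = m01 / m00.
    """
    m00 = m10 = m01 = 0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value:
                m00 += 1
                m10 += x
                m01 += y
    if m00 == 0:
        return None
    return float(m10) / m00, float(m01) / m00, m00


# Hypothetical 3x4 test image: a 2x2 blue patch on a gray background.
GRAY = (0, 0, 128)
BLUE = (120, 200, 200)
image = [
    [GRAY, GRAY, GRAY, GRAY],
    [GRAY, BLUE, BLUE, GRAY],
    [GRAY, BLUE, BLUE, GRAY],
]
mask = in_range(image, lower=(100, 100, 100), upper=(130, 255, 255))
print(centroid(mask))  # (1.5, 1.5, 4)
```

The centroid gives the object's position in the image and the area gives a rough idea of its distance, which is the kind of information a tracking module can act on. Note that red wraps around H = 0/179 in this representation, so it would usually need two ranges.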

Let's gooooooooo learnnnnnnnnn!

Step 4: Materials used in the project

I used some parts that I already had and acquired others to finish the project:

– Raspberry Pi Model B + 16GB SD card class 10

– 2 DC Motors – 6V

– Robotic chassis (old)

– H-bridge (L298N model) for controlling up to 2 motors

– EDUP Wireless adapter (ralink chipset)

– Power bank (1 A / 15,000 mAh)

– Logitech C270 HD Camera

– 9g Servo Motor

– Steel pan-tilt bracket

– Jumpers (M-M, M-F and F-F)

– 4 AA 1.5 V batteries (6k mAh)

– Plastic cable ties

– Heat sinks and thermal paste

– A lot of persistence and patience xD
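The Raspberry Pi's GPIO digital outputs are what drive the L298N H-bridge listed above. Below is a minimal sketch, not the project's actual wiring, of how motion commands could map to the driver's four input pins (IN1 to IN4). It assumes the left motor is on IN1/IN2 and the right motor on IN3/IN4, that both enable pins (ENA/ENB) are held high, and that level (1, 0) turns a wheel forward; the names `COMMANDS` and `l298n_levels` are hypothetical. On the Pi, each level would then be written to a GPIO pin, for example with RPi.GPIO's `GPIO.output(pin, level)`.

```python
# Logic levels (IN_a, IN_b) for one motor channel of the L298N.
# Assumption: (1, 0) turns the wheel forward with this wiring; swap the
# tuples if a wheel spins the wrong way. Equal levels stop the motor.
FORWARD = (1, 0)
BACKWARD = (0, 1)
STOP = (0, 0)

# Hypothetical command table: (left motor on IN1/IN2, right motor on IN3/IN4).
COMMANDS = {
    "forward": (FORWARD, FORWARD),
    "backward": (BACKWARD, BACKWARD),
    "left": (BACKWARD, FORWARD),   # spin in place to the left
    "right": (FORWARD, BACKWARD),  # spin in place to the right
    "stop": (STOP, STOP),
}


def l298n_levels(command):
    """Return the (IN1, IN2, IN3, IN4) logic levels for a motion command."""
    left, right = COMMANDS[command]
    return left + right


print(l298n_levels("forward"))  # (1, 0, 1, 0)
```

Turning here spins the robot in place by driving the wheels in opposite directions; stopping one wheel instead would give a wider turn.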

Step 5: Starting the Raspberry Pi

There are several operating systems geared toward the Raspberry Pi, such as Pidora, Raspbian and Arch Linux, as well as NOOBS, an installer that offers several of them. Since the Raspberry Pi has no ROM for storing and installing an OS, a bootable SD card with the operating system on it is required. The card's transfer speed directly affects the performance of the operating system, so cards with a write speed above 2 MB/s are preferred. The card used here is a class 10 SDHC card that can reach 45 MB/s.
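SD speed classes state a minimum sustained write speed: Class 2 guarantees 2 MB/s and Class 10 guarantees 10 MB/s. A quick calculation shows why card speed matters, for example when writing the OS image to the card. The 2000 MB image size below is a hypothetical figure, not the actual size of the Raspbian image.

```python
# Minimum sustained write speed (MB/s) guaranteed by each SD speed class.
SPEED_CLASS_MB_S = {2: 2, 4: 4, 6: 6, 10: 10}


def write_time_seconds(size_mb, speed_mb_s):
    """Time in seconds to write size_mb megabytes at speed_mb_s MB/s."""
    return size_mb / float(speed_mb_s)


IMAGE_MB = 2000  # hypothetical image size
print(write_time_seconds(IMAGE_MB, SPEED_CLASS_MB_S[2]))   # 1000.0 (worst case, Class 2)
print(write_time_seconds(IMAGE_MB, SPEED_CLASS_MB_S[10]))  # 200.0 (worst case, Class 10)
print(round(write_time_seconds(IMAGE_MB, 45), 1))          # 44.4 (at the card's 45 MB/s)
```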

The operating system chosen for this project is RASPBIAN – Debian Wheezy. It comes with over 35,000 packages of precompiled software bundled in a convenient format for easy installation on the Raspberry Pi, and it can be downloaded from the official site.

Click here for more information about setting up and preparing the SD card.

Click here for information on the first-time configuration of the Raspberry Pi.

- THE CAMERA

The webcam chosen is detected automatically when plugged into a USB port on the Raspberry Pi; the lsusb command lists it among the detected peripherals. To view the webcam, streaming software is required; streaming is a way of transmitting multimedia data as packets that are temporarily buffered on the Raspberry Pi. The streaming software installed on the Raspberry Pi was mjpg-streamer, using the commands on this site.
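mjpg-streamer serves the webcam as a stream of JPEG images. A client that wants the individual frames (for example, to process them on another computer) can split the raw bytes on the JPEG start-of-image (0xFFD8) and end-of-image (0xFFD9) markers. The sketch below is a simplified, hypothetical helper; it assumes the frames contain no embedded thumbnails, whose own end marker would confuse this scan.

```python
SOI = b"\xff\xd8"  # JPEG start-of-image marker
EOI = b"\xff\xd9"  # JPEG end-of-image marker


def extract_jpeg_frames(buffer):
    """Split a chunk of stream bytes into complete JPEG frames.

    Returns (frames, remainder). The remainder holds an incomplete frame (or a
    trailing byte that may be the start of a marker) and should be prepended
    to the next chunk read from the stream.
    """
    frames = []
    while True:
        start = buffer.find(SOI)
        if start == -1:
            # No frame start: keep only the last byte, in case it is 0xFF.
            return frames, buffer[-1:]
        end = buffer.find(EOI, start + 2)
        if end == -1:
            # Frame has started but not finished yet.
            return frames, buffer[start:]
        frames.append(buffer[start:end + 2])
        buffer = buffer[end + 2:]
```

Each returned frame is a complete JPEG file; multipart headers and boundaries between frames are skipped because they fall outside the markers.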

Now that some of the Raspberry Pi settings are in place, let's take a closer look at the communication used.

Step 6: System Communication

The system can be connected in two ways: wired (the 10/100 Mbit/s Ethernet LAN port) or wireless (through a USB wireless adapter). Wireless communication was chosen to give the system mobility. Before installing the wireless adapter, you need to know some network information: the Service Set Identifier (SSID), which is the string of characters that identifies a wireless network; the type of encryption the network uses; and the type of wireless connection. For the adapter to be recognized by the system and work properly, you must also install its firmware, a software package that depends on the model of the adapter's internal chip.
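The SSID and encryption type are the values that end up in the wireless configuration. As a hedged sketch, assuming a WPA/WPA2-PSK network configured through wpa_supplicant (on Raspbian usually in /etc/wpa_supplicant/wpa_supplicant.conf), this hypothetical helper renders the corresponding network block. The SSID and passphrase are placeholders.

```python
def wpa_network_block(ssid, passphrase, key_mgmt="WPA-PSK"):
    """Render a wpa_supplicant.conf 'network' block for a WPA/WPA2-PSK network.

    Assumption: ssid and passphrase contain no double quotes (they are not escaped).
    """
    if not 8 <= len(passphrase) <= 63:
        raise ValueError("WPA passphrases must be 8 to 63 characters long")
    return (
        "network={\n"
        '    ssid="%s"\n'
        '    psk="%s"\n'
        "    key_mgmt=%s\n"
        "}\n"
    ) % (ssid, passphrase, key_mgmt)


print(wpa_network_block("MyNetwork", "secretpass"))
```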

The choice of adapter was based on the list of wireless adapters compatible with the Raspberry Pi on the official website. The adapter chosen was the EDUP model with a Ralink 5370 chip and a 2 dBi antenna, because it offers reasonable range to the access point and is easy to install.

To communicate remotely, in order to access information, upload and download files and run the necessary tests, all computers must belong to the same network, that is, both computers must be connected to the same access point. The following communication protocols were used for these tasks:
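On a typical home network with the netmask 255.255.255.0, being on the same network means the first three numbers of both IP addresses match. This sketch checks that condition with Python 3's standard `ipaddress` module; the addresses and the function name `same_subnet` are hypothetical.

```python
import ipaddress


def same_subnet(ip_a, ip_b, netmask="255.255.255.0"):
    """Return True if ip_b lies in the subnet that ip_a belongs to under netmask."""
    # strict=False lets us pass a host address and get its enclosing network.
    network = ipaddress.ip_network(u"%s/%s" % (ip_a, netmask), strict=False)
    return ipaddress.ip_address(u"%s" % ip_b) in network


print(same_subnet("192.168.1.10", "192.168.1.57"))  # True
print(same_subnet("192.168.1.10", "192.168.2.57"))  # False
```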

- GUI – GRAPHICAL USER INTERFACE

Remote access to the Raspberry Pi's graphical interface requires an RDP or VNC protocol and a good-quality connection.

- Access via VNC protocol: you need to install a VNC viewer (UVCViewer) on your computer; on the Raspberry Pi, TightVNC, a free suite of remote control software, was installed.

Server installation: sudo apt-get install tightvncserver

Startup: tightvncserver

Create a default session: vncserver :1 -geometry 1024x768 -depth 24

- Access via RDP protocol: you need to install an RDP client (RDPDesk) on your computer; on the Raspberry Pi, the XRDP server was installed, and it starts automatically at boot.

Server installation: sudo apt-get install xrdp
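Both GUI protocols listen on well-known TCP ports, which is what the remote client connects to: a VNC server's display :N uses port 5900 + N (so the :1 session uses port 5901), and xrdp uses the standard RDP port 3389. A small hypothetical helper that encodes this:

```python
VNC_BASE_PORT = 5900  # VNC display :N listens on TCP port 5900 + N
RDP_PORT = 3389       # standard RDP port, used by xrdp


def gui_port(protocol, display=1):
    """Return the TCP port a client should connect to for 'vnc' or 'rdp'."""
    if protocol == "vnc":
        return VNC_BASE_PORT + display
    if protocol == "rdp":
        return RDP_PORT
    raise ValueError("unknown protocol: %r" % protocol)


print(gui_port("vnc", 1))  # 5901
print(gui_port("rdp"))     # 3389
```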

- COMMAND LINE

Command-line access was needed for maintenance, upgrades and running scripts, which is quite practical. On the remote machine, PuTTY was installed; it opens an SSH session to the Raspberry Pi using its IP address. The SSH service only needs to be installed once on the Raspberry Pi.

Server installation: sudo apt-get install ssh
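Before opening PuTTY, it can help to confirm that the SSH server on the Raspberry Pi is reachable; SSH listens on TCP port 22 by default. This minimal sketch simply attempts a TCP connection with the standard `socket` module; the function name is hypothetical.

```python
import socket


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        conn = socket.create_connection((host, port), timeout=timeout)
    except (socket.error, socket.timeout):
        # Refused, unreachable or timed out.
        return False
    conn.close()
    return True
```

For example, `is_port_open("192.168.1.10", 22)` (a hypothetical Pi address) should return True once the SSH service is running and both machines are on the same network. The same check works for the VNC and RDP ports.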

- FILE TRANSFER

None of the previous protocols is suited to transferring files directly, so FTP was used. FTP is the standard TCP/IP protocol for transferring files and is independent of operating system and hardware. It was essential for analyzing scripts and exchanging data with the Raspberry Pi: on the remote computer, WinSCP was used together with the Raspberry Pi's IP address to exchange files.

After configuring communication, the work of creating the computer vision scripts and integrating the robotic resources began.

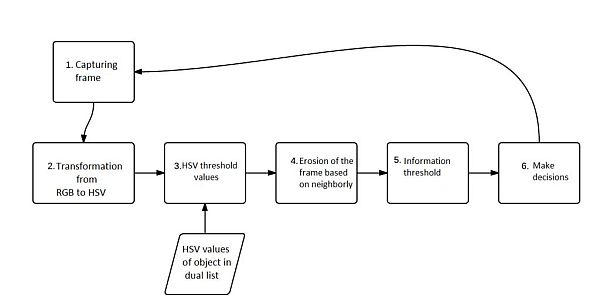

Step 7: Creating and configuring the Computer Vision System (CVS)

All video processing operations are performed with the OpenCV library, and the information extracted from this processing is used to make decisions for the robotic platform and the other components that make the system dynamic. The version used is OpenCV-2.2.8; examples and all of its functions are available in versions for Windows, Mac and Linux.
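The decision step can be sketched as a mapping from the target's position in the frame to a motion command. This is a hypothetical policy, not the project's actual logic: it takes the target as (cx, cy, area) or None, steers toward the target when it is off-center by more than a dead band, stops when the target looks large enough (close), and searches when no target is visible. The names `decide`, `dead_band` and `stop_area` are assumptions.

```python
def decide(target, frame_width, dead_band=0.15, stop_area=None):
    """Map a detected target to a motion command.

    target: (cx, cy, area) of the segmented object, or None if nothing was found.
    Returns one of "search", "stop", "left", "right", "forward".
    """
    if target is None:
        return "search"
    cx, _cy, area = target
    if stop_area is not None and area >= stop_area:
        return "stop"  # object fills enough of the frame: assume it is close
    half = frame_width / 2.0
    offset = (cx - half) / half  # -1 at the left edge, +1 at the right edge
    if offset < -dead_band:
        return "left"
    if offset > dead_band:
        return "right"
    return "forward"


print(decide((20, 120, 50), 320))  # left
```

The dead band keeps the robot from oscillating left and right when the object is already roughly centered.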

It was installed with the following commands:

Update the system: sudo apt-get update

Install updates: sudo apt-get upgrade

Install the OpenCV library: sudo apt-get install python-opencv
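A quick way to confirm that the Python bindings were installed is to import `cv2` and read its version string. This small hypothetical helper returns None instead of failing when the module is missing; run it with the same Python interpreter that will run the robot scripts.

```python
def opencv_version():
    """Return the installed OpenCV version string, or None if cv2 cannot be imported."""
    try:
        import cv2
    except ImportError:
        return None
    return cv2.__version__


print(opencv_version())
```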

For more detail: The RR.O.P. – RaspRobot OpenCV Project