Introduction

We sincerely hope our product will help you. Our clients' main requirements are detecting fire and transmitting the images/videos to the ground station wirelessly. Moreover, both functions must operate in real time.

We are pleased that you have chosen Wildfire Drone for your business needs. Fire detection and image/video transmission call for a capable fire detection algorithm and a reliable wireless communication model. We provide a powerful fire identification model based on a deep learning algorithm, together with a QPSK wireless communication system that has been custom-designed to meet your needs. Some of the key highlights include:

● 93% fire identification accuracy for real-time image analysis

● The fire identification model maintains its accuracy even in the presence of strong noise

● The fire segmentation algorithm can correctly circle the fire in thermal images even when the ambient temperature is close to that of the fire

● The wireless communication system is not just a simple transmitter and receiver; it also includes error correction and noise reduction stages. After multiple tests and modifications, it can transmit our image data accurately.

The purpose of this user manual is to help you, the client, successfully use and

maintain the Wildfire Drone product in your actual business context going

forward. Our aim is to make sure that you are able to benefit from our

product for many years to come!

According to statistics from the National Interagency Fire Center (NIFC), wildfires burned 10 million acres of land in 2016 and caused $6 billion in irrecoverable damages from 1995 to 2014 in the United States. Wildfires not only impact wildlife but, more importantly, endanger human lives. Therefore, early detection of wildfires before they get out of control is an urgent requirement. Wildfires often start in remote forest areas where common fire detection methods, such as lookout tower stations, fail to detect them in a timely manner. Moreover, conventional detection approaches can barely provide sufficient information about the precise fire locations, the direction of fire expansion, etc. There are two general approaches to detecting forest fires: satellite images and sensor networks. However, satellites cannot provide real-time video or images since the quality of their images is highly impacted by weather conditions. Fire detection using wireless sensor networks is costly and maintenance-intensive when covering wide forest areas. Manned aircraft can precisely survey a wide area in a short amount of time; however, this solution is costly and endangers the lives of pilots due to high-temperature airflow and thick smoke.

Unmanned Aerial Vehicles (UAVs) have recently been used in wildfire detection and management as a low-cost and agile way to collect data and imagery, thanks to their unique features such as 3-dimensional movement, ease of flying, maneuverability, and flexibility. UAV networks can support such operations in several ways, including tracking the fire front line, fast mapping of wide areas and damage assessment, real-time video streaming, and search-and-rescue. In addition, the wireless communication link gives the ground station a chance to double-check the images/videos so that it can give firefighters the fastest and most accurate information.

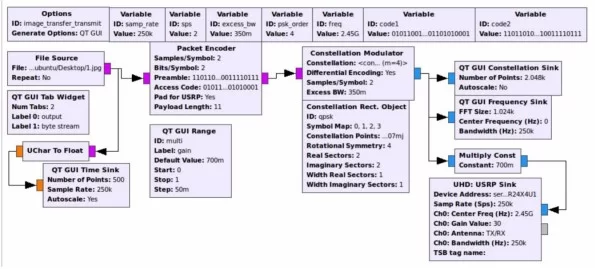

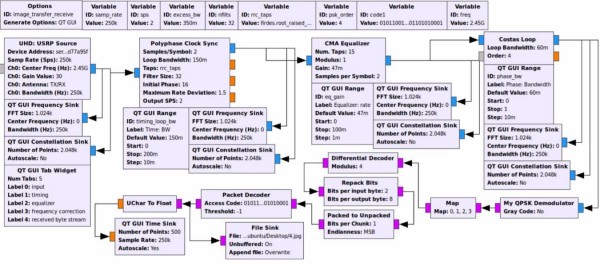

Subsystem 1 – wireless communication

Overview

The subunit in charge of the wireless communication part of the project has already designed and built the corresponding function blocks on the transmitter side and the receiver side using GNU Radio, which means we have met the basic goal of our project. However, due to COVID-19, and at the suggestion of Professor Afghah and our client, we need to stay in our dormitories to finish the remaining part, which includes the simulation of the whole transmission process. Unfortunately, our members' computers run Windows, but the software needs a Linux environment. We are therefore installing a virtual machine with Linux so that we can test our function blocks on our own computers. In my view, the main future challenge may be simulating transmission and reception through a simulated channel. To realize the simulation, we should build a simulated wireless channel with practical characteristics, which means we need to account for the complex real-world environment in which the wireless communication will operate. To ensure better performance of the communication system, we may also modify our two basic function blocks to make them better suited to the new environment or to make them more efficient.
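
As a rough illustration of what simulating transmission through a channel can look like, the sketch below sends random bits through a QPSK modulator, a noisy channel, and a hard-decision demodulator, then counts bit errors. It is not our GNU Radio flowgraph: the bit-to-symbol mapping, the additive white Gaussian noise (AWGN) channel, and all function names are assumptions chosen for illustration, and a channel with practical characteristics would also need effects such as fading and interference.

```python
import math
import random

SCALE = 1 / math.sqrt(2)  # gives every symbol unit energy (Es = 1)


def qpsk_modulate(bits):
    """Map bit pairs to QPSK symbols: first bit sets I, second bit sets Q."""
    if len(bits) % 2:
        raise ValueError("QPSK needs an even number of bits")
    return [
        complex((1 - 2 * bits[i]) * SCALE, (1 - 2 * bits[i + 1]) * SCALE)
        for i in range(0, len(bits), 2)
    ]


def awgn_channel(symbols, snr_db, rng):
    """Add complex Gaussian noise for a given Es/N0 in dB (assumed channel model)."""
    noise_power = 10 ** (-snr_db / 10)   # total noise variance, since Es = 1
    sigma = math.sqrt(noise_power / 2)   # split evenly between I and Q
    return [s + complex(rng.gauss(0, sigma), rng.gauss(0, sigma)) for s in symbols]


def qpsk_demodulate(symbols):
    """Hard decision: a negative I or Q component decodes as bit 1."""
    bits = []
    for s in symbols:
        bits.append(1 if s.real < 0 else 0)
        bits.append(1 if s.imag < 0 else 0)
    return bits


def bit_error_rate(n_bits, snr_db, seed=0):
    """Send n_bits random bits through the simulated link and return the BER."""
    rng = random.Random(seed)
    tx_bits = [rng.randint(0, 1) for _ in range(n_bits)]
    rx_symbols = awgn_channel(qpsk_modulate(tx_bits), snr_db, rng)
    rx_bits = qpsk_demodulate(rx_symbols)
    errors = sum(t != r for t, r in zip(tx_bits, rx_bits))
    return errors / n_bits


if __name__ == "__main__":
    for snr_db in (0, 5, 10):
        print(f"Es/N0 = {snr_db} dB, BER = {bit_error_rate(20000, snr_db):.4f}")
```

Sweeping `snr_db` in this way shows how quickly bit errors appear as the channel gets noisier, which is the kind of check we would repeat once the function blocks run against a more realistic channel.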

The transmitter side:

Subsystem 2 – image processing

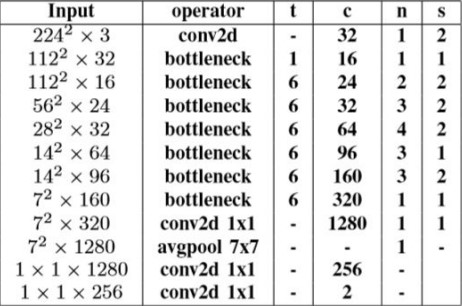

Fire identification (Haiyu Wu)

One of the main parts of this project is to identify whether there is a fire in the image/video captured by the camera. My approach to this part is to use a neural network. Neural networks are among the most powerful tools for image classification, which makes them a good fit for our case.

However, convolutional neural networks (CNNs) normally need powerful computing hardware, which is not available to us on an embedded device. Fortunately, there is a lightweight network architecture named MobileNet. Its structure allowed us to deploy it on a Raspberry Pi 4 and still achieve high classification accuracy.

When we tried to train the neural network, there was another challenge. To give our neural network high classification ability, we would need a huge image dataset (for example, over 100 thousand images). However, our project concerns wildfire, a specialized domain for which it is hard to collect such a large dataset. Therefore, we used another useful technique called transfer learning: the network is first pre-trained on the ImageNet dataset, and then we re-train it on our own dataset to improve its classification ability for our case.

Normal image:

● Number of images: 1102 with fire and 1102 without fire for training; 305 with fire and 49 without fire for testing.

● Training time: 30 minutes on a GTX 1080 Ti.

● Identification time: 0.025 s per batch (16 images)

● Resolution: 224 * 224

● Accuracy: 96.4%

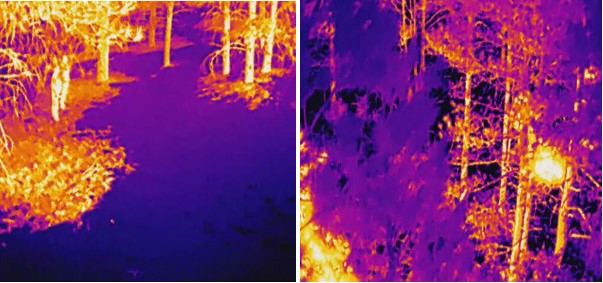

Thermal image:

● Number of images: 998 with fire and 969 without fire for training; 224 with fire and 112 without fire for testing.

● Training time: 15 minutes on a GTX 1080 Ti.

● Identification time: 0.032 s per batch (16 images)

● Resolution: 224 * 224

● Accuracy: 64.2%
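
To relate the identification times above to real-time operation, the per-batch times can be converted into images per second. The sketch below does this arithmetic for the two reported figures. The helper names and the 30 frames-per-second camera rate are assumptions for illustration, and the figures only apply to the hardware on which the timings were measured, which is not restated here.

```python
def images_per_second(batch_time_s, batch_size=16):
    """Throughput implied by the time needed to classify one batch."""
    return batch_size / batch_time_s


def keeps_up_with(batch_time_s, camera_fps, batch_size=16):
    """True if the classifier processes frames at least as fast as they arrive."""
    return images_per_second(batch_time_s, batch_size) >= camera_fps


normal_rate = images_per_second(0.025)   # normal-image model, 0.025 s per batch
thermal_rate = images_per_second(0.032)  # thermal-image model, 0.032 s per batch

if __name__ == "__main__":
    print(f"normal:  {normal_rate:.0f} images/s")
    print(f"thermal: {thermal_rate:.0f} images/s")
    # 30 fps is a hypothetical camera frame rate, not a figure from this manual.
    print("keeps up with 30 fps:", keeps_up_with(0.032, camera_fps=30))
```

The reported times correspond to 640 and 500 images per second. Note that batching 16 frames also means the first frame of a batch waits for the rest to arrive, so latency depends on the camera rate as well as on throughput.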

Results:

- For the normal images, our network works well in fire identification and the speed is very

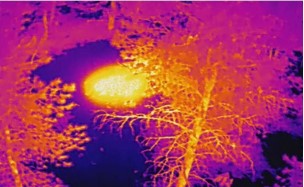

fast which could be real-time. - For the thermal images, the accuracy is really low. The main reason we figured it out is

when we take the thermal videos with a FLIR camera, it will automatically adjust the

color (shown below). The features of these two images are really close, which is hard for

our computer to extract the unique feature for fire and no fire.
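
One way to put the two accuracy figures in context is to compare each with the score of a trivial classifier that always predicts the larger class of its test set. The sketch below computes that baseline from the test-set counts listed earlier; the function name is ours, and this comparison is an added sanity check, not part of the original evaluation.

```python
def majority_baseline(n_fire, n_no_fire):
    """Accuracy of a classifier that always predicts the larger class."""
    return max(n_fire, n_no_fire) / (n_fire + n_no_fire)


normal_baseline = majority_baseline(305, 49)    # normal-image test set
thermal_baseline = majority_baseline(224, 112)  # thermal-image test set

if __name__ == "__main__":
    print(f"normal:  baseline {normal_baseline:.1%}, reported 96.4%")
    print(f"thermal: baseline {thermal_baseline:.1%}, reported 64.2%")
```

With these counts the baseline is about 86.2% for the normal test set and about 66.7% for the thermal test set. The normal-image model is well above its baseline, while the thermal-image model is slightly below what always answering "fire" would score, which is consistent with the difficulty described above.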

The second part of the image processing aspect of our project was image segmentation. This pinpoints the location of the fire on the image that is presented to the user. We chose MATLAB for its built-in image processing library and because we primarily wanted to run the segmentation on the ground station. However, our MATLAB code can easily be adapted to Octave, which would allow us to run it on Raspbian OS.

I decided to use only thermal images with the “Fusion” color palette (Figure 1) for image segmentation, as the diversity of colors helped identify hotspots more effectively. I considered two approaches: the first used the complete RGB image, and the second converted the original image to a binary image.
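
The binary-image approach can be illustrated with a small sketch: threshold an intensity image into a 0/1 mask, then take the bounding box of the hot pixels as the region to mark. This is written in Python for illustration and is not our MATLAB code. The threshold value of 200, the tiny sample frame, and the function names are all assumptions, and a real thermal frame would first need to be converted from its color palette to a single intensity channel.

```python
def to_binary(gray, threshold=200):
    """Return a 0/1 mask: 1 where the pixel intensity is at least `threshold`."""
    return [[1 if px >= threshold else 0 for px in row] for row in gray]


def bounding_box(mask):
    """Smallest (top, left, bottom, right) box holding every 1 pixel, or None."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, value in enumerate(row) if value]
    if not rows:
        return None  # no pixel passed the threshold
    return (min(rows), min(cols), max(rows), max(cols))


# Hypothetical 4x4 intensity frame with a bright 2x2 hotspot in the middle.
frame = [
    [20, 30, 25, 22],
    [28, 210, 240, 35],
    [26, 225, 250, 30],
    [21, 27, 33, 24],
]

mask = to_binary(frame)
box = bounding_box(mask)

if __name__ == "__main__":
    for row in mask:
        print(row)
    print("hotspot box (top, left, bottom, right):", box)
```

A fixed threshold is the simplest choice; it would likely need tuning, or replacing with an adaptive rule, when the background is nearly as bright as the fire.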

Source: The User Manual