Introduction

This work describes my first experiment in studying Artificial Perception for self-driving technology. Vehicle Artificial Perception is the capability that lets a self-driving car understand its surrounding environment through a computer-based system. The system can combine several kinds of sensors, such as cameras, Lidar, radar, GPS and IMU, to gather information around the car. Intelligent software then processes the sensor data to recognize and classify surrounding objects such as cars, people, road markings and traffic signs. Based on its understanding of the detected objects, the software can predict their behavior and plan an appropriate reaction to each situation.

Creating such intelligent software has challenged Artificial Intelligence researchers for decades. Recently, however, Deep Learning has offered a promising approach: instead of being programmed with hand-written rules, Deep Learning software learns its own Artificial Neural Networks from examples. The developers' job is now to provide training data and to evaluate how the neural network evolves. As a result, the intelligent algorithms are generated by a data-driven training process rather than written by hand.

Several Deep Learning frameworks, such as Microsoft CNTK, TensorFlow, Theano, Nvidia, Caffe and others, are currently available to help train and build Deep Learning software. However, the data needed to feed the training process is still lacking. That is one of the motivations for creating this experimental system: real-world data collected while the vehicle operates will be used as fuel for Deep Learning research.

Building reliable hardware and software for Artificial Perception systems is key to advancing self-driving technology. The purpose of this work is to create an experimental system that supports experimentation and research on Vehicle Artificial Perception. The work is still in its early stages, but I hope these experiences will be useful to people who share the same passion.

System Overview

The Artificial Perception system is one of the most important systems in a self-driving car, because its decisions can make the difference between life and death. It must be designed to the highest safety standards. In my opinion, such a system should meet the following criteria:

The system should be divided into modules, each responsible for one specific task, to improve performance. The Sensor Acquisition Module, for example, communicates with the sensors and receives their data, while the Main Module makes predictions and decisions and sends them to the engine control module. The software should run on a real-time operating system, so that the system response time can be predicted with high accuracy. The modules also need to communicate with each other and with the rest of the system over a high-speed, reliable network in order to collaborate effectively. In addition, the system should be designed with redundancy. For example, the Main Module and essential sensors such as cameras and radar are the most critical components, so they should be duplicated in case a failure occurs from which the system cannot recover in time.
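As a rough illustration of the redundancy criterion, the sketch below picks between a primary and a backup Main Module based on heartbeats. It is not taken from this project: the names `ModuleStatus` and `select_active`, the heartbeat mechanism and the 0.1 s timeout are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModuleStatus:
    """Last heartbeat seen from one module (hypothetical helper type)."""
    name: str
    last_heartbeat_s: float = 0.0

    def beat(self, now_s: float) -> None:
        """Record that the module reported in at time now_s."""
        self.last_heartbeat_s = now_s

    def is_alive(self, now_s: float, timeout_s: float) -> bool:
        """A module counts as alive if it reported within the timeout."""
        return (now_s - self.last_heartbeat_s) <= timeout_s


def select_active(primary: ModuleStatus, backup: ModuleStatus,
                  now_s: float, timeout_s: float = 0.1) -> Optional[ModuleStatus]:
    """Prefer the primary; fall back to the backup; None if both are silent."""
    if primary.is_alive(now_s, timeout_s):
        return primary
    if backup.is_alive(now_s, timeout_s):
        return backup
    return None


# Both modules report at t = 1.00 s, then only the backup keeps reporting.
main_a = ModuleStatus("main-A")
main_b = ModuleStatus("main-B")
main_a.beat(1.00)
main_b.beat(1.00)
main_b.beat(1.25)  # main-A has missed its heartbeat (assumed failure)

active = select_active(main_a, main_b, now_s=1.30)
print(active.name if active else "no module alive")  # prints "main-B"
```

On a real-time operating system the timeout would be chosen from the guaranteed response time of the modules. The value used here is only a placeholder.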

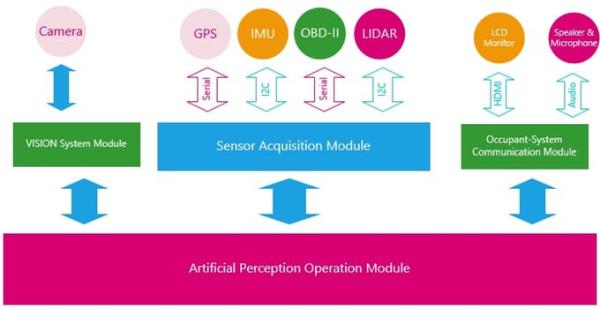

To test these design specifications, an experimental model is proposed, as shown in the diagram above. The experimental system includes four main modules: the Sensor Acquisition Module, the Vision Module, the Occupant-System Communication Module and the Artificial Perception Operation Module. The Sensor Acquisition Module acquires data from the sensors. In this system, affordable sensors such as Lidar, GPS, IMU and a speedometer give the system information about the vehicle's movement and surroundings. The Vision Module consists of one or more cameras and a computer running software that identifies and classifies the objects captured in the images. Although the camera is also a sensor, it is not integrated into the Sensor Acquisition Module, because image processing requires high computing power. Moreover, transmitting large volumes of high-resolution image data over the network is time-consuming and can cause network congestion that slows down the whole system. Data from the Sensor Acquisition Module and the Vision Module is transmitted over a communication network to the Artificial Perception Operation Module, a powerful computer running intelligent software capable of predicting the behavior of surrounding objects. This information is then sent to the self-driving system to drive the car safely. In addition, an Occupant-System Communication Module is included to test the interaction between the occupant and the system.
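To give a feel for why raw images are kept off the shared network, the following back-of-the-envelope sketch compares the data rate of uncompressed video with that of a list of detected objects. All figures (1920x1080 resolution, 3 bytes per pixel, 30 frames per second, 20 objects of 32 bytes each) are assumptions chosen for illustration, not measurements from this system.

```python
def raw_video_rate_bytes(width, height, bytes_per_pixel, fps):
    """Bytes per second needed to send uncompressed video frames."""
    return width * height * bytes_per_pixel * fps


def detection_rate_bytes(objects_per_frame, bytes_per_object, fps):
    """Bytes per second needed to send only the detected-object records."""
    return objects_per_frame * bytes_per_object * fps


# Assumed camera: 1920x1080, 24-bit colour, 30 frames per second.
raw = raw_video_rate_bytes(1920, 1080, 3, 30)
# Assumed Vision Module output: 20 objects per frame, 32 bytes per object.
det = detection_rate_bytes(20, 32, 30)

print(f"raw video:  {raw / 1e6:.1f} MB/s ({raw * 8 / 1e9:.2f} Gbit/s)")
print(f"detections: {det / 1e3:.1f} kB/s")
print(f"ratio:      {raw // det}x")
```

Under these assumptions a single camera would need about 1.49 Gbit/s for raw frames, against roughly 19 kB/s for the detection results, which is why processing the images inside the Vision Module and sending only the results is attractive.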

Sensors

Sensors are essential components of Artificial Intelligence systems; without them the system is useless. Most of the sensors in such a system learn about their surroundings by scanning, each with its own technique. Cameras capture light reflected from distant objects onto a matrix of light-sensitive cells and digitize the light intensities into pixel matrices. Unlike cameras, which only collect light passively, Lidar sensors actively emit laser beams to detect objects in the direction of emission. Lidar sensors also measure the distance between the vehicle and an object from the time the reflected beam takes to return. Radar sensors also detect objects by reflection, but they scan with radio waves instead of laser light. Sound waves can be as effective as laser beams and radio waves for scanning distant objects: sonar sensors emit sound waves and listen for the echoes bouncing back from an object to detect it and measure its distance.
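The distance measurement described above for Lidar and sonar rests on the same time-of-flight relation: the pulse travels to the object and back, so the distance is half the wave speed multiplied by the round-trip time. The sketch below is a minimal illustration of that relation. The function name, the example echo times and the 343 m/s speed of sound (dry air at about 20 °C) are assumptions for the example, not values from the sensors used in this project.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum
SPEED_OF_SOUND_M_S = 343.0          # approximate, dry air at about 20 °C


def tof_distance_m(round_trip_s, wave_speed_m_s):
    """Distance to the target from a round-trip echo time.

    The pulse covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return wave_speed_m_s * round_trip_s / 2.0


# Assumed example echoes: 200 ns for a laser pulse, 10 ms for a sound pulse.
lidar_d = tof_distance_m(200e-9, SPEED_OF_LIGHT_M_S)
sonar_d = tof_distance_m(0.010, SPEED_OF_SOUND_M_S)
print(f"lidar: {lidar_d:.2f} m, sonar: {sonar_d:.3f} m")
```

With these example times the laser echo corresponds to about 30 m and the sound echo to about 1.7 m, which also shows how much finer the timing resolution of a Lidar has to be compared with a sonar.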

Each sensor has its advantages and disadvantages. To a camera, an object is invisible if it neither emits nor reflects light, or if it is hidden by rain or snowfall. Compared to radar or Lidar, however, cameras provide richly detailed images, since they can cover a wider area at a faster rate, although their range may not be as long. In my opinion, a reliable Artificial Perception system should combine the advantages of these sensors.

The sensors used in this project are affordable and easy to find on the market. Although they are not designed for high-accuracy applications or for harsh environments such as real driving conditions, they are well suited to study and research. At the moment, the project focuses on hardware design and on learning the concepts behind the system. However, the hardware is designed so that other sensors can be tested when they become available. The following sensors are being tested in this system.
