Introduction

The increasing significance of big data analysis is evident in various scientific and engineering fields. Handling vast datasets often leads to slow execution times for computationally demanding algorithms, such as the 3-D Fourier transform or 3-D prestack migration in geophysics (Yilmaz, 2001). Furthermore, challenges arise when datasets surpass the available random access memory (RAM) in a computing system. In such cases, the data must be divided into smaller subsets and processed individually, further extending the processing time. As datasets grow and Moore's Law approaches its limits (Kumar, 2015), traditional sequential algorithms are proving progressively inefficient for managing state-of-the-art parallel and distributed data analysis tasks.

One viable approach to enhance the efficiency of seismic processing involves the creation of parallel and distributed algorithms. Examples include the distributed-memory Fast Fourier Transform (Gholami et al., 2016) and parallel Reverse Time Migration (Araya-Polo et al., 2011). With the growing prevalence of parallel and distributed computing, the demand for their study is also on the rise. In this project, we constructed a distributed computing system using four Raspberry Pis and conducted tests using straightforward parallel and distributed algorithms.

Contextual details

In this section, we will elucidate the principles behind parallel computing, distributed computing, and the Raspberry Pi platform.

Parallel processing

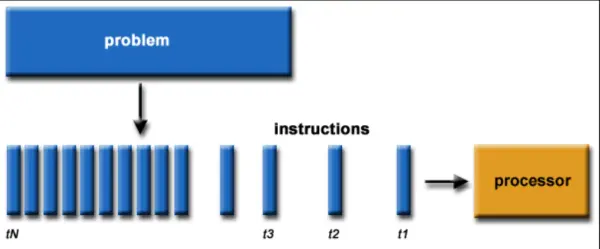

Conventionally, algorithms follow a sequential execution pattern (Figure 1):

- A problem is broken into a discrete series of instructions

- Instructions are executed sequentially

- All instructions are executed on a single processor

- Only one instruction is executed at any moment in time

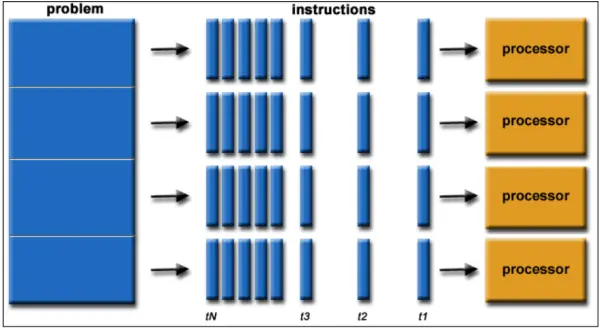

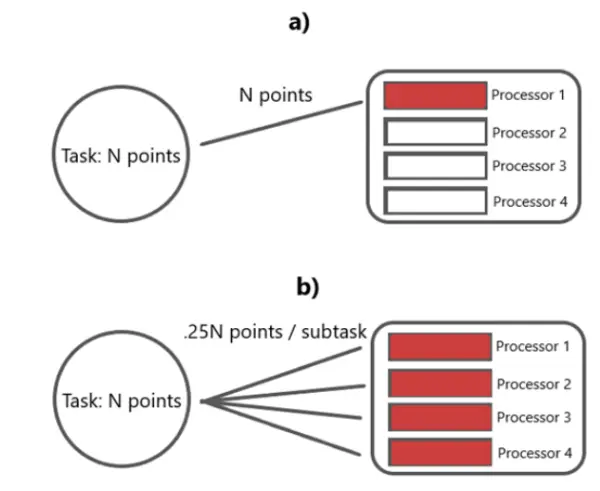

Parallel computing involves the concurrent utilization of multiple computing resources to solve a computational problem (Figure 2):

- A problem is broken into discrete parts that can be solved concurrently

- Each part is further broken down to a series of instructions

- Instructions from each part execute simultaneously on different processors

- An overall control/coordination mechanism is employed

By breaking a task into X subtasks and executing them concurrently, the execution speed can be enhanced by a factor of X.

Distributed processing

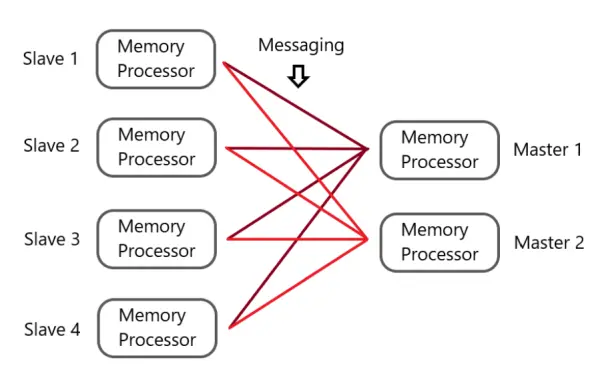

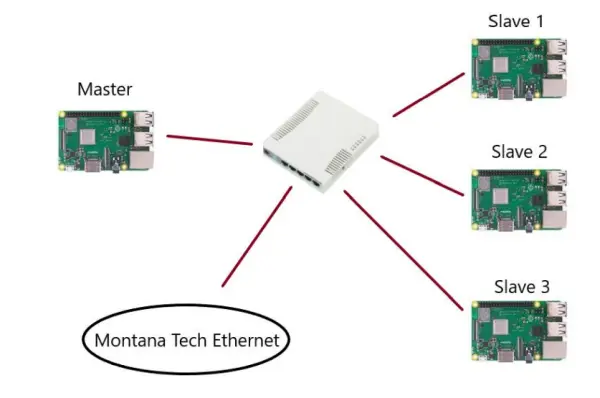

A distributed computing system comprises both hardware and software components distributed across multiple networked computers. These components communicate and coordinate their actions by exchanging messages, working collaboratively to accomplish shared objectives or tasks (Steen & Tanenbaum, 2017). A typical distributed architecture is illustrated in Figure 3:

In a distributed system, every node comprises both a memory component (RAM) and a processor component (CPU). The master nodes take on the responsibility of distributing and coordinating data and tasks throughout the system, while the slave nodes carry out the tasks delegated to them by the master nodes. The primary objective of a distributed system is to make optimal use of the collective memory and processing capabilities of various computers to solve computational problems with efficiency and fault tolerance. In other words, even if a few nodes encounter failures, the system should continue to operate seamlessly.

Raspberry Pi

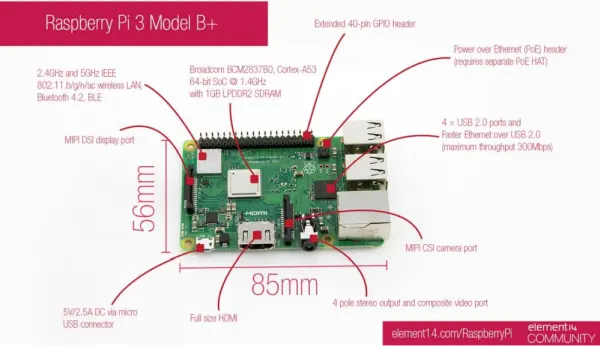

A Raspberry Pi (RPi) is a compact, cost-effective single-board computer, roughly the size of a credit card. It can run Linux and various lightweight operating systems designed for ARM processors. ARM processors are part of a family of multi-core CPUs founded on the RISC (reduced instruction set computer) architecture developed by Advanced RISC Machines (ARM). Figure 4 provides an in-depth breakdown of the components found in the RPi 3 Model B+.

In this project, we utilize RPis, which come equipped with 1GB of RAM and a quad-core CPU running at 1.4GHz, all built on a sturdy motherboard. RPis demonstrate excellent compatibility with each other, allowing for easy scalability by simply adding more RPi units. After carefully evaluating various alternatives, such as the Qualcomm DragonBoard and ASUS Tinker Board, we concluded that the RPi was the ideal choice for this project due to its favorable balance of cost-effectiveness, functionality, portability, and scalability.

Building the system

Components and Costs

The comprehensive expenditure for this project amounts to $262.71, as outlined in Table 1. It's worth noting that we were able to make use of an existing monitor with an HDMI-VGA cable, as well as a keyboard and mouse already available in the lab room, thereby incurring no additional costs for these components.

Table 1: Components and Costs

| Components | Quantity | Unit

Price |

Cost |

| Element14 Raspberry Pi 3 B+ | 4 | $38.30 | $153.20 |

| Ethernet Cable 5-pack | 1 | $18.00 | $18.00 |

| USB 2.0 Cable 2-pack | 2 | $4.00 | $8.00 |

| 16 GB MicroSDHC Flash Card | 4 | $4.00 | $16.00 |

| TP-Link 5 Port Fast Ethernet Switch | 1 | $11.99 | $11.99 |

| Dual USB Wall Charger (2-pack) | 1 | $8.00 | $8.00 |

| 15-piece Heatsink pit | 1 | $8.00 | $8.00 |

| USB Micro Charger DC 5V 3A | 4 | $9.88 | $39.52 |

| Total | $262.71 |

Overall Structure

The essential elements required for constructing a computing cluster include:

- Computer hardware

- Operating System (OS)

- An MPI (Message Passing Interface) library

- An Ethernet switch

Figure 5 illustrates the primary network configuration. The system design comprises four RPi nodes, a five-port Ethernet switch, the Raspbian operating system, and MPICH with a Python wrapper known as MPI4PY. The RPis were connected to the Montana Tech network through the Ethernet switch.

Operating Environment

In this project, we evaluated two distinct operating systems suitable for the Raspberry Pi: Raspbian and Arch Linux ARM. Raspbian is a Debian-based OS specifically tailored for the RPi. It comes with a pre-installed full desktop environment and an extensive collection of precompiled packages, making it an excellent choice for newcomers to RPi and Linux. However, one drawback of Raspbian is its bulkiness due to the inclusion of numerous unnecessary packages, which can result in slower installation and startup times.

However, Raspbian adopts a comprehensive approach, whereas Arch Linux ARM follows a minimalist philosophy. In the default installation of Arch Linux ARM, users are provided with a stripped-down, minimal environment that boots to a command-line interface (CLI) with network support in a swift 10 seconds. Arch Linux empowers users to begin with a clean, rapid setup and selectively install packages as required, allowing for system optimization for various purposes, such as data processing or system administration. One drawback of Arch Linux is its steeper learning curve, particularly for new users who must manually install many packages and their dependencies using an unfamiliar command-line interface (CLI).

Given that the primary objective of this project is to delve into RPi, Linux, and parallel and distributed computing, we opted for Raspbian as the operating system for our system. Arch Linux could be an alternative for users with a higher level of proficiency in the Linux operating system.

MPI and programming language

MPICH is a high-performance and extensively compatible implementation of the MPI standard, known for its robustness. We selected MPICH due to its support for both synchronous and asynchronous message passing. Additionally, the installation process of MPICH and its Python wrapper, mpi4py, on the RPi is rapid and straightforward. To ensure MPICH's proper functioning, it necessitates that all nodes possess password-less Secure Shell (SSH) access to one another. This was accomplished by generating a set of public-private keys for each node and subsequently distributing the public keys of all nodes into the authorized keys list of each node.

The selection of Python as the programming language was a natural decision, primarily owing to its widespread usage in the scientific computing realm and our personal proficiency with the language. Python stands out as an ideal choice for swift prototyping, thanks to its syntax and its interpretation and dynamic typing characteristics. It's worth noting that Python does come with certain performance trade-offs, such as the absence of type safety and relatively slower execution times. Nevertheless, for the objectives of this project, Python proved to be entirely adequate.

Testing the system

After constructing the system, our next step involved evaluating its fundamental functionality using a straightforward MPI program. Following this initial test, we conducted a Monte Carlo simulation using three distinct methods (sequential, parallel, and distributed) and compared the performance outcomes across these methods.

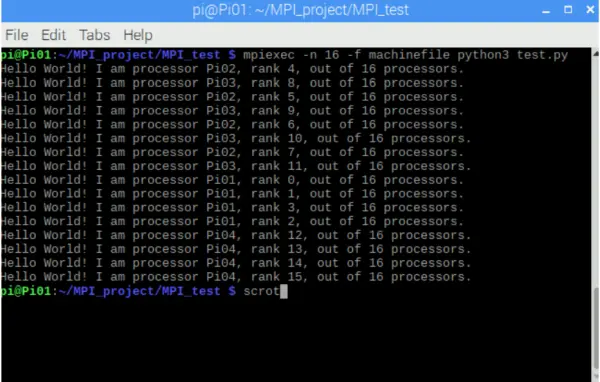

Functionality testing

A basic Python test script, displaying a “hello world” message, was transmitted via MPI from the master node to the slave nodes. Each of the 16 processors within the network was required to acknowledge the master, confirming their correct operation (as depicted in Figure 6).

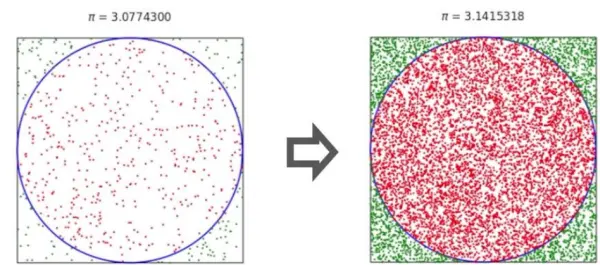

Monte Carlo approximation of Pi

Monte Carlo methods constitute a diverse category of computational algorithms that hinge on iterative random sampling to derive numerical outcomes. The fundamental concept behind Monte Carlo methods is the application of randomness to address problems that may otherwise exhibit deterministic characteristics. In this project, we harnessed a Monte Carlo algorithm to estimate the value of π, as illustrated in Figure 7.

The steps are:

- Produce a point with random coordinates within the unit square.

- Determine whether the point lies within the circle inscribed within the unit square and document the outcome.

- Iterate through steps 1 and 2 a total of N times.

- Tally the number of points that land within the circle inscribed within the unit square (n).

- Derive the value of Pi through:

- 𝜋/4 = 𝑛/N → 𝝅 = 𝟒 × 𝒏/N(for very large N)

This algorithm exhibits strong parallelizability since each generated point operates independently of all other points.

Parallel Processing on a single RPi

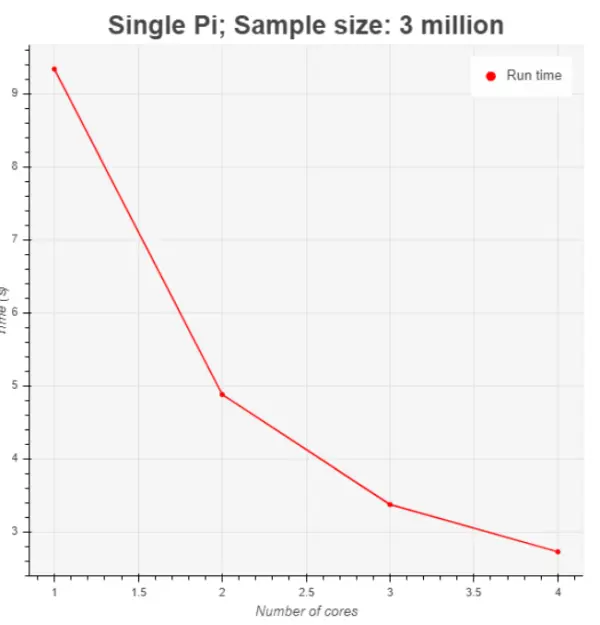

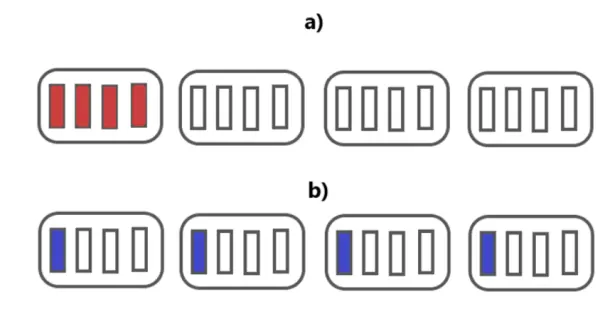

The second testing phase involved the Monte Carlo simulation. Initially, we executed the Monte Carlo algorithm with a sample size of 3 million sequentially, which means only one processor was responsible for the task. Subsequently, we leveraged the Python multiprocessing library to parallelize the task into 2, 3, and 4 subtasks, with each subtask being processed on a distinct processor (or core) within a single node (as depicted in Figure 8). In all cases, the sample size remained at 3 million.

Before commencing the experiment, our anticipation was that parallelization would lead to a significant reduction in the runtime of the Monte Carlo algorithm. This expectation was based on the notion that the computational load would be distributed across multiple processors instead of relying on a single processor. The outcomes (as illustrated in Figure 9) validate this hypothesis, revealing a nearly linear enhancement in runtime as more cores engaged in distributing the workload.

Parallel vs Distributed Computing

Following an evaluation of the runtime performance of both sequential and parallel algorithms within a single node, our exploration extended to the distribution of tasks and data throughout the network. Initially, we aimed to assess the impact of converting the data into TCP/IP format and transmitting it between the network nodes bidirectionally. Subsequently, we allocated the task to all 16 nodes within the network to showcase the advantages of harnessing the full spectrum of available computing resources.

4 cores: single node vs four nodes

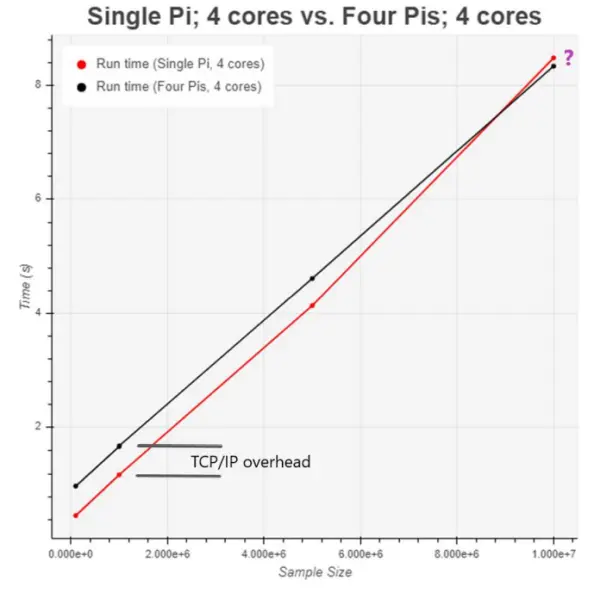

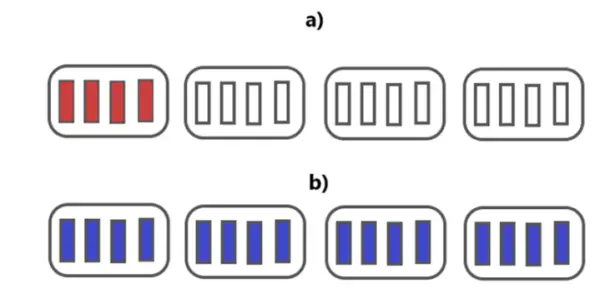

To assess the impact of data conversion and transmission, we fragmented a Monte Carlo simulation into four subtasks. Subsequently, we conducted a performance comparison between running these four subtasks on a single node and distributing them across four distinct nodes (as depicted in Figure 10). We employed sample sizes of 100 thousand, 1 million, 5 million, and 10 million for these evaluations.

The outcomes, as displayed in Figure 11, aligned with our expectations for the first three data points. The overhead associated with data conversion and transfer led to an increased runtime when we distributed the subtasks across the network. However, the results for the final simulation, with a sample size of 10 million, were unexpectedly favorable. Surprisingly, distributing the four subtasks across different nodes exhibited better performance than processing them on a single node.

A plausible explanation for this phenomenon could be attributed to the fact that for computationally-intensive tasks, especially with large sample sizes, a single RPi might generate excessive heat, causing thermal throttling and consequent performance degradation. This issue would be mitigated by distributing the subtasks across the network. Regrettably, due to time constraints, we were unable to empirically validate this hypothesis.

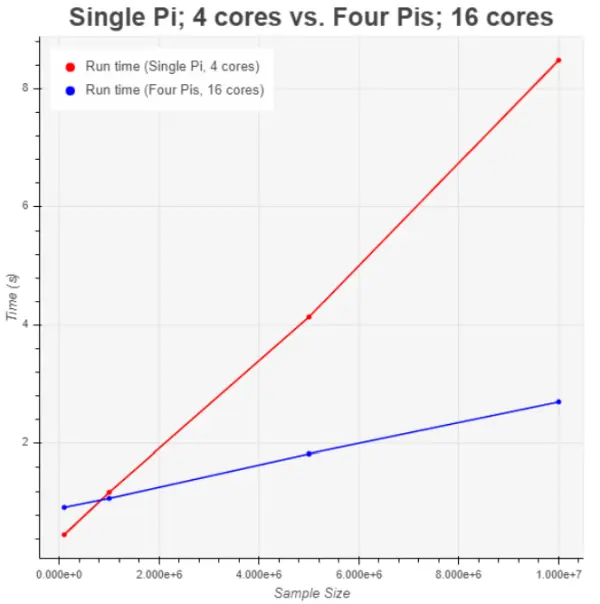

16 cores vs 4 cores

In the concluding experiment, our aim was to illustrate the advantages of a completely distributed and fully parallel system that maximized the utilization of all available computing resources. To accomplish this, we conducted a performance evaluation by contrasting the execution of 16 subtasks distributed across the network with the processing of 4 subtasks within a single node, as depicted in Figure 12.

The outcomes, as illustrated in Figure 13, reveal a remarkable enhancement in runtime when the involvement of the other three nodes in distributing the workload is considered. In the case of a sample size of 10 million, the runtime improved by nearly a factor of 4.

Challenges encountered

Our initial challenge arose during the installation of software packages. Initially, we attempted to compile most packages from their source code, a process that proved exceedingly tedious and error-prone. We encountered numerous compatibility issues stemming from missing dependencies, necessitating a complete reformatting of the SD cards and a fresh installation of the operating systems. Subsequently, we learned that we could streamline the process by utilizing the Advanced Package Tool (APT), which automates package installation in standard directories and manages dependencies. The only drawback to using APT is a lack of customization options.

Another hurdle we confronted involved providing adequate power to the RPis. Initially, our power sources could only supply 1.1 A to each RPi, which proved insufficient for executing computationally-intensive tasks. Whenever we attempted such tasks, our master node repeatedly rebooted. To address this issue, we successfully resolved it by employing more robust power sources capable of delivering 2.0~2.5 A to each RPi.

Conclusions

We effectively constructed and validated the functionality of a computing cluster comprising four Raspberry Pis. Furthermore, we showcased the advantages of task and data parallelization and distribution using this cluster. Our cost-effective cluster represents a resilient distributed system, well-suited for various training and research endeavors in the domain of parallel and distributed computing.

Looking ahead, our aim is to expand the system to accommodate hundreds of processors. To accomplish this, we will need to devise a physical framework capable of housing the RPis and establish a dependable power supply mechanism for the system. Additionally, we will explore potential optimizations for this prototype, which may include implementing a more streamlined operating system and/or employing a more high-performance programming language.