This sketch demonstrates an example of accelerometer data processing using a classification tree. The classifier code was generated using the Python script classifier_gen.py using recorded and labeled training data. The underlying classification tree was automatically generated using the Python scikit-learn library.

The purpose of the classifier is to categorize a multi-dimensional data point into an integer representing membership within a discrete set of classifications. In the sample model, the data is two-dimensional for clarity of plotting the result. The data points could be extended to higher dimensions by including multiple samples over time or other sensor channels.

There are a couple of steps to using this approach in your own system.

- Decide how to create different physical conditions which produce meaningful categories of data.

- Decide what combination of sensor inputs and processed signals might disambiguate the categories. This will constitute the definition of each data point.

- Set up a program with the data sampling and filtering portion of your system as a means to recording real-world data.

- If your system can support a few extra user inputs, the data collection process will be easier if the data can be labeled while it is being collected. E.g., adding a ‘Record’ button and some category buttons could support emitting labeled data directly from the Pico. (This was not done in the sample code below).

- Record data from the real system under the different conditions.

- Trim the data as needed to remove spurious startup transients or other confounding inputs.

- Run classifier_gen.py to process the training data file into code.

- For 2-D data, inspect the plot output as a sanity check. You may wish to tune the modeling parameters or adjust your data set and regenerate the model.

- Incorporate the final classifier code in your sketch.

- Decide whether the classifier output needs additional processing, e.g. debouncing to remove spurious transients.

Sample Model

The sample model was built by recording accelerometer data generated by this sketch under five different physical conditions. The individual files were concatenated into a single training.csv file.

This particular example is somewhat contrived, since a reasonable two-dimensional classifier could be built by hand after inspecting the data. But this can be significantly harder in higher dimensions, e.g., if each data point were extended to include a few samples of history.

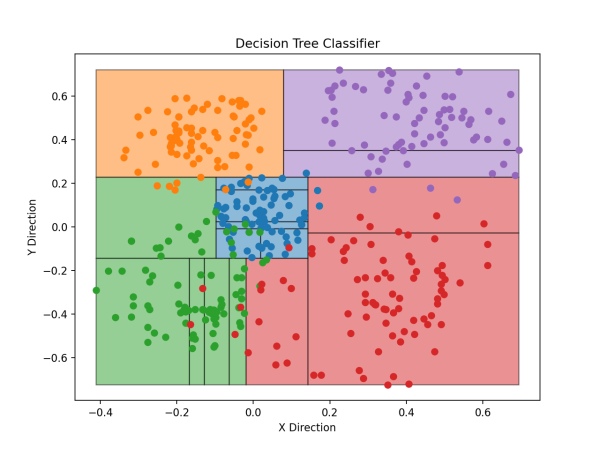

The binary classifier tree drawn over the training data. Each point represents one sample of X and Y accelerometer tilt values. Each color represents a labeled class: blue for ‘center’, and the other colors for diagonal directions. The black lines represent the binary splitting lines subdividing sample regions; each splitting line corresponds to a if/else block in the classifier code or a node in the tree data.

Related files:

demo.py

Direct downloads:

- demo.py (to be copied into

code.pyon CIRCUITPY) - linear.py (to be copied to CIRCUITPY without changing name)

- classifier.py (to be copied to CIRCUITPY without changing name)

# demo.py :

# Raspberry Pi Pico - Decision tree classifier demo.

# No copyright, 2020-2021, Garth Zeglin. This file is explicitly placed in the public domain.

# The decision tree function is kept in a separate .py file which

# was generated from data using classifier_gen.py.

# Import CircuitPython modules.

import board

import time

import analogio

# Import filters. These files should be copied to the top-level

# directory of the CIRCUITPY filesystem on the Pico.

import linear

# Import the generated classifier, which should copied into CIRCUITPY.

import classifier

#---------------------------------------------------------------

# Set up the hardware.

# Set up an analogs input on ADC0 (GP26), which is physically pin 31.

# E.g., this may be attached to two axes of an accelerometer.

x_in = analogio.AnalogIn(board.A0)

y_in = analogio.AnalogIn(board.A1)

#---------------------------------------------------------------

# Run the main event loop.

# Use the high-precision clock to regulate a precise *average* sampling rate.

sampling_interval = 100000000 # 0.1 sec period of 10 Hz in nanoseconds

next_sample_time = time.monotonic_ns()

while True:

# read the current nanosecond clock

now = time.monotonic_ns()

if now >= next_sample_time:

# Advance the next event time; by spacing out the timestamps at precise

# intervals, the individual sample times may have 'jitter', but the

# average rate will be exact.

next_sample_time += sampling_interval

# Read the ADC values as synchronously as possible

raw_x = x_in.value

raw_y = y_in.value

# apply linear calibration to find the unit gravity vector direction

calib_x = linear.map(raw_x, 26240, 39120, -1.0, 1.0)

calib_y = linear.map(raw_y, 26288, 39360, -1.0, 1.0)

# Use the classifier to label the current state.

label = classifier.classify([calib_x, calib_y])

# Print the data and label for plotting.

print((calib_x, calib_y, label))

# Print a .CSV record while recording training data.

# print(f"4,{calib_x},{calib_y}")

classifier.py

# Decision tree classifier generated using classifier_gen.py

# Input vector has 2 elements.

def classify(input):

if input[1] <= 0.22766199707984924:

if input[0] <= 0.14285700023174286:

if input[1] <= -0.1444310024380684:

if input[0] <= -0.01863360032439232:

if input[0] <= -0.06335414946079254:

if input[0] <= -0.16770199686288834:

return 2

else:

if input[0] <= -0.1279505044221878:

return 2

else:

return 2

else:

return 2

else:

return 3

else:

if input[0] <= -0.09689449891448021:

return 2

else:

if input[1] <= -0.0073440102860331535:

if input[0] <= 0.018633349798619747:

return 0

else:

return 0

else:

if input[1] <= 0.17135900259017944:

if input[1] <= 0.024479600600898266:

return 0

else:

return 0

else:

return 0

else:

if input[1] <= -0.028151899576187134:

return 3

else:

return 3

else:

if input[0] <= 0.07950284983962774:

return 1

else:

if input[1] <= 0.35006099939346313:

return 4

else:

return 4

# tree data

children_left = [ 1, 2, 3, 4, 5, 6,-1, 8,-1,-1,-1,-1,13,-1,15,16,-1,-1,19,20,-1,-1,-1,24,

-1,-1,27,-1,29,-1,-1]

children_right = [26,23,12,11,10, 7,-1, 9,-1,-1,-1,-1,14,-1,18,17,-1,-1,22,21,-1,-1,-1,25,

-1,-1,28,-1,30,-1,-1]

feature = [ 1, 0, 1, 0, 0, 0,-2, 0,-2,-2,-2,-2, 0,-2, 1, 0,-2,-2, 1, 1,-2,-2,-2, 1,

-2,-2, 0,-2, 1,-2,-2]

threshold = [ 0.227662 , 0.142857 ,-0.144431 ,-0.0186336 ,-0.06335415,-0.167702 ,

-2. ,-0.1279505 ,-2. ,-2. ,-2. ,-2. ,

-0.0968945 ,-2. ,-0.00734401, 0.01863335,-2. ,-2. ,

0.171359 , 0.0244796 ,-2. ,-2. ,-2. ,-0.0281519 ,

-2. ,-2. , 0.07950285,-2. , 0.350061 ,-2. ,

-2. ]

Development Tools

The development of this sketch involves several other tools which are not documented:

- A Python script for generating a classifier using scikit-learn: classifier_gen.py

- A set of recorded training data files: data/

The Python scripts use several third-party libraries:

- SciPy: comprehensive numerical analysis library

- scikit-learn: machine learning library built on top of SciPy

- Matplotlib: plotting library for visualizing data