The Raspberry Pi Super Computer was initially constructed for Oracle Open World in October 2019, featuring 1050 Raspberry Pi 3B+ devices organized in four server racks, forming a large square box resembling a famous British police box from a well-known BBC TV series. However, after the event, the Pi Cluster was eventually discarded as e-waste, with some components removed. It remained inactive for two years until May of this year, when it was brought to my garage for a comprehensive refurbishment.

For a chronological account of the creation of the 1050 Raspberry Pi cluster, you can refer to “A Temporal History of The World's Largest Raspberry Pi Cluster.” It all began when Gerald Venzl from Oracle Switzerland brought the 12-node cluster to my attention, and later, Stephen Chin and I collaborated to brainstorm the idea of building a large cluster.

The article delves into how the Pi Cluster faced challenges during the COVID lockdowns and California fires, leading to its eventual disposal as e-waste. However, thanks to Eric Sedlar's intervention, the cluster was saved and ultimately stored at Oracle Labs.

If you prefer a more concise overview, you can watch the original build video of “The World's Largest Raspberry Pi Cluster” by Gerald Venzl, as well as the video “Building the World's Largest Raspberry Pi Cluster,” and “The Big Pi Cluster In My Garage – Part I.”

Furthermore, if you're reading this, it's likely that you'd make a great Luminary. The Luminary program is a recently launched developer group that offers exclusive technical content, free swag, and a supportive community to help you create innovative projects like this cluster. Consider becoming a Luminary today!

Resurrection

Upon the announcement of CloudWorld, we were immediately drawn to the idea of resurrecting The World's Largest Raspberry Pi Super Computer. We proposed several options for its revival:

1. Donating it to the Computer History Museum as a non-functional art piece for Pi-Day.

2. Raffling off pieces of the Pi Cluster, so that winners would receive a free Raspberry Pi 3B+ from one of the World's Largest Raspberry Pi Clusters, especially since the boards have increased in value.

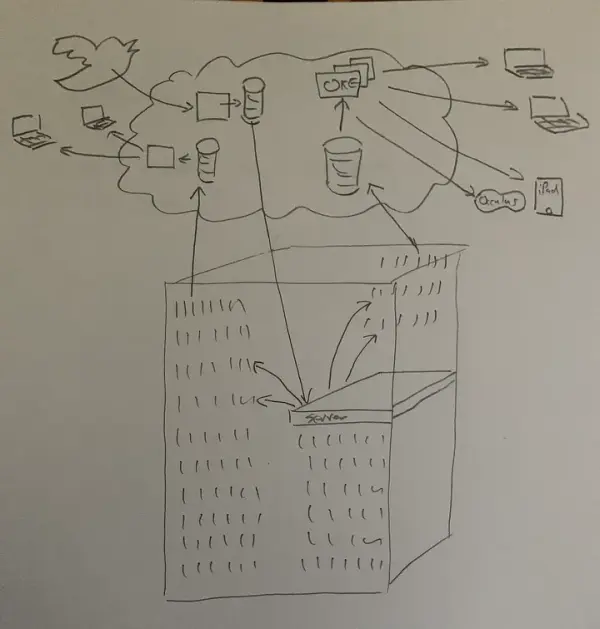

3. Taking this unique opportunity to make history by fixing the Pi Cluster for CloudWorld. We planned to add fans to prevent overheating and demonstrate its capabilities as an “on prem” and “edge” device, showcasing the latest tech developed in our labs. The Pi Cluster would be powered with GraalPython, Java, and Oracle Linux 9, and connected to Oracle Cloud Infrastructure (OCI) through a Site-to-Site VPN for the on-prem setup, making use of microservices, compute instances, Autonomous Database, Service Gateway, and Load Balancer. We envisioned using AR visualizations and running exciting applications, much like when we previously ran SETI@home on the Raspberry Pi Mini Super Computer, an 84-Pi Cluster. To engage the global developer community, we devised a service that would allow anyone to send code to the cluster for running a workload. Our aim was to showcase our Digital Twin AR/VR services through blogs and videos, inspiring developers and students while showcasing Oracle's Free Tier offerings. Additionally, we had the ambition to secure a Guinness Book world record.

Ultimately, we decided on option three and began the search for a new lab to house the Pi Cluster. We collaborated with leaders from various product teams, Raspberry Pi community experts like Jeff Geerling and Eben Upton, and laid out a comprehensive plan. While we cannot divulge all the details at this stage, the foundation has been set, and there is much more to come. This account narrates the journey of getting the Pi Cluster to DevNucleus at CloudWorld and provides a glimpse of what lies ahead. It's worth mentioning that pursuing a Guinness Book world record comes with significant costs, but we are determined to make this project a remarkable success.

The initial phase involved determining the feasibility of the project by assessing the budget and estimating the required work. Our priorities were as follows:

1. Acquiring multiple fans to ensure efficient cooling.

2. Checking for any missing parts and replacing them as needed.

3. Conducting thorough tests to ensure all components were operational.

4. Developing new software to enhance the Pi Cluster's performance.

Although every Pi network had previously booted with Oracle Linux 7 in 2019, we needed to ascertain if everything still worked as expected. We had to ensure we had all the necessary source code, MAC addresses, and other essential elements to replicate the previous setup.

Next, finding an appropriate workspace for the Pi Cluster proved to be a challenge. The ideal location required double doors, close proximity to a freight elevator, sufficient power supply, ample workspace, and an accessible network. Considering the logistical complexities of working remotely on such a project, we examined various Oracle facilities worldwide. However, it became evident that successfully executing the project remotely would necessitate extensive travel, setup, and continuous on-site assistance. After careful consideration and a discussion with my supportive spouse, we concluded that our garage provided the best solution. Thus, the #BigPiClusterInMyGarage initiative began in our own garage, where we had the freedom to work on the project while maintaining ease of access and hands-on involvement.

Adding Fans — Cooling the Raspberry Pi

After the delivery of the Pi Cluster, I immediately began to verify and refine our budget while conducting extensive tests. It became evident that we needed to order replacement parts, primarily fans, to ensure efficient cooling. In total, we acquired 250 new fans, bringing the cluster's total fan count to 257, excluding the fans within switches, servers, and power supplies. To facilitate the installation of these fans, the entire cluster had to be disassembled and reassembled. Although we didn't remove every bolt, each of the 21 Pi 2U banks underwent disassembly, and every 5th Pi was replaced, necessitating the 3D printing of 250 new Pi caddies with brackets for fans. Victor Agreda generously used his newly purchased Ultimaker S5 to print a substantial portion of the fan version caddies.

In addition to installing the fans, we also integrated some easter eggs into the cluster. While sharing the details might diminish their novelty, I will elaborate on them in the Warble section below, as they are too fascinating not to mention. To ensure proper power supply for the fans, we are immensely grateful to Eli Schilling, who played a significant role in creating many of the wiring harnesses.

For a more in-depth account of this process, I have documented some of the work in “A Temporal History of The World’s Largest Raspberry Pi Cluster,” and there are a few videos available for reference.

Operating System — The Smartest Operating System Around

The significance of a robust operating system often goes unnoticed when building infrastructure and hardware, but Oracle Linux has proven to be of exceptional quality. I delve deeper into the operating system's features and capabilities in “A Temporal History of The World’s Largest Raspberry Pi Cluster,” where I am also working on guides to enable network booting for Pi devices at home. Building upon our previous work, we needed to upgrade from Oracle Linux 7 to Oracle Linux 9. However, I must share a secret: the upgrade process wasn't entirely straightforward. Nevertheless, the Oracle Linux team has been incredibly supportive and deserves all the credit for configuring the operating system for the Pi Cluster. I encourage you to explore Oracle Linux 9, as it offers significant benefits and is well worth adopting or upgrading to. One notable change during the upgrade was the addition of an extra security layer, resulting in SSH access being disabled for the root account by default.

I must also mention our approach to booting the Pi devices. Each Pi within the cluster network boots from a single, read-only NFS mount on the server, and we have incorporated a systemd service to execute a bash script during server or Pi boot-up. This streamlined configuration makes managing our running processes almost effortless.

Software and Cloud Services — GraalVM is Fast Running on Client or in the Cloud

The software used in our project is entirely open source and can be accessed through our DevRel GitHub repository. However, I must forewarn you that some components are still under development in the labs and are not available even as a tech preview, so they are not included in the repository. Please be aware that much of the code was written rapidly, so parts of it may not seem entirely coherent. Allow me to explain.

Firstly, let's discuss the software on the cluster. The server runs a Docker container with GraalPython running on top of GraalVM. The Dockerfile is still being improved, so I would advise against copying it directly at this moment. I've reported some bugs, and once the Graal environment variables and CTRL+C work correctly (along with a possible new image for GraalPython), you can dive into the project. For now, treat this as one method of implementation. Notably, running pure Python code under GraalVM has shown a remarkable 8x increase in performance, which is quite impressive.

Each device within the cluster runs a web service. The server broadcasts a UDP message containing its IP address and port, which any device can listen to for auto-discovery and communication with the server. This is an improvement from previous years where the server's IP address was put into an environment variable. Since the IP address doesn't change, hardcoding it could be an option, but there were two concerns. Firstly, anyone working on the project needs to run a test system with their desktop and a simulation of a Pi or a couple of Pis on their desk. Secondly, environment variables are lost when using sudo. The current approach effectively resolves these issues. Once a Pi boots up, it initiates its web server, listens for the UDP message, registers its IP address and MAC address with the server, and awaits tasks. At regular intervals, the server sends a ping to each Pi as a health check to ensure they remain on the list of available Pis.
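
As a rough illustration of the discovery step described above, here is a minimal Python sketch. It is not the project's actual code: the port number, the JSON message layout, and every function name are assumptions made for this example.

```python
import json
import socket

DISCOVERY_PORT = 50000  # hypothetical port, not taken from the project


def encode_announcement(ip: str, port: int) -> bytes:
    """Serialize the server's address into a UDP payload (assumed JSON layout)."""
    return json.dumps({"ip": ip, "port": port}).encode("utf-8")


def decode_announcement(payload: bytes) -> tuple[str, int]:
    """Parse a payload produced by encode_announcement."""
    message = json.loads(payload.decode("utf-8"))
    return message["ip"], int(message["port"])


def broadcast_announcement(ip: str, port: int) -> None:
    """Server side: send one announcement to the local broadcast address."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(encode_announcement(ip, port),
                    ("255.255.255.255", DISCOVERY_PORT))


def wait_for_server(timeout: float = 10.0) -> tuple[str, int]:
    """Pi side: block until an announcement arrives, then return (ip, port).

    Raises a timeout error if nothing is received within `timeout` seconds.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
        sock.bind(("", DISCOVERY_PORT))
        sock.settimeout(timeout)
        payload, _sender = sock.recvfrom(1024)
        return decode_announcement(payload)
```

Because the address travels in the message itself, the same code works on the cluster and on a desk with a simulated Pi, and nothing depends on environment variables surviving sudo.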

Every five seconds, each Pi sends all its data (CPU, memory, temperature, etc.) to a REST endpoint on an Autonomous Database, leveraging the native ORDS support. You can find the documentation for this feature here. I've included some code that lengthens the wait between transmissions when a Pi fails to send its data, with wait times scaling up to 60 seconds. Remarkably, the Autonomous Database handles the substantial volume of data with ease. I experimented with other databases (which I won't name) and encountered significant difficulties, including delays surpassing five seconds.
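
The retry behavior can be sketched roughly as follows. The five-second interval and the 60-second ceiling come from the description above; the doubling rule, the reset on success, and the function names are assumptions for illustration, since the repository code may scale the wait differently.

```python
import time
from typing import Callable

BASE_WAIT = 5.0   # seconds between reports, from the text above
MAX_WAIT = 60.0   # ceiling for the wait time, from the text above


def next_wait(current_wait: float, sent_ok: bool) -> float:
    """Return the delay before the next report.

    Assumption: the wait doubles after each failure and resets on success.
    """
    if sent_ok:
        return BASE_WAIT
    return min(current_wait * 2, MAX_WAIT)


def run_reports(collect: Callable[[], dict],
                send: Callable[[dict], bool],
                rounds: int,
                sleep: Callable[[float], None] = time.sleep) -> list[float]:
    """Send `rounds` reports and return the waits used after each one.

    `collect` gathers the metrics and `send` posts them, returning True on
    success. Both are supplied by the caller; a network error counts as a
    failed send.
    """
    waits = []
    wait = BASE_WAIT
    for _ in range(rounds):
        try:
            ok = send(collect())
        except OSError:
            ok = False
        wait = next_wait(wait, ok)
        waits.append(wait)
        sleep(wait)
    return waits
```

Backing off this way keeps a thousand Pis from hammering the endpoint in lockstep while it is unreachable.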

Digital Twin with AR/VR — Visualize your Data

I have constructed a highly detailed 3D model of the Pi Cluster, which can be accessed here and opened using your preferred 3D software. Our team of AR/VR and cloud experts, including Wojciech Pluta, Victor Martin, Bogdan Farca, and Stuart Coggins, embarked on creating extraordinary AR and VR experiences. They fine-tuned databases, developed APEX applications, and implemented socket.io for streaming. To ensure scalability without interruptions, they utilized a Kubernetes cluster known as OCI OKE, enabling the 3D experiences to reach a wide audience. As a result, anyone can now bring a Digital Twin of the Pi Cluster into their living room. We equipped iPads with AR capabilities and printed QR codes to enable precise tracking of the cluster within millimeters, providing attendees with an interactive view of the cluster's operations. Additionally, the team has constructed a Meta VR version, with further details to be revealed soon. Given that my garage lacked sufficient power to run the entire cluster, we will integrate and test this configuration before the CloudWorld event. While the outcome remains uncertain, I assure you that it will make for an engaging story. I will include a link here once we have published more information on the AR/VR Digital Twin.

OCI Services — At the Center of Every Project is Cloud Infrastructure

In my garage, I've configured the Pi Cluster as an “on-premises” server. I established an isolated subnet using my Ubiquiti Dream Machine Pro, set up a site-to-site VPN to Oracle Cloud Infrastructure (OCI) with the help of a Bastion, and employed a local jump box equipped with two network interfaces: one for the Pi Cluster subnet and the other for the Pi Cluster itself. Consequently, the Pi Cluster appears to the outside world as a single IP address. The process may sound straightforward and effortless, but it did require some testing and adjustments to ensure cable connections remained secure and stable.

However, during CloudWorld, we won't have control over the network, so the Pi Cluster will be functioning as an “edge device.” In this configuration, it will leverage cloud services such as Service Gateway, Load Balancer, and Domain Management. Remarkably, we even have our own domain, which I will delve into in the Warble section below.

If you have any inquiries or need assistance with setting up or utilizing Oracle's Pi Cluster or any related services, feel free to join Oracle's public Slack channel for developers. There, you can ask any question you have, whether it's about the setup process, recommended services to use, or any other topic related to the Pi Cluster. The community in the Slack channel will be more than happy to help and provide you with the answers you seek!

IoT — Specific Lightweight Tasks with Small Inexpensive Microcontrollers

We have incorporated a pair of Arduinos into the cluster as well. The first Arduino is an Arduino Mega equipped with an Ethernet shield. The original code for this Arduino can be accessed in this repository. It operates a web service that controls two solenoids, allowing for remote access to the physical reset and power buttons on the server. I have made some modifications to the software, but haven't published the updated version yet. The older version is still available in the repository.

The second Arduino is identical in hardware, but it serves as a REST server that listens for a JSON payload. This Arduino is connected to a dozen NeoPixels located at the top of the police box structure. The NeoPixels provide a dynamic and eye-catching light display that can be controlled via the JSON payload received by the Arduino's REST server.
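
To give a feel for what a client of that REST server might look like, here is a small Python sketch. The payload schema, the URL, and the function names are all hypothetical, since the real endpoint and its JSON format are not described here; only the count of twelve pixels comes from the text.

```python
import json
import urllib.request

PIXEL_COUNT = 12  # "a dozen NeoPixels", from the text above
# Hypothetical address and path; the real endpoint is not published.
ARDUINO_URL = "http://192.0.2.10/pixels"


def build_pixel_payload(colors):
    """Return a JSON string with one RGB entry per NeoPixel (assumed schema)."""
    if len(colors) != PIXEL_COUNT:
        raise ValueError(f"expected {PIXEL_COUNT} colors, got {len(colors)}")
    pixels = []
    for red, green, blue in colors:
        if not all(0 <= channel <= 255 for channel in (red, green, blue)):
            raise ValueError("each color channel must be between 0 and 255")
        pixels.append({"r": red, "g": green, "b": blue})
    return json.dumps({"pixels": pixels})


def send_pixels(colors, url=ARDUINO_URL):
    """POST the payload to the Arduino and return the HTTP status code."""
    request = urllib.request.Request(
        url,
        data=build_pixel_payload(colors).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status
```

Validating the payload on the client side keeps malformed requests away from a microcontroller that has very little memory for error handling.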

Warble — A Custom Programming Language Designed for the Pi Cluster

We have set up the domain https://warble.withoracle.cloud on Oracle Cloud Infrastructure (OCI), utilizing Domain Management, a Load Balancer, and a Compute Instance. The Compute Instance is running the same software that powers the Pi Cluster – a Docker Container with GraalPython and a web service. While it's not fully operational at the moment, we expect it to go live during CloudWorld, between October 18th and 20th. Keep an eye on this website or follow us on Twitter for updates, as we believe it will be an enjoyable and exciting experience during the event.

Introducing Warble, our custom programming language designed specifically for Twitter and meant to run on the Pi Cluster. While not a fully-fledged language, Warble is optimized to minimize character usage so that you can post a Warble on Twitter and have it executed on the Pi Cluster. We've set up a Python script running on a Compute Instance, utilizing the Twitter API to search for tweets with the hashtag #pi. If a tweet contains an open curly brace at the beginning and a close curly brace at the end, it qualifies as a Warble and is then stored in a database. Here's an example:

#pi{PRINT("hello cluster")}

< hello cluster
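
The qualification rule can be sketched in a few lines of Python. This is an illustration rather than the script we run: the function name is made up, and it assumes the braces must directly wrap everything after the #pi hashtag, as in the example above.

```python
from typing import Optional

HASHTAG = "#pi"


def extract_warble(tweet: str) -> Optional[str]:
    """Return the Warble source inside a qualifying tweet, or None.

    Assumption: a tweet qualifies when, after the hashtag, the remaining
    text starts with an open curly brace and ends with a close curly brace.
    """
    text = tweet.strip()
    # Hashtags are matched case-insensitively.
    if not text.lower().startswith(HASHTAG):
        return None
    body = text[len(HASHTAG):].strip()
    if len(body) >= 2 and body.startswith("{") and body.endswith("}"):
        return body[1:-1]
    return None
```

For instance, `extract_warble('#pi{PRINT("hello cluster")}')` yields the text between the braces, while an ordinary tweet that merely mentions #pi is ignored.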

When the server has available resources, it retrieves a batch of Warbles from the database and identifies a Pi with CPU utilization below 30%. The chosen Pi then receives the Warble for processing. Warble itself is written in Python and converts Warbles into Python code, executing them accordingly. Currently, it uses Python 3, but we are working on a version implemented in GraalPython, leveraging cutting-edge technology that is not yet public-ready. Our research engineers in Oracle Labs, Rodrigo Bruno and Serhii Ivanenko, are actively preparing it for deployment on the cluster.
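
The selection step might look something like the sketch below. The 30% threshold is from the description above; the `PiStatus` record, the function name, and the choice to prefer the least-loaded Pi are assumptions for illustration, as the server may simply take the first Pi under the threshold.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

CPU_THRESHOLD = 30.0  # percent, from the text above


@dataclass
class PiStatus:
    """Hypothetical record the server keeps for each registered Pi."""
    mac: str
    ip: str
    cpu_percent: float


def pick_idle_pi(pis: Iterable[PiStatus],
                 threshold: float = CPU_THRESHOLD) -> Optional[PiStatus]:
    """Return the least-loaded Pi below the threshold, or None if all are busy."""
    idle = [pi for pi in pis if pi.cpu_percent < threshold]
    return min(idle, key=lambda pi: pi.cpu_percent, default=None)
```

When the function returns None, the Warble would stay in the database until a later batch finds a free Pi.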

As of now, the results of Warble executions are posted to an Autonomous Database via a REST API, making the setup straightforward. Stuart Coggins and Jeff Smith have been instrumental in ensuring the system's stability and reliability. We aim to develop an APEX app that will showcase the Warbles and their outcomes, possibly incorporating a leaderboard for custom visualizations.

Imagine the excitement of determining who can calculate Pi to the most digits using one of the World's Largest Raspberry Pi Clusters!