An autonomous drone that uses deep learning to identify whether people are maintaining social distancing. The higher the degree of red, the higher the risk.

Inspiration

For a couple of years, we have been building and flying drones, something we enjoyed and treated as a sport. Last year, our state saw one of the deadliest floods in its history. We were not prepared, and many relief activities depended solely on human inspection and intervention, which put many lives in danger. This incident changed the way we perceived flying. We started looking into autonomous flight and worked on developing and testing various AI-based analytics onboard an autonomous UAV. This continued for about seven months, until all activities were paused and our lives were shaken once again, this time by the deadly coronavirus. The initial months were tough, but with everything at a standstill, we had time to rethink our approach. Law enforcement agents and community health workers were risking their lives for the betterment of our society, so we decided to make their work easier. Our aim is to provide a system that enables surveillance and monitoring of social distancing in public without the risk of direct exposure.

What it does

ASMA Drone is an autonomous drone that uses an ordinary camera to capture a live video stream and onboard edge computing to determine whether people are maintaining social distancing.
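The distancing check itself is not spelled out in this writeup. A minimal sketch, assuming detections have already been converted to pixel bounding boxes, compares every pair of box centres against a distance threshold and shades each person from green towards red according to their number of close contacts. `MIN_DISTANCE_PX`, `max_contacts`, and the function names are hypothetical, and a pixel threshold is only a stand-in for a calibrated ground distance.

```python
import math
from itertools import combinations

# Hypothetical threshold: minimum allowed distance between two people, in pixels.
# A real deployment would need calibration (altitude, camera field of view)
# to convert a distance in metres into pixels; this value is a placeholder.
MIN_DISTANCE_PX = 100.0


def centroid(box):
    """Return the centre (x, y) of a box given as (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    return ((x_min + x_max) / 2.0, (y_min + y_max) / 2.0)


def find_violations(boxes, min_distance=MIN_DISTANCE_PX):
    """Return index pairs (i, j) of people whose centres are closer than min_distance."""
    centres = [centroid(b) for b in boxes]
    violations = []
    for (i, a), (j, b) in combinations(enumerate(centres), 2):
        if math.dist(a, b) < min_distance:
            violations.append((i, j))
    return violations


def risk_color(person_index, violations, max_contacts=3):
    """Map a person's number of close contacts to a BGR color.

    Zero contacts gives pure green; the red channel grows with the number
    of contacts and saturates at max_contacts (a hypothetical cap).
    """
    contacts = sum(person_index in pair for pair in violations)
    level = min(contacts, max_contacts) / max_contacts
    return (0, int(255 * (1 - level)), int(255 * level))  # (B, G, R)
```

For example, three people whose box centres sit at x = 10, 60 and 410 pixels on the same row yield a single violation, `[(0, 1)]`, because only the first pair is under 100 pixels apart; the third person stays green.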

We built a first version on top of a DJI Tello to test feasibility, and it worked flawlessly.

Version 2.0 now flies stably, and we are working on adding autonomous capability.

How we built it

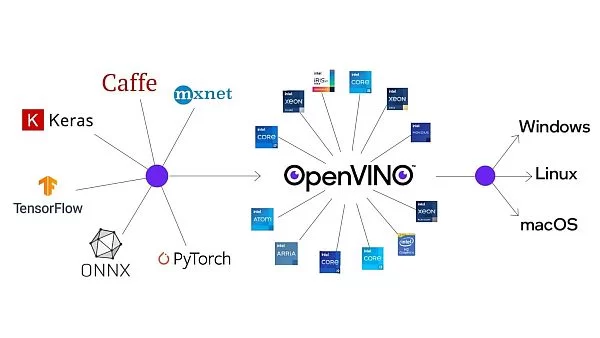

The core of this application uses convolutional neural networks to detect pedestrians. We are working on adding more models, but for the proof of concept we currently use the pre-trained SSD MobileNet V2 person-detection-retail-0013 model from Intel's OpenVINO Model Zoo, which is trained on the MS COCO dataset. We use only the model's “person” class as the pedestrian detector.
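A minimal sketch of the class-extraction step, assuming the model emits the SSD `DetectionOutput` layout used by OpenVINO detection models: rows of `[image_id, label, conf, x_min, y_min, x_max, y_max]` with coordinates normalised to [0, 1]. The person label id, the confidence threshold, and the `extract_people` helper are all assumptions for illustration.

```python
# Each detection row: [image_id, label, conf, x_min, y_min, x_max, y_max],
# with box coordinates normalised to the range [0, 1].
PERSON_LABEL = 1        # assumption: label id of the "person" class
CONF_THRESHOLD = 0.5    # assumption: minimum confidence to keep a detection


def extract_people(detections, frame_width, frame_height,
                   person_label=PERSON_LABEL, threshold=CONF_THRESHOLD):
    """Return pixel boxes (x_min, y_min, x_max, y_max) for confident person detections."""
    boxes = []
    for image_id, label, conf, x_min, y_min, x_max, y_max in detections:
        if image_id < 0:
            break  # a negative image_id marks the end of the valid detections
        if int(label) != person_label or conf < threshold:
            continue  # drop other classes and low-confidence boxes
        boxes.append((
            int(x_min * frame_width), int(y_min * frame_height),
            int(x_max * frame_width), int(y_max * frame_height),
        ))
    return boxes
```

Filtering on a single label keeps the rest of the pipeline independent of how many classes the detector was trained on.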

The output view includes data visualizations that give the user better insight into how well physical distancing measures are being practiced. These analytics and visualizations can help decision-makers identify peak hours, detect high-risk areas, and take effective action to minimize social distancing violations.
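Identifying the peak hour and the high-risk areas reduces to counting violation events per key. A minimal sketch, assuming each violation is logged as a `(timestamp, zone)` pair where `zone` is a hypothetical area label (for example, a map grid cell), could look like this:

```python
from collections import Counter
from datetime import datetime


def most_frequent(keys):
    """Return the most common key, or None if there are no keys."""
    counts = Counter(keys)
    return counts.most_common(1)[0][0] if counts else None


def peak_hour(events):
    """Hour of day (0-23) with the most logged violations."""
    return most_frequent(ts.hour for ts, _zone in events)


def riskiest_zone(events):
    """Zone label with the most logged violations."""
    return most_frequent(zone for _ts, zone in events)


if __name__ == "__main__":
    # Hypothetical log: three of the four violations fall between 17:00 and 18:00.
    log = [
        (datetime(2020, 6, 1, 9, 15), "market"),
        (datetime(2020, 6, 1, 17, 5), "bus-stand"),
        (datetime(2020, 6, 1, 17, 40), "market"),
        (datetime(2020, 6, 2, 17, 10), "market"),
    ]
    print(peak_hour(log), riskiest_zone(log))
```

Run on the sample log, this prints `17 market`.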

The mobile agent is an autonomous UAV. It is small and compact, fitting within a 250 mm × 250 mm × 350 mm envelope. An onboard Raspberry Pi coupled with a 4G module enables remote operation. The system uses rosbridge and roslibjs to provide a remote terminal, which lets us communicate with the UAV from a web browser. A UBlox 7M GPS module together with the ArduPilot autopilot stack enables autonomous navigation.
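rosbridge exposes ROS topics over a WebSocket using JSON operations defined by the rosbridge v2 protocol, and roslibjs builds these messages on the browser side. To make the exchange concrete, the sketch below constructs the same `advertise`, `publish` and `subscribe` operations in Python. The topic name `/asma/status` is hypothetical, and `std_msgs/String` is used only as an example message type.

```python
import json


def advertise(topic, msg_type):
    """rosbridge 'advertise' operation: announce that we will publish on a topic."""
    return json.dumps({"op": "advertise", "topic": topic, "type": msg_type})


def publish(topic, msg):
    """rosbridge 'publish' operation: the ROS message is sent as a JSON object."""
    return json.dumps({"op": "publish", "topic": topic, "msg": msg})


def subscribe(topic, msg_type):
    """rosbridge 'subscribe' operation: ask the server to forward a topic's messages."""
    return json.dumps({"op": "subscribe", "topic": topic, "type": msg_type})


# Hypothetical status topic carrying a std_msgs/String message.
frames = [
    advertise("/asma/status", "std_msgs/String"),
    publish("/asma/status", {"data": "3 people detected, 1 violation"}),
]
```

Sending these strings over a WebSocket connection to the rosbridge server (which listens on port 9090 by default) is left out to keep the sketch dependency-free.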

OpenVINO Usage

Deep learning inference in this project is done with OpenVINO, which lets us offload it to an Intel Neural Compute Stick (exposed to OpenVINO as the MYRIAD device). Because OpenVINO abstracts the target hardware, the system can scale to other Intel accelerators supported by OpenVINO, such as FPGAs, while using the same code.
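OpenVINO selects hardware by a device name string. A minimal sketch of keeping the inference code device-agnostic, assuming a preference order of our own choosing, picks the first preferred device from the list OpenVINO reports as available. `pick_device` and `PREFERRED_DEVICES` are hypothetical names; the commented OpenVINO calls show where the result would plug in.

```python
# Assumed preference order: try the Neural Compute Stick first, then fall back
# to the CPU so the same script also runs on a development machine.
PREFERRED_DEVICES = ("MYRIAD", "CPU")


def pick_device(available, preferred=PREFERRED_DEVICES):
    """Return the first available device that matches the preference order."""
    for wanted in preferred:
        for device in available:
            # Device names can carry a suffix such as "GPU.0" when several
            # devices of one type are present, so compare the base name only.
            if device.split(".")[0] == wanted:
                return device
    raise RuntimeError(f"none of {list(preferred)} found in {list(available)}")


# With the OpenVINO Python API (releases that still ship the MYRIAD plugin),
# this might be used as follows; "model.xml" is a placeholder path:
#   from openvino.runtime import Core
#   core = Core()
#   device = pick_device(core.available_devices)
#   compiled = core.compile_model(core.read_model("model.xml"), device)
```

Only the device string changes between targets, which is what allows the same inference code to move between a CPU, a Neural Compute Stick, or another supported accelerator.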

Installation Instructions

All installation steps are described in detail in the GitHub repository.

Challenges we ran into

Initially, we had a hard time setting up the drone because parts arrived late, owing to logistics delays caused by the COVID-19 lockdowns. After all the parts arrived, it took us almost two days to get the drone to fly fully stably. The self-designed frame was first fabricated from PLA using FDM 3D printing, but it was not as strong as we had hoped. The fabrication cycle took a few iterations, and the pandemic delayed it further.

In terms of computation, our initial results on the Raspberry Pi 4 alone were extremely poor, with a very low frame rate (FPS). To make edge computing practical, we added an Intel Neural Compute Stick with OpenVINO, and the frame rate is now much higher.
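Comparing the Raspberry Pi alone against the Neural Compute Stick depends on how the frame rate is measured. A minimal sketch of a rolling FPS meter, assuming it is called once per processed frame, is shown below; the `FpsMeter` name and the 30-frame window are illustrative choices, not the project's code.

```python
import time
from collections import deque


class FpsMeter:
    """Rolling frames-per-second estimate over the most recent `window` frames."""

    def __init__(self, window=30, clock=time.perf_counter):
        self.clock = clock
        # Keep window + 1 timestamps so that `window` frame intervals are covered.
        self.stamps = deque(maxlen=window + 1)

    def tick(self):
        """Record one processed frame and return the current FPS estimate."""
        self.stamps.append(self.clock())
        if len(self.stamps) < 2:
            return 0.0  # not enough frames yet to measure an interval
        elapsed = self.stamps[-1] - self.stamps[0]
        return (len(self.stamps) - 1) / elapsed if elapsed > 0 else 0.0
```

Injecting the clock keeps the class testable, and the rolling window means a slow start-up frame stops affecting the estimate once it falls out of the window.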

What we learned

Building this project gave us hands-on experience developing autonomous systems; before this, our experience was limited to simulations. We also picked up various fabrication techniques and assembly skills along the way, and learned to compare the hardware available to us and choose what best fits our application and our constraints.

Source: ASMAv2 with OpenVINO