Inspiration

Recently, I've been experimenting with training TensorFlow models on image datasets using Edge Impulse. While thinking about Halloween, I kept coming back to a problem nearly everyone runs into: why does it take so long to sort through and organize all of the candy? Picking up each piece individually and chucking it into its respective pile takes far too much effort, so I wanted to automate the process with machine learning.

Principle of Operation

The theory goes as follows: the user places a piece of candy onto a platform, where a camera scans it. A model classifies that image and produces a label for what it thinks the candy is. Based on the result, a pile is chosen and the platform moves to that location. Once it arrives, a pushing mechanism shoves the candy into the pile, and the platform returns to its starting position so the cycle can repeat.
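The cycle above maps naturally onto a small control loop. The sketch below is a hypothetical structure, not the project's actual code: every hardware action (`wait_for_candy`, `capture`, `classify`, `move_to`, `push`) is an assumed callable passed in, so the sequencing can be checked without a camera or motors, and position `0` as the home/scan position is likewise an assumption.

```python
from enum import Enum, auto
from typing import Callable, Dict


class Step(Enum):
    """Stages of one sorting cycle, in the order they happen."""
    WAIT_FOR_CANDY = auto()
    SCAN = auto()
    CLASSIFY = auto()
    MOVE_TO_PILE = auto()
    PUSH = auto()
    RETURN_HOME = auto()


def run_cycle(
    wait_for_candy: Callable[[], None],
    capture: Callable[[], bytes],
    classify: Callable[[bytes], str],
    pile_for_label: Dict[str, int],
    move_to: Callable[[int], None],
    push: Callable[[], None],
    home: int = 0,
    log: Callable[[Step], None] = lambda step: None,
) -> int:
    """Run one wait -> scan -> classify -> move -> push -> return cycle.

    All hardware actions are hypothetical hooks passed in as callables.
    Returns the pile position the candy was pushed into.
    """
    log(Step.WAIT_FOR_CANDY)
    wait_for_candy()
    log(Step.SCAN)
    image = capture()
    log(Step.CLASSIFY)
    label = classify(image)
    pile = pile_for_label[label]
    log(Step.MOVE_TO_PILE)
    move_to(pile)
    log(Step.PUSH)
    push()
    log(Step.RETURN_HOME)
    move_to(home)  # assumed: the home position is where candy gets scanned
    return pile


if __name__ == "__main__":
    # Dry run with stand-ins for the camera, model, and motors.
    moves = []
    pile = run_cycle(
        wait_for_candy=lambda: None,
        capture=lambda: b"fake image",
        classify=lambda image: "reeses",
        pile_for_label={"kitkat": 1, "sour patch": 2, "twizzler": 3, "reeses": 4},
        move_to=moves.append,
        push=lambda: None,
        log=print,
    )
    print(pile, moves)
```

Injecting the actions keeps the ordering logic in one place; on the real machine each hook would wrap the camera, the classifier call, and the serial commands to the motor controller.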

Setting Up Edge Impulse

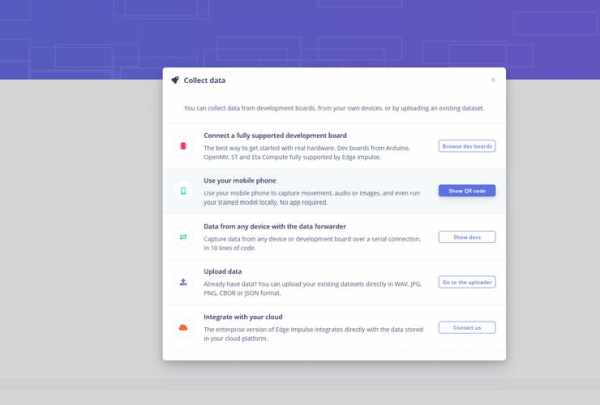

To gather training data and produce a TensorFlow model, I went with Edge Impulse. I began by creating a new project called “candySorter” and then heading to the Devices page. Edge Impulse has a nice feature where you can import many types of data and automatically infer their labels from the filename alone, so I wrote a simple Python script that takes a picture with the Pi's camera and saves it under the desired label when a button is pressed.
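A capture script along those lines might look like the sketch below. It assumes the Edge Impulse uploader takes everything before the first `.` in a filename as the label (so `kitkat.0007.jpg` is labeled `kitkat`), and it uses `gpiozero.Button` plus the legacy `picamera` library; on newer Raspberry Pi OS releases `picamera2` replaces `picamera`. The GPIO pin, resolution, and zero-padded numbering are placeholders, not the author's values.

```python
import time
from pathlib import Path


def capture_path(label: str, index: int, out_dir: Path) -> Path:
    """Build a path such as out_dir/kitkat.0007.jpg.

    Assumption: Edge Impulse infers the label from everything before the
    first '.' in the filename, so the label itself must not contain a period.
    """
    if "." in label:
        raise ValueError(f"label {label!r} must not contain '.'")
    return out_dir / f"{label}.{index:04d}.jpg"


def collect(label: str, out_dir: Path, count: int,
            start_index: int = 0, button_pin: int = 17) -> None:
    """Save one photo per button press. Only runs on a Raspberry Pi.

    button_pin (BCM numbering) and the 640x480 resolution are placeholders.
    """
    # Imported here so capture_path() also works on machines without a Pi.
    from gpiozero import Button
    from picamera import PiCamera

    out_dir.mkdir(parents=True, exist_ok=True)
    button = Button(button_pin)
    with PiCamera() as camera:
        camera.resolution = (640, 480)
        time.sleep(2)  # let the sensor settle its exposure and white balance
        for i in range(start_index, start_index + count):
            button.wait_for_press()
            path = capture_path(label, i, out_dir)
            camera.capture(str(path))
            print(f"saved {path}")
```

For example, `collect("kitkat", Path("dataset"), count=50)` would save fifty labeled photos; passing a higher `start_index` on a later run avoids overwriting earlier files.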

Training and Deploying a Model

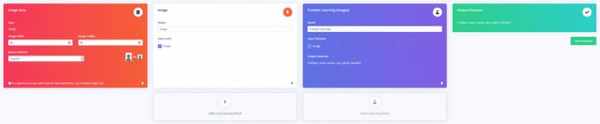

Once enough labeled images had been collected, it was time to train a TensorFlow model on them. For input, I went with an image block that resizes each image to 96×96 pixels. The processing block sets the color depth to RGB rather than grayscale, and the training block uses transfer learning on the MobileNetV2 model. The output is one of the following labels: kitkat, sour patch, twizzler, or reeses.
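Choosing RGB over grayscale triples the amount of data the network receives per image. This is plain arithmetic on the 96×96 input, so MobileNetV2's input layer is 96×96×3:

$$
96 \times 96 \times 3 = 27{,}648 \text{ values (RGB)} \qquad \text{vs.} \qquad 96 \times 96 \times 1 = 9{,}216 \text{ values (grayscale)}
$$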

After training finished, I deployed the model as a standalone WebAssembly library that can be called from a Node.js source file.

Processing Images

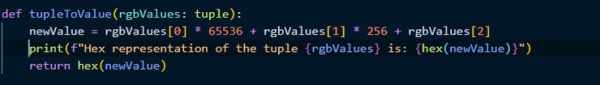

The system uses a Raspberry Pi 4 to capture an image with the Raspberry Pi Camera Module, but the classifier can't use that image directly; it needs an array of pixel values of a fixed size instead. So the capture is saved to a BytesIO stream and resized to 96×96 pixels. It is then flattened into a string of pixel values: each pixel's RGB tuple is packed into a single hex value and appended to a list, which becomes the string passed to the classifier.
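A minimal sketch of that conversion, assuming Pillow for decoding and resizing and assuming the classifier expects comma-separated `0xRRGGBB` values (one per pixel, row by row). The helper names are hypothetical. Packing is kept in its own function so it can be checked without an image library.

```python
import io

SIZE = (96, 96)  # model input resolution chosen in Edge Impulse


def pack_pixels(pixels) -> str:
    """Pack (r, g, b) tuples into comma-separated '0xRRGGBB' values."""
    return ",".join(f"0x{r:02x}{g:02x}{b:02x}" for r, g, b in pixels)


def image_to_features(jpeg_bytes: bytes) -> str:
    """Resize a captured JPEG to 96x96 RGB and return the packed feature string.

    Requires Pillow; imported here so pack_pixels() works without it.
    """
    from PIL import Image

    img = Image.open(io.BytesIO(jpeg_bytes)).convert("RGB").resize(SIZE)
    raw = img.tobytes()  # r, g, b, r, g, b, ... in row-major order
    return pack_pixels(zip(raw[0::3], raw[1::3], raw[2::3]))
```

On the Pi, the JPEG bytes could come from `camera.capture(stream, format="jpeg")` into an `io.BytesIO` object, followed by `stream.getvalue()`. Note that `resize` stretches the image to a square; cropping to the center first would preserve the candy's aspect ratio, if that matches how the training images were processed.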

Classifying an Object

The Node.js source file is launched with Python's subprocess.run() function, which passes the color data string to the program as an argument; the program then parses it and hands it to the model. Once the model has decided what the object is, the program writes a JSON string to stdout, which is captured in a Python variable and parsed into a dictionary. Finally, the label with the highest score is extracted and used to determine which bin the candy should go into. This bin number is then sent over USB to the waiting Arduino Mega 2560.
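The Python side of that hand-off could be sketched as follows. The script name `run-impulse.js`, the JSON shape `{"results": [{"label": ..., "value": ...}]}`, the label-to-bin mapping, and the newline-terminated serial format are all assumptions for illustration. Passing the features as a single command-line argument is workable here: a 96×96 image packs to about 83 KB of text, below the 128 KiB per-argument limit on a typical Linux system.

```python
import json
import subprocess

# Hypothetical label -> bin mapping; the real assignment depends on the build.
BIN_FOR_LABEL = {"kitkat": 1, "sour patch": 2, "twizzler": 3, "reeses": 4}


def best_label(classifier_stdout: str) -> str:
    """Parse the classifier's JSON and return the highest-scoring label.

    Assumes output shaped like
    {"results": [{"label": "kitkat", "value": 0.91}, ...]}.
    """
    parsed = json.loads(classifier_stdout)
    top = max(parsed["results"], key=lambda r: r["value"])
    return top["label"]


def classify(features: str, script: str = "run-impulse.js") -> str:
    """Run the WebAssembly model through Node.js and return the top label.

    The script name is a placeholder for whatever file wraps the library.
    """
    proc = subprocess.run(
        ["node", script, features],
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if node exits non-zero
    )
    return best_label(proc.stdout)


def send_bin(link, bin_number: int) -> None:
    """Write the bin number as one ASCII line to an open serial link."""
    link.write(f"{bin_number}\n".encode("ascii"))
```

On the Pi, `link` could be a pyserial `serial.Serial("/dev/ttyACM0", 9600, timeout=1)` opened once at startup; opening the port usually resets an Arduino Mega, so reopening it for every piece of candy risks losing the first write. The Arduino sketch would need to read the same format, for example with `Serial.parseInt()`.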