Kindbot packs an app, sensors, voice control, and state-of-the-art computer vision to eliminate guesswork and maximize yields.

Watch Kindbot grow at kindbot.io, on Twitter (@Kindbot_io), and on Instagram (@kindbot).

Plant Diagnosis Service: buddy.kindbot.io

Download Buddy for Android from the Google Play Store (coming soon to iOS).

The Dawn of Kindbot

At the start of 2018, we began developing a plant monitor to explore home-grown cannabis in California. Cannabis flowers are valued at an average of $320 per ounce and take months to develop, even under ideal growing conditions.

Typically, home-grow enthusiasts set up small spaces or tents equipped with HID/LED lights, fans for ventilation, AC for temperature control, and irrigation pumps for hydroponic feeding.

Growers often rely on timers to schedule all of these appliances. Excess heat is a recurring challenge to maintaining the stable conditions in which flowers thrive.

Beyond this equipment, growing great produce requires some domain knowledge in biology and plant science. Forums and pricey apps help fill the knowledge gap, but their advice is seldom on-demand and is frequently ill-informed, skewed either by how an issue is framed to the advisor or by the limited experiences of those offering advice.

Existing environmental controllers are geared toward commercial operations, both in their pricing and in their assumptions about the user experience.

With Kindbot, we developed a system that maintains ideal growing conditions, leveraging deep learning for both environmental control and plant health diagnostics.

To make Kindbot the most powerful and accessible environmental controller, we keep the hardware design simple and lean on computer vision with the Raspberry Pi camera (picamera).

Ultimately, we have the ideal platform to support:

- maintaining stable, optimal environmental control

- remote access with photos and dashboard via mobile app

- rich data logging & smart notifications

Building Kindbot

We began with the compact and flexible Raspberry Pi Zero single-board computer and explored a range of atmospheric sensors, including the DHT11, Si7021, and BME280, before settling on the BME280.

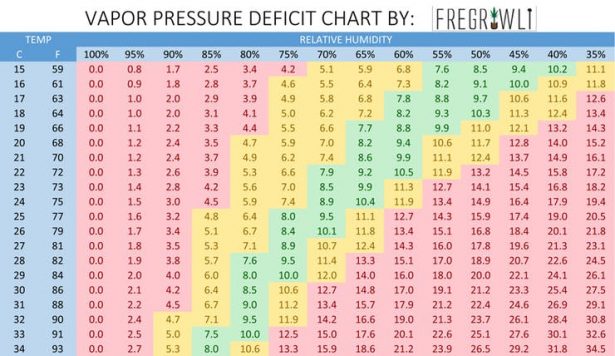

At a bare minimum, we need temperature and humidity readings. From these values, we turn to the Arrhenius equations to calculate the vapor-pressure deficit (VPD). VPD puts temperature and humidity into a physical frame of reference: it measures the drying pressure the air exerts on the plant, which drives transpiration and, indirectly, photosynthesis.
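A minimal sketch of the VPD calculation follows. It assumes the Tetens approximation for saturation vapor pressure (one common exponential form; the exact equations Kindbot uses aren't given here) and treats air temperature as a stand-in for leaf temperature. The function names are illustrative.

```python
import math


def saturation_vapor_pressure_kpa(temp_c: float) -> float:
    """Tetens approximation of saturation vapor pressure over water, in kPa."""
    return 0.61078 * math.exp(17.27 * temp_c / (temp_c + 237.3))


def vpd_kpa(temp_c: float, relative_humidity: float) -> float:
    """Vapor-pressure deficit in kPa from air temperature (C) and RH (%).

    Assumption: leaf temperature equals air temperature.
    """
    svp = saturation_vapor_pressure_kpa(temp_c)
    actual_vp = svp * relative_humidity / 100.0
    return svp - actual_vp


def f_to_c(temp_f: float) -> float:
    """Convert Fahrenheit to Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


if __name__ == "__main__":
    # Example: a 74 F tent at 55% relative humidity.
    print(f"VPD: {vpd_kpa(f_to_c(74.0), 55.0):.2f} kPa")
```

Because the deficit is the gap between saturated and actual vapor pressure, VPD falls to zero at 100% RH and grows quickly with temperature at a fixed RH.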

With this statistic in hand, we can target different VPD ranges to best support each stage of the plant's natural growth cycle.

Logging our sensor data with sqlite3 revealed sporadic readings that were wildly anomalous. A robust controller needs to detect and handle this kind of error.
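A minimal sketch of sensor logging with Python's built-in `sqlite3` module. The table layout, function names, and the "plausible range" query used to surface suspect readings are hypothetical illustrations, not Kindbot's actual schema.

```python
import sqlite3
import time
from typing import List, Optional, Tuple

Row = Tuple[float, float, float]  # (timestamp, temp_c, humidity)


def open_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the readings database; ':memory:' is handy for testing."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS readings (
               ts REAL NOT NULL,
               temp_c REAL NOT NULL,
               humidity REAL NOT NULL
           )"""
    )
    return conn


def log_reading(conn: sqlite3.Connection, temp_c: float, humidity: float,
                ts: Optional[float] = None) -> None:
    """Insert one reading; the connection context manager commits on success."""
    with conn:
        conn.execute(
            "INSERT INTO readings (ts, temp_c, humidity) VALUES (?, ?, ?)",
            (time.time() if ts is None else ts, temp_c, humidity),
        )


def recent_readings(conn: sqlite3.Connection, since_ts: float) -> List[Row]:
    """Return readings at or after since_ts, oldest first."""
    return conn.execute(
        "SELECT ts, temp_c, humidity FROM readings WHERE ts >= ? ORDER BY ts",
        (since_ts,),
    ).fetchall()


def suspect_readings(conn: sqlite3.Connection, min_c: float, max_c: float) -> List[Row]:
    """Surface readings outside a (hypothetical) physically plausible band."""
    return conn.execute(
        "SELECT ts, temp_c, humidity FROM readings "
        "WHERE temp_c NOT BETWEEN ? AND ? ORDER BY ts",
        (min_c, max_c),
    ).fetchall()
```

Querying the log this way makes sporadic spikes easy to spot before deciding how the controller should treat them.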

Since our goal is to maintain stable growing conditions, we adopt a simple 'temperature continuity in time' assumption. Simply put, we ignore readings that deviate too much over a short time scale.
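One way to express the continuity assumption is a rate-of-change filter, sketched below. The `ContinuityFilter` class and its `max_rate` threshold (units per second) are hypothetical; the right threshold depends on the sensor and the space.

```python
from typing import Optional, Tuple


class ContinuityFilter:
    """Reject readings that change faster than max_rate per second
    relative to the last accepted reading."""

    def __init__(self, max_rate: float):
        self.max_rate = max_rate
        # (timestamp, value) of the last reading we trusted
        self.last: Optional[Tuple[float, float]] = None

    def accept(self, ts: float, value: float) -> bool:
        if self.last is None:
            # Nothing to compare against yet, so trust the first reading.
            self.last = (ts, value)
            return True
        last_ts, last_value = self.last
        # Guard against zero or negative elapsed time.
        elapsed = max(ts - last_ts, 1e-9)
        if abs(value - last_value) / elapsed > self.max_rate:
            return False  # implausibly fast change: treat as a sensor glitch
        self.last = (ts, value)
        return True
```

Because the allowed change grows with the time since the last accepted reading, a genuine but gradual shift in temperature is eventually accepted rather than locked out forever. One caveat: a glitch on the very first reading is trusted, so a real deployment might seed the filter from a short median of readings.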

Once we can trust and log our readings, we can whip up a Slack bot to report current conditions, send SMS notifications with Twilio when conditions fall out of the target range, or email periodic reports that give an overview to scan for anomalies indicative of a problem.
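A sketch of the "out of target range" check, with the delivery channel left pluggable. `RangeAlarm` and its message format are hypothetical; the `notify` callback could wrap a Twilio SMS send, a Slack post, or an email, none of which is implemented here.

```python
from typing import Callable, Optional


class RangeAlarm:
    """Call notify() when a reading leaves [low, high], and again when it returns.

    Alerting only on transitions keeps a tent that is stuck out of range
    from sending a message on every polling cycle.
    """

    def __init__(self, name: str, low: float, high: float,
                 notify: Callable[[str], None]):
        self.name = name
        self.low = low
        self.high = high
        self.notify = notify
        self.in_range: Optional[bool] = None  # unknown until the first reading

    def update(self, value: float) -> None:
        now_in_range = self.low <= value <= self.high
        if now_in_range != self.in_range:
            if not now_in_range:
                self.notify(f"{self.name} out of range: {value:.1f} "
                            f"(target {self.low}-{self.high})")
            elif self.in_range is False:
                # Only announce recovery if we previously announced a problem.
                self.notify(f"{self.name} back in range: {value:.1f}")
        self.in_range = now_in_range
```

For example, `RangeAlarm("temperature_f", 70.0, 78.0, print)` prints one message when the tent first exceeds 78 and another when it recovers.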

While designing Kindbot, we tested the YL-69 sensor to probe soil moisture and the TSL-2561 to measure light intensity, before relying more heavily on the camera to streamline the device profile.

In our efforts to control the most important environmental parameters, we even developed a sister device, the Budtender, to perform pH control and autodosing of nutrients.

While emphasizing a modern consumer-electronics aesthetic over that of traditional environmental controllers, we explored integration with smart home devices. We've dabbled in voice user interfaces (VUI), running a flask-ask server for Alexa Smart Home Skills and using flask-assistant for Google Home Assistant Actions.

Ultimately, we aim for a very simple design and move our UI to mobile on both iOS and Android.

Kindbot Cool

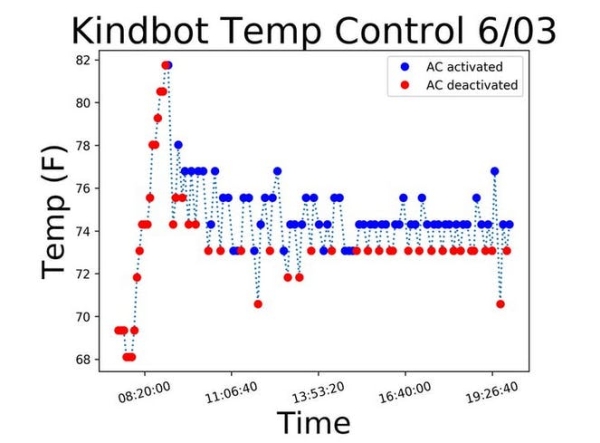

In the end, we want more than logging and notifications: we want control. One simple idea is to periodically check the latest temperature reading and make an 'on-off' decision based on whether the temperature exceeds a predetermined threshold. That decision determines whether the AC runs over the next cycle, until we reevaluate in N minutes. However, this approach often leads to undesirable oscillations in temperature.
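The on-off rule is easy to write down, and a toy simulation shows the oscillation. The heating and cooling rates below are hypothetical placeholders, not measurements from a real tent; in practice, sensor and thermal lag tend to make the swings larger.

```python
from typing import List


def ac_should_run(temp_f: float, setpoint_f: float) -> bool:
    """Simple on-off rule: run the AC for the next cycle if it's too warm."""
    return temp_f > setpoint_f


def simulate(cycles: int, start_f: float = 78.0, setpoint_f: float = 74.0,
             heat_per_cycle: float = 1.0, cool_per_cycle: float = 2.5) -> List[float]:
    """Toy tent model with hypothetical rates: the lights add a fixed amount
    of heat every cycle, and the AC removes a fixed amount while running."""
    temp = start_f
    history = []
    for _ in range(cycles):
        ac_on = ac_should_run(temp, setpoint_f)
        temp += heat_per_cycle - (cool_per_cycle if ac_on else 0.0)
        history.append(temp)
    return history


if __name__ == "__main__":
    # The temperature never settles; it keeps bouncing around the set point.
    print(simulate(10))
```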

The standard approach is PID (proportional-integral-derivative) control, and well-documented heuristics exist for tuning PID gains. With some trial and error, we can achieve reasonable stability around a set point of 74 °F without much overshoot when the lights kick on each morning.
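A minimal discrete PID sketch for cooling. Since an AC unit is typically on or off, this version assumes the clamped PID output is used as a duty cycle: the fraction of the next N-minute cycle the AC runs (time-proportioning). The class name, the integral clamp, and any gains you pass in are hypothetical and would need tuning for a real space.

```python
from typing import Optional


class CoolingPID:
    """Discrete PID controller that outputs an AC duty cycle in [0, 1]."""

    def __init__(self, kp: float, ki: float, kd: float, setpoint: float,
                 dt: float, integral_limit: float = 100.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.setpoint = setpoint
        self.dt = dt  # cycle length, e.g. in minutes
        self.integral_limit = integral_limit
        self.integral = 0.0
        self.prev_error: Optional[float] = None

    def update(self, temperature: float) -> float:
        # Positive error means the space is too warm, so more cooling is needed.
        error = temperature - self.setpoint
        # Clamp the accumulated integral to limit windup during long saturation.
        self.integral = max(-self.integral_limit,
                            min(self.integral + error * self.dt, self.integral_limit))
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / self.dt
        self.prev_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Cooling can't be negative, and the AC can't run more than the full cycle.
        return max(0.0, min(output, 1.0))
```

Usage: with a 5-minute cycle, `minutes_on = pid.update(current_temp_f) * 5.0` decides how long to run the AC before the next reading.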

We also explored applications of deep learning by reframing our temperature control problem as a game within a reinforcement learning paradigm.

For example, imagine placing an agent in an environment where the allowable actions are to turn the AC on or off for the next N-minute cycle. At the beginning of each cycle, we evaluate the state, which may include temperature, humidity, and any other important recent environmental statistics.
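To make the framing concrete, here is a toy environment with the two actions described above. `ToyTentEnv`, its made-up heating and cooling rates, and the random-walk humidity are hypothetical placeholders for a real tent; the point is only the shape of the interface (state in, action in, next state out).

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class TentState:
    temp_f: float
    humidity: float
    ac_on: bool


class ToyTentEnv:
    """Hypothetical tent simulator. Actions: 0 = AC off, 1 = AC on for the next cycle."""

    ACTIONS = (0, 1)

    def __init__(self, start_f: float = 78.0, heat: float = 1.0, cool: float = 2.5,
                 noise: float = 0.2, seed: Optional[int] = None):
        self.rng = random.Random(seed)
        self.heat, self.cool, self.noise = heat, cool, noise
        self.state = TentState(start_f, 55.0, False)

    def step(self, action: int) -> TentState:
        if action not in self.ACTIONS:
            raise ValueError(f"unknown action {action!r}")
        ac_on = action == 1
        # Made-up dynamics: lights warm the tent, the AC cools it, plus sensor noise.
        delta = self.heat - (self.cool if ac_on else 0.0) + self.rng.gauss(0.0, self.noise)
        # Placeholder humidity: a small clamped random walk.
        humidity = min(100.0, max(0.0, self.state.humidity + self.rng.gauss(0.0, self.noise)))
        self.state = TentState(self.state.temp_f + delta, humidity, ac_on)
        return self.state
```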

If the temperature is on target, within a small tolerance of the set point, the agent is rewarded. If the temperature falls outside that tolerance, the agent is penalized in proportion to how far off it is.
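The reward rule can be sketched as a small function. The default set point, the 1 °F tolerance, the +1 reward, and `penalty_scale` are hypothetical choices; this version penalizes in proportion to the distance from the set point, though measuring from the edge of the tolerance band is an equally reasonable reading.

```python
def reward(temp_f: float, setpoint_f: float = 74.0, tolerance_f: float = 1.0,
           penalty_scale: float = 1.0) -> float:
    """+1 when within setpoint +/- tolerance; otherwise a penalty
    proportional to how far the temperature is from the set point."""
    error = abs(temp_f - setpoint_f)
    if error <= tolerance_f:
        return 1.0
    return -penalty_scale * error
```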

An agent like this can learn a policy function online, specializing environmental control to its own grow space, using REINFORCE, a Monte Carlo policy-gradient algorithm.
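A minimal REINFORCE sketch, assuming a logistic (linear) policy over hand-picked state features as a stand-in for the neural-network policy the text alludes to. `LogisticPolicy`, the mean-return baseline, and the learning rate are illustrative choices. Each update uses one finished episode of `(features, action, reward)` steps and nudges the weights along the sum of $G_t \nabla_\theta \log \pi(a_t \mid s_t)$.

```python
import math
import random
from typing import List, Sequence, Tuple

Step = Tuple[Sequence[float], int, float]  # (features, action, reward)


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class LogisticPolicy:
    """pi(AC on | s) = sigmoid(w . s + b); a linear stand-in for a deep policy."""

    def __init__(self, n_features: int, learning_rate: float = 0.01):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = learning_rate

    def prob_on(self, x: Sequence[float]) -> float:
        return sigmoid(sum(wi * xi for wi, xi in zip(self.w, x)) + self.b)

    def act(self, x: Sequence[float], rng: random.Random) -> int:
        """Sample 1 (AC on) or 0 (AC off) from the current policy."""
        return 1 if rng.random() < self.prob_on(x) else 0

    def reinforce_update(self, episode: List[Step], gamma: float = 0.99) -> None:
        if not episode:
            return
        # Discounted return G_t for every step, accumulated backwards in time.
        returns = []
        g = 0.0
        for _, _, r in reversed(episode):
            g = r + gamma * g
            returns.append(g)
        returns.reverse()
        # Mean-return baseline reduces gradient variance without biasing it.
        baseline = sum(returns) / len(returns)
        grad_w = [0.0] * len(self.w)
        grad_b = 0.0
        for (x, a, _), g_t in zip(episode, returns):
            # For a Bernoulli-logistic policy, grad log pi(a|x) = (a - p) * [x, 1].
            scale = (a - self.prob_on(x)) * (g_t - baseline)
            for i, xi in enumerate(x):
                grad_w[i] += scale * xi
            grad_b += scale
        # Gradient ascent on expected return.
        self.w = [wi + self.lr * gi for wi, gi in zip(self.w, grad_w)]
        self.b += self.lr * grad_b
```

In an online setting, the agent could act every cycle with `act()`, record features such as the deviation from the set point and the current humidity, and call `reinforce_update()` after each short episode (say, one lights-on period).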